Google search is without a doubt the easiest way to find answers quickly, but it can also spur new questions. Sometimes those questions are sparked by a random autocomplete suggestion, but the company just announced changes to its search engine that will help you find answers to questions you didn’t ask.

At the company’s Search On event Wednesday, Google announced several interesting new features that will turn its search engine into something of a mind reader, including a Google Lens tool that lets you search with both words and photos and a new “Things to know” box that serves up answers to questions commonly related to your search.

The new features are powered by a machine learning model called MUM, or Multitask Unified Model, which can pick up information from formats beyond text, including pictures, audio, and video. MUM can also transfer that knowledge across the 75 languages and counting that it’s trained on.

Using machine learning, Google can now include context outside of standard text-based search terms, which can be helpful if you’re feeling stuck on what to search for and which words or phrases to use. In the coming months, Google search results will start surfacing links that are related to your search but that you might not have considered.

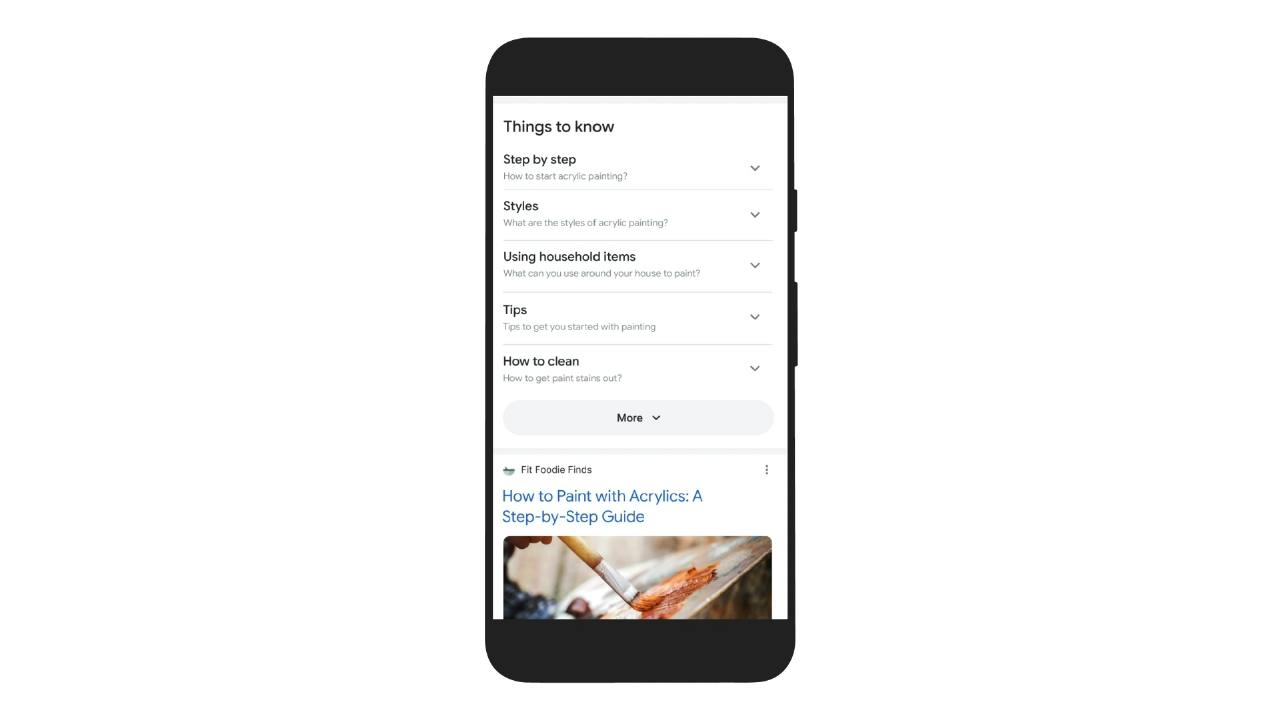

One example the company offered was a search for acrylic paint: You’d type the term into the search bar and then scroll down to a section called “Things to know.” Google will surface a description of acrylic paint and where you can buy it, along with links for how to use acrylic paints, the styles associated with this type of painting, and tips on technique and how to get started with things around the house. In this particular case, Google’s AI will expand your search and lead you down a rabbit hole paved with acrylic paint, or something like that.

Google’s AI is pulling all of this information from relevant search results, and it’s possible that “Things to know” might make you less likely to actually click on links to find more information if search is serving it up in a neat little box. But Google will still link you to the resource it grabbed the info from, so maybe it’s not quite a “saved you a click” situation (though content creators might have reason to be nervous).

Google Lens will use machine learning to let you ask questions about photos with real-time visual search. For example, if you see a photo of a sweater, you can use Lens to ask for other types of clothing items with the same pattern.

However, perhaps the most practical use for this feature is identifying something specific that you’re not sure how to phrase in a search. Google demonstrated this with a photo of a bike with a broken derailleur, which I didn’t even realise was a part of the bike until this moment. You’d point the Google Lens-enabled camera toward the bike’s broken part, then ask Google how to fix it without having to name the part. These features for Google Lens won’t be available until early next year, and it’s unclear whether they will be baked into the standalone Google Lens app or inside an Android phone’s default Camera app.

Google is also using machine learning to recognise moments in a video and identify topics related to the scene. For instance, if you’re watching a video on a specific animal not mentioned in the video title or description, Google can still pick up on the species and return related links. Google says this particular feature will roll out in the coming weeks, with more “visual enhancements” in the coming months.

Finding related images will be easier with a newly redesigned image search results page. This should help when you’re looking for project ideas or making other related searches, and you can try it now in Google search on the web.

Two more basic search changes are coming that are unrelated to Google’s machine learning: options to “Refine this search” and “Broaden this search,” which you can choose as you search the internet. These will help you narrow down your original question or go beyond it. Google said these features will roll out in the coming months.