You may recall last week when we wrote about an absolute, unrepentant knob that was arrested for sitting in the back seat of his Tesla while it was under the partial control of Tesla’s Level 2 semi-automated Autopilot system. Said knob claimed to have not-driven over 64,374 km in this irresponsible and dangerous manner. Incredibly, there’s at least one person who thinks this is just fine, and has expressed that, unequivocally, online. It’s someone we’ve met before.

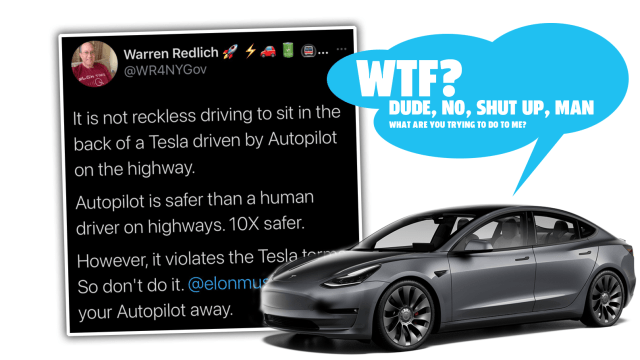

The person is Warren Redlich, the combative and delusional Elon Muskovite whose YouTube show I was on a few weeks back. Redlich tweeted the following, which you can see in this response from Alex Roy, autonomy expert and the only dude to set the Cannonball Run record in a Tesla using Autopilot:

WRONG

This IS reckless driving

You should NOT sit in back of a Tesla on Autopilot EVER

Autopilot is NOT autonomous or self-driving

YOUR supervision is required 100% of the time

You may kill yourself or others

Signed,

The guy w/the Tesla Autopilot #CannonballRun record pic.twitter.com/LweMVv4JsA

— Alex Roy (@AlexRoy144) May 17, 2021

Warren, a former DUI lawyer, comes right out and says this:

It is not reckless driving to sit in the back of a Tesla driven by Autopilot on the highway.

Just take a moment and let that sink in. Do a little thought experiment and imagine doing just that, and being pulled over by a cop. Of course, to actually pull over, you’d have to climb into the driver’s seat at highway speeds and take control of the car, being careful not to jostle the steering wheel or the stalks that control the semi-automated system, which seems, uh, not terribly safe.

Once pulled over, and talking to the cop, how would you imagine they would take your claim that it’s not reckless driving to, you know, have a car driving at highway speeds with no one at the controls?

I’m going to guess it wouldn’t go over so hot.

Warren backs up his idiotic claim by saying that

Autopilot is safer than a human driver on highways. 10X safer.

This is a claim that comes from Tesla themselves, and Warren’s getting a very crucial part wrong here: Even if we take this claim as absolutely true (I don’t, and I’ll explain why in a moment) the study is referring to Autopilot use on highways with a human driver ready to take over at any moment, the way Autopilot is supposed to be used. That very clearly means not from the back seat, like a moron.

And that study itself has all sorts of issues, which have been covered before. They include crucial omissions like not accounting for the age or condition of the cars (Teslas are overall much newer than the average car), a lack of clarity about what constitutes an “accident,” and no breakdown of what type of road and situation the accidents occurred in: freeway driving, city, rural, etc. In the 2020 study, 94 per cent of Autopilot use was on limited access freeways.

The Q1 2021 report that suggests 10X safer still doesn’t break down all of the conditions at play, so it’s hard to get a really apples-to-apples comparison.

But, really, that doesn’t even matter, since Autopilot is a Level 2 system that clearly requires a human to be alert and ready to take over, and you’re not going to fucking do that from the back seat. Oh, and the threat that Elon may not like it and take it away is hardly enough of a reason, and misses the point entirely.

It really doesn’t matter how well AP does when it’s working great; in fact, you could argue that the better it does, the more complacent a driver can get, and the less attention they’re paying, so when their attention is needed, they’re not ready, and that’s how crashes happen.

Even when Autopilot is working well, it’s fragile. So many common events can cause Autopilot to disengage. Look on Tesla forums and you can see many examples of Autopilot cameras failing and either preventing Autopilot from activating or causing it to disengage because of common things like condensation, rain, snow, ice, or even glare from the sun.

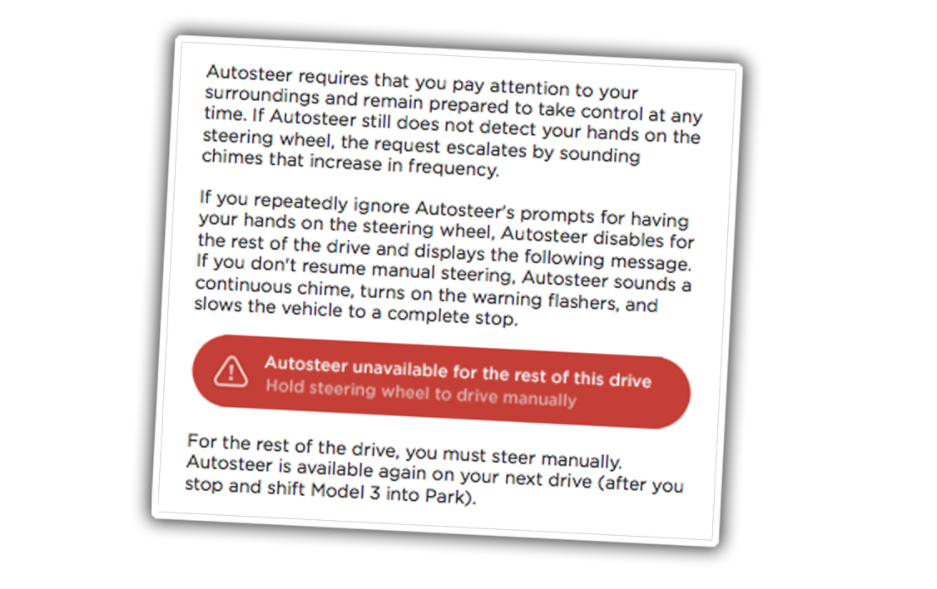

And, remember, when AP disengages, the best it can do is just stop:

On a highway, coming to a stop in an active lane of high-speed traffic is a recipe for disaster.

And that’s if it actually detects something is wrong; if it doesn’t then the result could be far worse, as it has been before.

Teslas have no sensor cleaning systems in place, and while there are multiple cameras, it’s not a redundant system — the three main cameras located in the upper middle part of the windshield all need to be unobscured for the system to work.

A YouTuber had a bird shit on one of his cameras and found that it disabled Autopilot. Again, that’s not exactly a meteor strike when it comes to chances of it happening to you. He took the time to semi-methodically see just which cameras were actually needed for Autopilot to function:

Again, it’s the three in front of the rear view mirror, a place that I’m pretty sure nobody told birds to avoid shitting upon or swarms of bugs to avoid slamming into and splattering like a Jackson Pollock.

We’ve covered a lot of ground here before, and you may be wondering why I’m even giving trolls like Warren any more attention (he’s blocked me on Twitter, sadly). The reason is that the types of ideas he’s pushing are the kinds of things that have the potential to get people hurt.

Now, there may be some arcane legalistic justification why being in the back seat while no one is behind the wheel of your car speeding at 113 km/h down the highway is not technically “reckless driving,” but that would just be an idiotic semantic game. There’s no possible way it’s anything other than a horrible idea.

It’s worth noting here that Warren isn’t an expert on the technical aspects of automated driving. Fucker doesn’t even own a Tesla. He drives a Passat. I know because he said so on that miserable YouTube show I was on.

The man who desperately wanted me to say the Tesla Model Y was the “greatest car in the world,” something a lightly intoxicated eight-year-old might demand to hear as well, doesn’t even drive a Tesla. Amazing.

For example, just recently on May 5, there was another fatal crash involving a Tesla. Now, authorities have not yet issued any definitive statements about whether or not the car was on Autopilot, but what we do know is that the driver, a father of two who was tragically killed, did make a number of videos and posts on TikTok and other social media that featured his Tesla on Autopilot, which he referred to as “self-driving” and “FSD” (for Full Self-Driving):

The most troubling posts are on what appears to be his TikTok account. The posts show him using his Tesla’s driving automation in traffic, describing it as “FSD” and “selfdriving,” and describing his state has “tired” and “bored” https://t.co/D5UvMQM1Sr https://t.co/TaywbHVq7V pic.twitter.com/Dt6AXDmPTj

— E.W. Niedermeyer (@Tweetermeyer) May 6, 2021

Again, no formal conclusion about the wreck has been issued — initially, CHP’s preliminary investigation stated AP was active, but on Friday they walked that back, saying no “final determination made as to what driving mode the Tesla was in or if it was a contributing factor to the crash.” So, it’s possible Autopilot was not engaged.

What is clear is that, much like our deluded pal Warren, the victim of the crash appeared to be under the illusion that Autopilot was far more capable than it actually is, which, again, is very bad.

I feel like I write about this a lot lately, and I was asking myself why; the conclusion I came to is that this is a situation unique in automotive history, where a technical feature of a particular carmaker’s cars has become such a source of focus and misinformation and glorification by a dedicated sub-group of people.

I can’t think of any time something of this intensity has happened. Sure, there’s always been hardcore fans, and people who believe in their cars so much it occasionally gets them into trouble, like Jeep owners that may get stuck on the trail or Hellcat owners crashing as they leave a Cars and Coffee.

But this is different. This time the feature of the car is touted as a revolutionary safety innovation, and there’s a loud group of people — which sometimes includes the company’s own CEO — that suggest misleading and overly optimistic things about the system.

Imagine if Volkswagen in the 1960s and 1970s, after doing those ads where the Beetle was shown to float, like this one…

…really leaned in to the idea of a floating Beetle and described its flat chassis design as being “Amphiready” or something like that? What if VW put out videos of Beetles driving into water with disclaimer text saying things like “it’s only not classified as a water vessel for legal reasons” and the 1970s version of TikTok (Super8 films sent out over the mail?) featured lots of short movies of people driving their Beetles into streams?

Sure, VW may have a sticker on the dash saying THIS CAR IS NOT A BOAT and DO NOT DRIVE IN WATER but people still see floating Beetles all over the place, so sometimes they try it.

And when someone drowns because the Beetle is not a boat, the amphibious VW fans say it was the driver’s fault for ignoring the stickers, and note how many people may have been saved when they accidentally drove into lakes in Beetles as opposed to other cars that would sink immediately.

If this happened, and I was an auto journo in that era, you can damn well bet I’d be writing about how irresponsible it is for VW to keep letting people think that their cars were amphibious, and would push for them to either finish the job and make the cars actually amphibious or make real efforts to prevent people from doing stupid things. Maybe I’d suggest holes in the floor. I’d prefer an actually amphibious Beetle, but if it’s not, then people should not think it is.

It’s less visually exciting, but this is essentially what’s happening right now with Tesla and the autonowashing of its semi-automated Level 2 driver assist system: a group of very dedicated fans are foolishly overselling the capabilities of the car; so much so that a grown fucking adult actually suggested that being in the back seat of a moving car with no one at the wheel is in any possible way a safe and acceptable idea.

So that’s why these trolls sometimes need to be fed, in the vain hope that in doing so, there will be a better quality of droppings. That analogy fell apart there, but let’s just leave it at this: if you think your Tesla (or any other L2 driver assist system) can drive itself, you’re wrong.

You’re wrong, and if you pretend like it can, your dumb, lazy fantasy can get people killed. So knock it off, dummy.