Shocker: Despite conservatives’ endless kvetching about the supposed liberal bias of Silicon Valley technocrats, one of the easiest ways to go viral on Facebook is spouting extreme, far-right rhetoric, according to a new study by New York University’s Cybersecurity for Democracy project.

In results released Wednesday, researchers with the project analysed various types of posts promoted as news ahead of the 2020 elections and found that “content from sources rated as far-right by independent news rating services consistently received the highest engagement per follower of any partisan group.” Far-right sources that regularly promoted hoaxes, lies, and other misinformation did even better, outperforming other far-right sources by 65%.

The researchers relied on data for 2,973 news and info sources with more than 100 followers on Facebook provided by Newsguard and Media Bias/Fact Check, two sites that rate the accuracy and partisan leanings of various outlets. (There’s reason to quibble with the ratings provided by these places, but they’re reasonable proxies for categorising a large number of sources by ideological bent.) The team then downloaded some 8.6 million public posts from those nearly 3,000 sources between Aug. 10, 2020 and Jan. 11, 2021, just shy of a week after a crowd of pro-Trump rioters tried to storm the Capitol to overturn the 2020 election results.

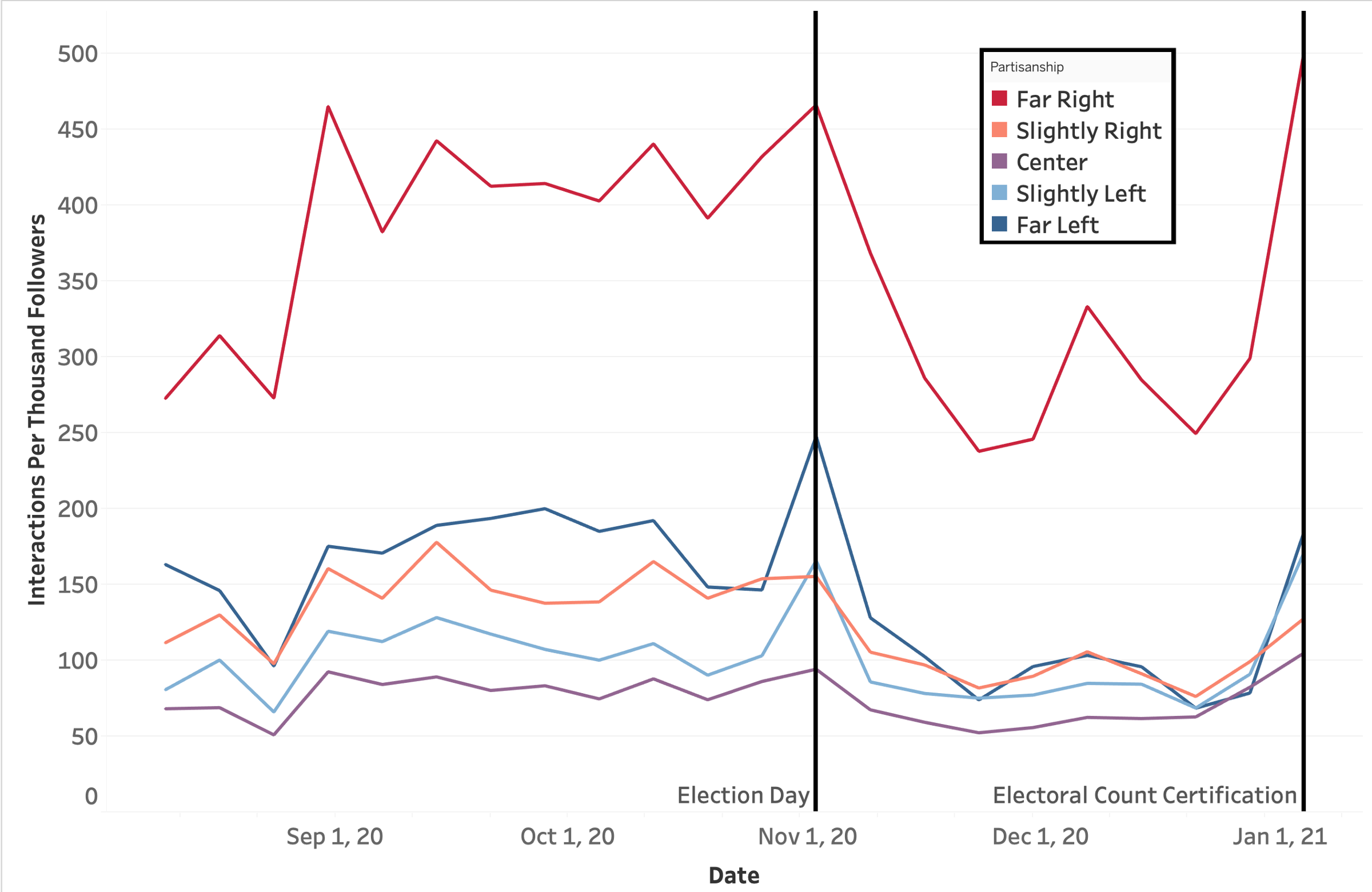

They found that sources categorised as far-right by Newsguard and Media Bias/Fact Check did very well on Facebook, followed by those classified as far-left, other more moderately partisan sources, and finally those that were “centre”-oriented. Those far-right sources tended to receive several hundred more interactions (likes, comments, shares, etc.) per 1,000 followers than other outlets. Far-right pages experienced skyrocketing engagement in early January, before the riot at the Capitol.

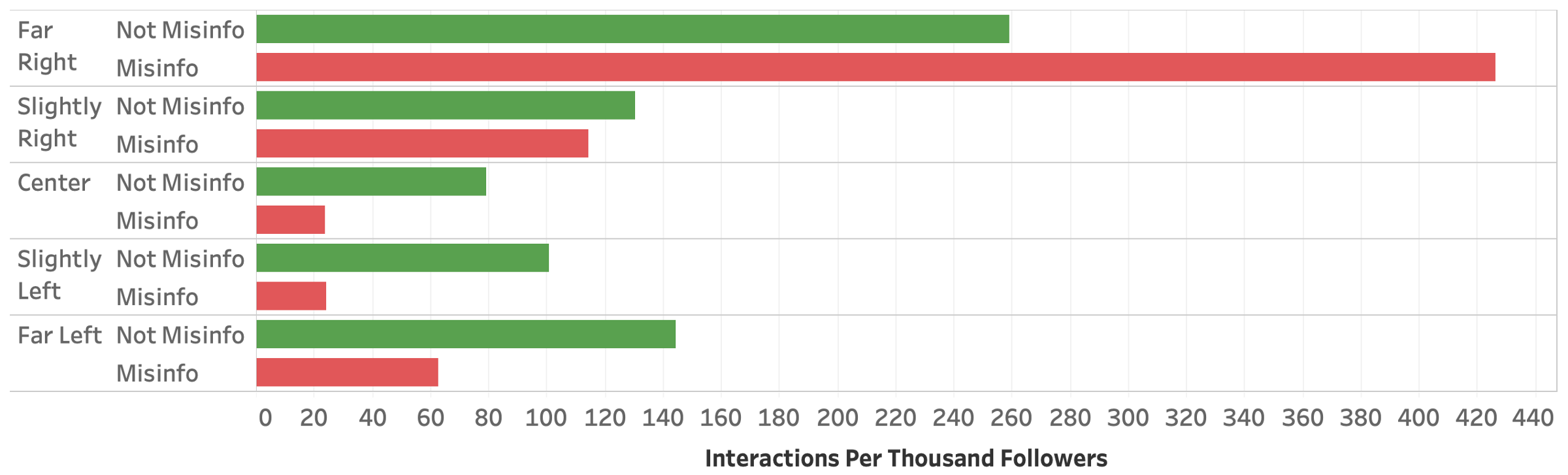

Furthermore, those far-right sources classified as regularly spreading misinformation and conspiracy theories actually did better on engagement (426 interactions per 1,000 followers a week on average) than every other type of source (including far-right pages not classified as sources of misinfo, which got 259 interactions per 1,000 followers a week on average).
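For readers who want to check the arithmetic, here is a minimal sketch of how a per-1,000-followers rate and the gap between the two far-right groups can be worked out. The function names and the example page are hypothetical and are not taken from the study; only the 426 and 259 weekly averages come from the figures quoted above. With those rounded averages the gap comes out at roughly 64 to 65 percent, in line with the 65% the researchers report; the small difference is presumably down to rounding in the published numbers.

```python
def interactions_per_thousand(interactions: int, followers: int) -> float:
    """Normalise raw interactions by audience size (per 1,000 followers)."""
    if followers <= 0:
        raise ValueError("followers must be positive")
    return interactions * 1000 / followers


def relative_lift(a: float, b: float) -> float:
    """Percentage by which rate `a` exceeds rate `b`."""
    if b <= 0:
        raise ValueError("baseline rate must be positive")
    return (a - b) / b * 100


# Weekly averages quoted in the article (interactions per 1,000 followers).
FAR_RIGHT_MISINFO = 426
FAR_RIGHT_OTHER = 259

lift = relative_lift(FAR_RIGHT_MISINFO, FAR_RIGHT_OTHER)
print(f"Misinfo far-right sources: about {lift:.1f}% more engagement per follower")

# Hypothetical page, for illustration only: 8,520 interactions in a week
# across 20,000 followers.
example_rate = interactions_per_thousand(interactions=8520, followers=20000)
print(f"Example page: {example_rate:.0f} interactions per 1,000 followers")
```

Normalising by follower count is what lets a small page and a large one be compared on the same scale, which is why the study reports engagement per follower rather than raw totals.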

That’s not even the most egregious part of it. While far-right sources were rewarded with higher engagement on Facebook when they spread misinfo or conspiracy theories, the Cybersecurity for Democracy findings show sources classified as “slightly right,” “centre,” “slightly left,” or “far left” appeared to be subject to a “misinformation penalty.” Said penalty appeared to be much heavier for sources classified as centrist or left of centre.

“What we find is that among the far right in particular, misinformation is more engaging than non-misinformation,” Laura Edelson, the lead researcher of the study and an NYU doctoral candidate, told Wired. “I think this is something that a lot of people thought might be the case, but now we can really quantify it, we can specifically identify that this is really true on the far right, but not true in the centre or on the left.”

Edelson told CNN, “My takeaway is that, one way or another, far-right misinformation sources are able to engage on Facebook with their audiences much, much more than any other category. That’s probably pretty dangerous on a system that uses engagement to determine what content to promote.”

Edelson added that because Facebook is optimised to maximise engagement, it may be more likely to juice right-wing sources by recommending that more users follow them.

The researchers wrote that their data aligns with previous research by the German Marshall Fund and the Harvard Misinformation Review showing that extreme and/or deceptive content tends to perform better on social media; the latter study also found “that the association between partisanship and misinformation is stronger among conservative users.”

The study didn’t investigate why Facebook seems to favour right-wing sources, and the researchers noted that engagement numbers don’t necessarily reflect how widely content was shared and viewed across the social network. In a statement to Wired, a Facebook spokesperson used a similar line of defence: “This report looks mostly at how people engage with content, which should not be confused with how many people actually see it on Facebook. When you look at the content that gets the most reach across Facebook, it’s not at all as partisan as this study suggests.”

Facebook has floated similar defences before — that engagement data doesn’t reflect how often a given news outlet’s content is shared sitewide or how many users actually encounter or click on it. As Recode has argued, including other data sources such as engagement on links shared privately on Facebook does indicate the top performers sitewide include more mainstream sources like CNN, the BBC, and papers like the New York Times, but doesn’t change the overall takeaway that “certain kinds of conservative content — mostly emotion-driven, deeply partisan posts” have an inherent advantage on the site.

Facebook has also tried to explain away the issue by suggesting right-wingers are just inherently more engaging, with its algorithms having little to do with it.

One anonymous executive at the company told Politico in September 2020 that “Right-wing populism is always more engaging” because it taps into “incredibly strong, primitive emotion” on topics like “nation, protection, the other, anger, fear.” The executive argued that this phenomenon “wasn’t invented 15 years ago when Mark Zuckerberg started Facebook” and was also “there in the [19]30s” (not reassuring) and “why tabloids do better than the [Financial Times].”

Prior reporting and research have repeatedly shown that while Facebook is great for creating partisan echo chambers, right-wingers are far and away the biggest beneficiaries, in some cases by design. For example, Facebook reportedly conducted internal research showing Groups were becoming vehicles for extreme and violent rhetoric, and was made aware from user reports that a feature called In Feed Recommendations, which wasn’t supposed to promote political content, was boosting right-wing pundits like Ben Shapiro. In these and other cases, a former core data scientist at the company recently told BuzzFeed, Facebook’s policy team reportedly intervened, citing the possibility of backlash from conservatives if changes were made.

Facebook is, of course, by no means the only way far-right ideas slip into the conservative mainstream — nor is the far right anything new to U.S. politics — but it is an extremely important toolset in an era where movement conservatives are extremely online and constantly searching for the latest viral outrage. While traditional conservative media like Fox News and its mutant stepchildren like Newsmax and One America News Network are powerful in their own right, Facebook offers an easy way for GOP politicians, right-wing propagandists, troll-the-libs pundits, QAnon conspiracists, and the like to repackage extreme viewpoints into memes, owns, and other shareable content for a mass audience.

“We’re looking forward to learning more about the news ecosystem on Facebook so that we can start to better understand the whys, instead of just the whats,” the Cybersecurity for Democracy team wrote in the report.