Like all social media companies, TikTok has been tested by misinformation, and sometimes it has failed. Its latest attempt to move the needle is implementing warning labels on potential misinformation that hasn’t been verified by independent fact-checkers.

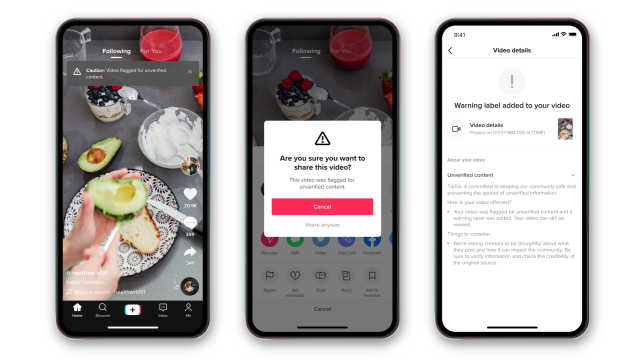

TikTok said today in a press release that it’ll be looking for content that includes unproven claims, especially those spreading during “unfolding events.” Such videos, it says, won’t reach the For You page (TikTok’s curated main feed where users scroll through random videos) and will include a warning banner reading “Caution: Video flagged for unverified content.” If a user chooses to share the video, they’ll have to click through a substantial popup asking them: “Are you sure you want to share this video?” with an “unverified content” reminder. The video’s creator also gets a notification informing them that they’ve gotten a warning label.

Seems like a lot for a massive platform that supports text, audio, and video. A spokesperson confirmed in an email to Gizmodo that the platform takes “all of those signals into account” and identifies potentially troublesome content through a mix of proactive searching, user reports, and automated detection.

To deem a claim “unverified,” TikTok’s moderation team sends videos to one of its fact-checking partners: PolitiFact, Lead Stories, or SciVerify. When a partner determines something to be unambiguously debunked, it’s removed outright, while videos with “inconclusive” determinations receive this new warning label. (These labels can’t be appealed by the content creator.)

TikTok says that the product, designed with the behavioural science-driven consulting firm Irrational Labs, reduced sharing of labelled unverified claims by 24%. Irrational Labs founder Kristen Berman explained to Gizmodo in a phone call that the key is the warning label “Are you sure you want to share this video?” Because misinformation often activates an emotionally driven “hot state,” Berman said, people tend to react to it on impulse; a warning label returns us to a “cold state” in which we’re “more logical and deliberate.”

“We hypothesise that adding a second piece of friction, making people pause, gets us to potentially reevaluate if we really want to share this information,” Berman said. “We just saw something that reminded us of the value of truth.”

TikTok’s intervention mirrors Twitter’s, though it’s difficult to say how effective these labels have been. Twitter claimed last November that warning labels led to a 29% reduction in “Quote Tweets of misleading information.” However, that figure came during a broad attempt to put a similar “cold state” roadblock on quote tweets while simultaneously limiting retweets. The shift was considered a failure, and the change was reverted last December, after about two months of testing. Facebook’s own attempts to stanch the flow of misinformation have been no less bumbling.

It’s impossible to know the scope of misinformation on TikTok, since the company doesn’t provide a research tool like Facebook’s CrowdTangle. But it has worked on making conspiracies and hate speech harder to find for U.S.-based users. This isn’t to say that seditionist militia content isn’t easily searchable (it is) or that QAnoners haven’t played whack-a-mole with the tags (they have) or that TikTok hasn’t engaged in extraordinary levels of censorship (that too). This is just to say that scrubbing QAnon and extremist group hashtags from search results long before the Capitol insurrection was probably a good thing, and these roadblocks can’t hurt. Hopefully. It’s not like the bar for a social media company protecting its users is especially high.