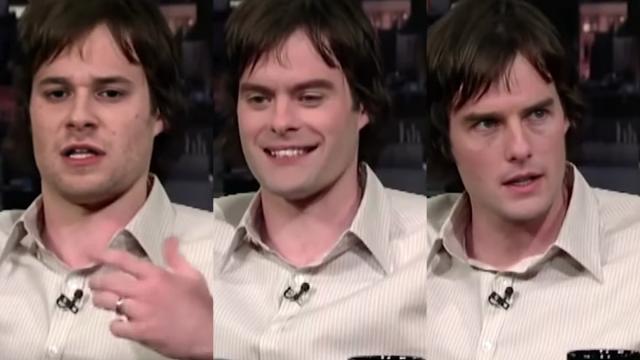

Videos of former Saturday Night Live comedian Bill Hader discreetly morphing into Tom Cruise and Seth Rogen during an interview went viral back in 2019. While it looked like another tale of internet magic, it pointed to something darker stirring in the internet’s depths: deepfakes.

This story was originally published August 19, 2019.

The video exists thanks to deepfake technology. While the realism it can achieve is still in its infancy, it’s fast becoming one of the most terrifying developments in technology.

To better understand how it works and what it means for the future, we peeked under the covers.


What even is a deepfake?

To put it simply, the term deepfake is a portmanteau of “deep learning” and “fake” and refers to a computer program that can superimpose a new face onto someone else’s within a video. The difference from Snapchat’s face-swapping feature, which uses similar technology, is that deepfakes are starting to look far more realistic.

So naturally, people are becoming concerned with the implications.

Which brings us to the Bill Hader video. It was created and uploaded by a YouTuber named Ctrl Shift Face and is one of the most realistic deepfakes we’ve ever seen.

The user’s been uploading equally terrifying deepfake videos for months but it’s this latest morph that’s got people talking.

But how are they making it look so realistic?

The neural network

Deepfake technology uses generative adversarial networks (GANs), which learn from a data set of images to generate a realistic new image or video.

A GAN is composed of two competing networks: the ‘generator’, which creates the deepfake, and the ‘discriminator’, which tries to detect the forgery. They tussle until the discriminator can no longer recognise the output as a fake.
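
To make that tussle concrete, here is a minimal sketch in plain Python. It is a toy under heavy assumptions, not how Ctrl Shift Face or any real deepfake tool works: the “images” are single numbers, the generator is just a line (`a * z + b`), and the discriminator is a single sigmoid unit. Every name in it (`train_toy_gan`, `real_mean`, `lr` and so on) is made up for illustration.

```python
import math
import random


def sigmoid(t):
    # Clamp the input so math.exp never overflows.
    t = max(-30.0, min(30.0, t))
    return 1.0 / (1.0 + math.exp(-t))


def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, seed=0):
    """Train a one-dimensional toy GAN and return (a, b, w, c).

    Generator:      fake = a * z + b          (z is random noise)
    Discriminator:  D(x) = sigmoid(w * x + c) (its guess that x is real)
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters (assumed starting values)
    w, c = 0.1, 0.0  # discriminator parameters (assumed starting values)

    for _ in range(steps):
        real = rng.gauss(real_mean, 0.5)  # a sample of "real" data
        z = rng.gauss(0.0, 1.0)           # noise fed to the generator
        fake = a * z + b                  # the generator's forgery

        # Discriminator step: push D(real) towards 1 and D(fake) towards 0
        # by gradient ascent on log D(real) + log(1 - D(fake)).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: adjust a and b so the updated discriminator is
        # more likely to call the forgery real (ascent on log D(fake)).
        d_fake = sigmoid(w * fake + c)
        grad_fake = (1.0 - d_fake) * w
        a += lr * grad_fake * z
        b += lr * grad_fake

    return a, b, w, c


if __name__ == "__main__":
    a, b, w, c = train_toy_gan()
    print("generator offset b:", b)
    print("D(sample near the real mean):", sigmoid(w * 4.0 + c))
```

With a fixed `seed` the run is repeatable. Real deepfake models swap these four numbers for deep networks with millions of parameters, trained on thousands of face images, but the back-and-forth between the two updates is the same idea.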

There’s a reason why celebrities are so often the focus of deepfake videos. Their facial data is so public and so plentiful across visual media that there’s enough for the neural network to learn from.

As an example, Ctrl Shift Face uploaded a video to demonstrate what his machine learning dataset of Tom Cruise looks like.

It’s filled with screenshots of Tom Cruise from the 50 or so movies he’s been in, capturing each angle of his face with multiple expressions.

As terrifying as this is, it does demonstrate why those of us who aren’t famous can rest easy for now. A convincing deepfake needs so much visual data that, given the current state of the technology, it’s unlikely to happen to you.

As Martin Giles of the MIT Technology Review pointed out in 2018, GANs aren’t the bad guys here. The deep learning they’re built on has made “self-driving cars and the conversational technology that powers Alexa, Siri, and other virtual assistants” possible. Deepfakes are an unfortunate offshoot of this.

“Supply a deep-learning system with enough images and it learns to, say, recognise a pedestrian who’s about to cross a road,” Giles wrote.

“But while deep-learning AIs can learn to recognise things, they have not been good at creating them.”

How have people used it so far?

In 2018, the internet’s deepfakers couldn’t stop themselves from injecting Nicolas Cage’s expressive face into a number of movies to chilling effect.

At the time it was relatively easy to identify them as fakes because they didn’t look natural. It was like a mask of Nicolas Cage had been stuck to a moving face.

But as the recent Bill Hader video demonstrated, the tech is advancing at an alarming rate. Not only that, apps and programs that do the work for people are becoming increasingly widespread and accessible.

But while the Nicolas Cage fiasco might give you night terrors for years, deepfake technology has also been used in real-life criminal offences.

The most thriving community of deepfake creators is dedicated to fake pornography. Once hosted on a subreddit before it was banned from the site in 2018, deepfake porn is created by applying celebrity faces to actual pornography videos. Wonder Woman actress Gal Gadot, Natalie Portman, Katy Perry and Taylor Swift have all been targeted by these deepfakers.

Deepfake technology has also been used to doctor videos that attribute false quotes to well-known politicians. BuzzFeed uploaded a supposed video of Barack Obama talking about deepfakes in 2018 but by the end of the video, it’s revealed to be a doctored video with voicing by Jordan Peele. It looks strange but if you’re unaware of deepfakes, you’d probably believe it was really him.

How to spot a deepfake

Spotting a deepfake is sometimes easy because it looks like a stationary face pasted onto a moving body.

But with more sophisticated offerings, you need to pay close attention. Telltale signs include an unnatural frequency of eye blinking, skin tone changes around the edge of the face (for example, where facial data might have been cut) and shadows that don’t look natural.
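
As a rough illustration of the blinking check, the sketch below counts blinks in a clip and flags an unusually low blink rate. It assumes some other tool has already produced a per-frame “eye openness” score between 0 (shut) and 1 (wide open). That input, the function names and both thresholds are hypothetical choices for this example, not values taken from any real detector.

```python
def count_blinks(openness, closed_below=0.2):
    """Count how many times the eye goes from open to closed."""
    blinks = 0
    eye_closed = False
    for value in openness:
        if value < closed_below and not eye_closed:
            blinks += 1          # a new blink starts on this frame
            eye_closed = True
        elif value >= closed_below:
            eye_closed = False   # the eye has reopened
    return blinks


def blinks_per_minute(openness, fps):
    """Convert the blink count into a rate for a clip shot at `fps`."""
    seconds = len(openness) / fps
    if seconds <= 0:
        return 0.0
    return count_blinks(openness) * 60.0 / seconds


def looks_suspicious(openness, fps, min_rate=5.0):
    """Flag clips whose blink rate falls below an assumed threshold."""
    return blinks_per_minute(openness, fps) < min_rate


if __name__ == "__main__":
    # Eight frames at four frames per second, containing two blinks.
    clip = [1.0, 1.0, 0.1, 0.1, 1.0, 1.0, 0.05, 1.0]
    print(blinks_per_minute(clip, fps=4.0))
    print(looks_suspicious(clip, fps=4.0))
```

A low blink rate on its own proves nothing, so a check like this would only ever be one signal alongside the skin tone and shadow cues above.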


What does the future hold?

If deepfake technology continues to get better and more believable, video content will become very difficult to navigate. It may become harder for audiences to tell whether a video has been doctored or not (like the Jim Acosta and Trump example) and whether you can believe a video quote from a politician.

This also presents a whole new challenge for the media, as cries of ‘fake news’ become more difficult to discount. Trust in press conferences could drop as doctored videos fill the internet, and video leaks like former PM Kevin Rudd’s swearing spat might not be as easily believed.

In terms of video-based abuse and the potential for resulting blackmail, these issues are going to become increasingly complicated as deepfakes become more realistic.

But while deepfake technology has mostly been used for non-consensual fake pornography, it’s possible it could also be used for good. Some researchers believe the AI technology used to make deepfakes, GANs, might be able to accurately spot tumours and other physical health issues.

The technology is still a while off, however, and doesn’t seem to be moving as quickly as negative deepfake mimicry is.