Searching for information online sometimes takes creativity: depending on how you word a query, Google can bring back some wonky results. That’s why Google announced today that it’s improved search to better understand natural language for “queries [it] can’t anticipate.”

Part of the issue is that we imperfect humans don’t always know how to spell what we’re looking for, or even word it in a way that makes sense to computers. If you phrase a query conversationally, you run the risk of getting garbage results back. That’s why it’s way more common to use what Google calls “keyword-ese”, where you’re just typing related words together. Sort of the equivalent of baby talk, for a computer algorithm.

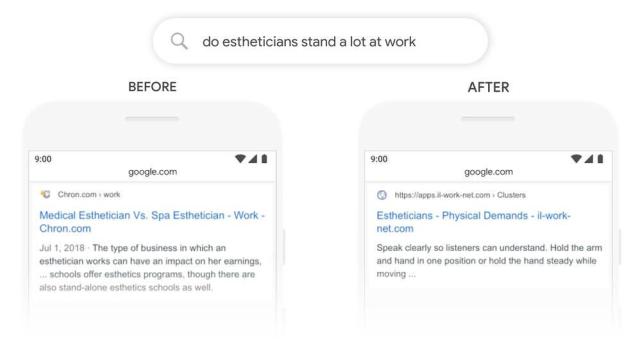

Somewhat related news results, versus the thing the person was actually searching for. (Image: Google)

To combat this, Google says it’s using BERT, an open-sourced Google technique.

Google’s seen success with its natural language processing in recent years with best at understanding and answering conversational commands and queries. It’s, of course, not perfect, but as someone with all three in my apartment, I don’t have to think quite as hard about the exact phrasing I use when speaking to my Google Nest Hub.

According to Google, using BERT will help improve one in ten English-language searches in the U.S. That’s not to say that BERT won’t roll out to other languages sometime down the line. Google says it’s applying improvements from English models to other languages and is currently seeing significant improvements in Korean, Hindi, and Portuguese.