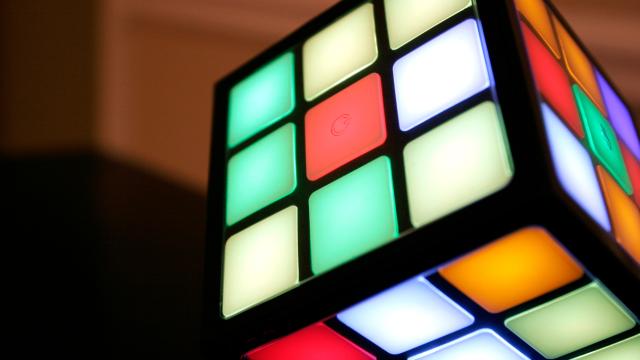

Meet DeepCube, an artificially intelligent system that’s as good at solving the Rubik’s Cube as the best human solvers. Incredibly, the system learned to dominate the classic 3D puzzle in just 44 hours and without any human intervention.

Image: AP

“A generally intelligent agent must be able to teach itself how to solve problems in complex domains with minimal human supervision,” write the authors of the new paper, published online at the arXiv preprint server. Indeed, if we’re ever going to achieve a general, human-like machine intelligence, we’ll have to develop systems that can learn and then apply what they have learned to real-world problems.

And we’re getting there. Recent breakthroughs in machine learning have produced systems that, without any prior knowledge, have learned to master games such as chess and Go.

But these approaches haven’t translated very well to the Rubik’s Cube. The problem is that reinforcement learning – the strategy used to teach machines to play chess and Go – doesn’t lend itself well to complex 3D puzzles.

Unlike chess and Go – games in which it’s relatively easy for a system to determine whether a move was “good” or “bad” – it isn’t immediately clear to an AI that’s trying to solve the Rubik’s Cube whether a particular move has improved the overall state of the jumbled puzzle. When an artificially intelligent system can’t tell whether a move is a positive step towards its overall goal, it can’t be rewarded, and if it can’t be rewarded, reinforcement learning doesn’t work.

On the surface, the Rubik’s Cube may seem simple, but it offers a staggering number of possibilities. A 3x3x3 cube has a total “state space” of 43,252,003,274,489,856,000 combinations (that’s 43 quintillion), but only one of those states matters – that magic moment when each of the cube’s six sides shows a single colour.
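For the curious, the 43 quintillion figure can be reproduced with a few lines of arithmetic. The sketch below uses the standard counting argument for the 3x3x3 cube, which is an addition here rather than something taken from the paper, and the function name `cube_state_count` is purely illustrative.

```python
from math import factorial


def cube_state_count() -> int:
    """Count the reachable positions of a 3x3x3 Rubik's Cube."""
    # 8 corner pieces can be arranged in 8! ways. Each corner has 3 possible
    # twists, but the twist of the last corner is fixed by the other seven.
    corner_arrangements = factorial(8) * 3**7
    # 12 edge pieces can be arranged in 12! ways. Each edge has 2 possible
    # flips, but the flip of the last edge is fixed by the other eleven.
    edge_arrangements = factorial(12) * 2**11
    # Legal moves cannot swap just two pieces, so only half of the combined
    # corner and edge arrangements can actually be reached.
    return corner_arrangements * edge_arrangements // 2


print(f"{cube_state_count():,}")  # prints 43,252,003,274,489,856,000
```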

Many different strategies, or algorithms, exist for solving the cube. It took its inventor, Ernő Rubik, an entire month to devise the first of these algorithms. A few years ago, it was shown that any scramble of the Rubik’s Cube, however jumbled, can be solved in 26 moves or fewer when each quarter turn counts as one move.

We’ve obviously acquired a lot of information about the Rubik’s Cube and how to solve it since the highly addictive puzzle first appeared in 1974, but the real trick in artificial intelligence research is to get machines to solve problems without the benefit of this historical knowledge.

Reinforcement learning can help, but as noted, this strategy doesn’t work very well for the Rubik’s Cube. To overcome this limitation, a research team from the University of California, Irvine, developed a new AI technique known as Autodidactic Iteration.

“In order to solve the Rubik’s Cube using reinforcement learning, the algorithm will learn a policy,” write the researchers in their study. “The policy determines which move to take in any given state.”
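To make the idea of a policy concrete, here is a minimal sketch on a toy puzzle: four numbered tiles in a row that can be rotated left, rotated right, or have their first two tiles swapped. Everything in it is an assumption made for illustration. The puzzle, the move names and the hand-written `score` function are not from the paper, and `score` merely stands in for the estimate DeepCube learns with a neural network.

```python
from typing import Callable, Dict, Tuple

State = Tuple[int, ...]

# Hypothetical toy puzzle: the goal is to put the tiles in the order (0, 1, 2, 3).
MOVES: Dict[str, Callable[[State], State]] = {
    "L": lambda s: s[1:] + s[:1],          # rotate every tile one place left
    "R": lambda s: s[-1:] + s[:-1],        # rotate every tile one place right
    "S": lambda s: (s[1], s[0]) + s[2:],   # swap the first two tiles
}


def score(state: State) -> int:
    """Stand-in for a learned estimate: how many tiles sit in their home slot."""
    return sum(1 for slot, tile in enumerate(state) if slot == tile)


def policy(state: State) -> str:
    """Return the name of the move whose resulting state scores highest."""
    return max(MOVES, key=lambda name: score(MOVES[name](state)))


print(policy((1, 2, 3, 0)))  # prints R
print(policy((1, 0, 2, 3)))  # prints S
```

A real policy for the cube would choose among the cube's face turns rather than three toy moves, and its scores would come from training rather than from a rule written by hand.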

To formulate this “policy”, DeepCube creates its own internalised system of rewards. With no outside help, and with the only input being changes to the cube itself, the system learns to evaluate the strength of its moves.

But it does so in a rather ingenious, although labour-intensive, way. Rather than only searching forward from a jumbled cube, the AI starts from the completed cube and works its way backward through scrambled positions, so each position it studies has a known route back to the solution. This allows the system to evaluate the overall strength of a proposed move.
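The backward-working idea can be sketched on a toy puzzle small enough to fit in a lookup table. This is a loose illustration under stated assumptions, not the authors' implementation: the four-tile puzzle and its moves are invented, a dictionary replaces the neural network, and the reward of +1 for reaching the solved state and -1 otherwise is an assumed scheme chosen for the example.

```python
import random

SOLVED = (0, 1, 2, 3)

# Hypothetical toy puzzle standing in for the cube.
MOVES = {
    "L": lambda s: s[1:] + s[:1],          # rotate every tile one place left
    "R": lambda s: s[-1:] + s[:-1],        # rotate every tile one place right
    "S": lambda s: (s[1], s[0]) + s[2:],   # swap the first two tiles
}


def reward(state):
    """Assumed reward: +1 for the solved state, -1 for anything else."""
    return 1.0 if state == SOLVED else -1.0


def scrambled_states(n_scrambles, depth, rng):
    """Start from the solved puzzle and record each state along random scrambles."""
    states = []
    for _ in range(n_scrambles):
        state = SOLVED
        for _ in range(depth):
            state = MOVES[rng.choice("LRS")](state)
            states.append(state)
    return states


def autodidactic_iteration(iterations=50, seed=0):
    """Tabular sketch: learn a value and a best move for each scrambled state."""
    rng = random.Random(seed)
    value, policy = {}, {}  # dictionaries stand in for the neural network
    for _ in range(iterations):
        for state in scrambled_states(10, 30, rng):
            if state == SOLVED:
                continue  # nothing to learn once the puzzle is solved
            best_move, best_target = None, float("-inf")
            for name, move in MOVES.items():
                child = move(state)
                # The solved state ends the episode, so it has no future value.
                future = 0.0 if child == SOLVED else value.get(child, 0.0)
                target = reward(child) + future
                if target > best_target:
                    best_move, best_target = name, target
            value[state] = best_target   # a network would be trained towards this
            policy[state] = best_move
    return value, policy


value, policy = autodidactic_iteration()
print(policy[(1, 0, 2, 3)])  # the move learned for a one-swap scramble
```

Because every training state is produced by scrambling the solved puzzle, states close to the solution are seen often and get reliable values first, and those values then spread outward to more heavily scrambled states.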

Once it has acquired enough data about its current position, it solves the cube with a tree search (Monte Carlo tree search, according to the paper), in which it examines the possible moves to determine which one is best. It isn’t the most elegant system in the world, but it works.
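As a rough picture of how a search can lean on such estimates, the sketch below solves the same kind of toy tile puzzle with a plain best-first search, always expanding the state that looks closest to solved. To be clear about what is swapped in: this is a simpler technique than the tree search DeepCube uses, and the puzzle, the moves and the hand-written `estimated_distance` function are assumptions standing in for the cube and for the trained network.

```python
import heapq
from itertools import count

SOLVED = (0, 1, 2, 3)

# Hypothetical toy puzzle standing in for the cube.
MOVES = {
    "L": lambda s: s[1:] + s[:1],          # rotate every tile one place left
    "R": lambda s: s[-1:] + s[:-1],        # rotate every tile one place right
    "S": lambda s: (s[1], s[0]) + s[2:],   # swap the first two tiles
}


def estimated_distance(state):
    """Stand-in for a learned estimate: the number of tiles out of place."""
    return sum(1 for slot, tile in enumerate(state) if slot != tile)


def best_first_solve(start, max_expansions=10_000):
    """Expand the most promising state first; return a list of move names."""
    tie_breaker = count()  # keeps heap entries ordered when estimates are equal
    frontier = [(estimated_distance(start), next(tie_breaker), start, [])]
    seen = {start}
    while frontier and max_expansions > 0:
        _, _, state, path = heapq.heappop(frontier)
        if state == SOLVED:
            return path
        max_expansions -= 1
        for name, move in MOVES.items():
            child = move(state)
            if child not in seen:
                seen.add(child)
                entry = (estimated_distance(child), next(tie_breaker),
                         child, path + [name])
                heapq.heappush(frontier, entry)
    return None  # gave up before finding a solution


def apply_moves(state, names):
    """Replay a list of move names on a state."""
    for name in names:
        state = MOVES[name](state)
    return state


solution = best_first_solve((2, 0, 3, 1))
print(solution, apply_moves((2, 0, 3, 1), solution) == SOLVED)
```

The search is only as good as its estimates: a better estimate means fewer wasted expansions and, usually, a shorter solution.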

The researchers, led by Stephen McAleer, Forest Agostinelli and Alexander Shmakov, trained DeepCube for two million iterations across eight billion cubes (including some repeats). Training took 44 hours on a 32-core Intel Xeon E5-2620 server with three NVIDIA Titan XP GPUs.

The system discovered “a notable amount of Rubik’s Cube knowledge during its training process,” write the researchers, including a strategy used by advanced speedcubers, namely a technique in which the corner and edge cubelets are matched together before they’re placed into their correct location.

“Our algorithm is able to solve 100 per cent of randomly scrambled cubes while achieving a median solve length of 30 moves – less than or equal to solvers that employ human domain knowledge,” write the authors. There’s room for improvement, as DeepCube experienced trouble with a small subset of cubes that resulted in some solutions taking longer than expected.

Looking ahead, the researchers would like to test the new Autodidactic Iteration technique on harder cubes, such as the 4x4x4, which has 16 squares on each side. More practically, this research could be used to solve real-world problems, such as predicting the 3D shape of proteins. Like the Rubik’s Cube, protein folding is a combinatorial optimisation problem. But instead of figuring out the next place to move a cubelet, the system could figure out the proper sequence of amino acids along a 3D lattice.

Solving puzzles is all well and good, but the ultimate goal is to have AI tackle some of the world’s most pressing problems, such as drug discovery, DNA analysis, and building robots that can function in a human world.

[arXiv via MIT Technology Review]