On Tuesday at tech festival SXSW, Susan Wojcicki, CEO of streaming site and Google subsidiary YouTube, proposed one solution to the conspiracy screeds and “false flag” hoax videos that are slowly but surely taking over the site by gaming its algorithms.

No, it’s not really a critical self-examination of whether YouTube’s business model is all about pushing the most controversial and thus “engaging” content to undeserved prominence, as has repeatedly happened with promoted videos smearing mass shooting survivors. Instead, Wojcicki explained it may just insert a link to Wikipedia here and there under said conspiracy content, as shown in the tweet below featuring her example of a “Moon Landing Conspiracies” video:

New from @YouTube – CEO Susan Wojciki announces a feature to fight conspiracy theories w/ related links to news, Wikipedia #sxsw pic.twitter.com/sLtjdQNqbu

— Maureen Fitzgerald (@movandy) March 13, 2018

There are numerous, very obvious problems with the example floated by Wojcicki. For one, the module appearing under the YouTube video in question just adds the words “Moon Landing,” the definition of a moon landing, and passes on that NASA’s Apollo 11 mission was the first such landing… before cutting off mid-sentence with a link to Wikipedia. Seriously, this is all it says:

Moon Landing

A Moon landing is the arrival of a spacecraft on the surface of the Moon. The United States’ Apollo 11 was the first manned mission to land… Wikipedia

At no point does the module challenge any specific claim that might have been made in the video, or even warn that claims made in it should be judged with an abundance of scepticism — as Google already does in its (admittedly imperfect) sidebars that appear alongside searches for news sites.

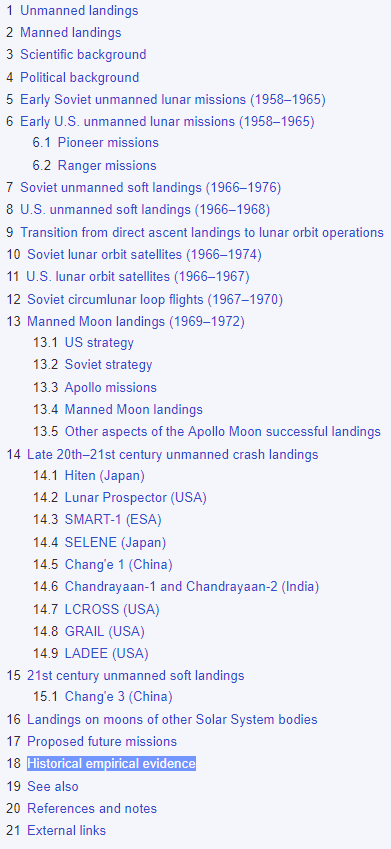

Wikipedia may indeed be relatively reliable as a general reference tool. But as the Guardian noted, the site explicitly warns that it sometimes has trouble keeping a handle on fast-breaking stories, and directing a slew of conspiracy theorist video enthusiasts towards a web encyclopedia anyone can edit seems like a dubious strategy at best. (BuzzFeed’s Tom Gara referred to the module as a “good way to encourage mass defacing Wikipedia articles at scale.”) Also, Wikipedia’s primary purpose is not really debunking things; the article touted by Wojcicki only mentions lunar landing conspiracy theories dozens of sections and subsections down, and mostly in passing.

While YouTube says that Wikipedia could just be one of several third-party sources for this module, it’s not exactly a reassuring intro. It feels more like hand-waving to mask YouTube’s consistent unwillingness to engage with the trash content it routinely circulates and profits from on anything more than the minimal level necessary to avoid lawsuits or bad PR.

The streaming site in fact appeared to be doubling down on the idea that platforms shouldn’t be in the business of regulating content until it affects their bottom line. As BuzzFeed’s Ryan Mac noted on Twitter, Wojcicki suggested that while YouTube can determine what content is hateful, it shouldn’t be debating the nature of truth.

Wojcicki then engages on flat earth truthers.

Me paraphrasing what she said: Most people think the earth is round, some people think it’s flat. That’s the way it is. Why should we decide what people think?

— Ryan Mac (@RMac18) March 13, 2018

Earlier, @nxthompson asked how do you decide who is a credible, trustworthy source on YouTube. @SusanWojcicki refused to say what factors go into deciding. She suggested that some of the things they look at are “number of journalistic awards” and “amount of traffic” they have.

— Ryan Mac (@RMac18) March 13, 2018

.@SusanWojcicki’s statement reminds me of when Wall Street said it couldn’t explain how it packaged subprime mortgages because it was too complex… but trust us, it’ll be fine!

Nothing is ever too complicated to explain. Complication generally amounts to obfuscation… https://t.co/dLUTLQoPBF

— Katie Benner (@ktbenner) March 13, 2018

Obviously there is room for nuance — but this response seems like a retreat from the reality that the inevitable intersection of garbage lies and hateful harassment is exactly what keeps on getting YouTube in trouble.

A YouTube spokesperson offered few clarifications or insights into the thinking behind the demonstration in an email to Gizmodo:

We’re always exploring new ways to battle misinformation on YouTube. At SXSW, we announced plans to show additional information cues, including a text box linking to third-party sources around widely accepted events, like the moon landing. These features will be rolling out in the coming months, but beyond that we don’t have any additional information to share at this time.

(Follow-up questions regarding how exactly sidebar links to general reference Wikipedia articles would slow down the proliferation of conspiracy content on YouTube, or whether partnering with organisations based around fact-checking might be more effective, did not receive a specific response.)

One other, arguably better solution that YouTube has explored and acted on is hiring thousands of new moderators, but it’s clear that even with the additional staff the site is having a hard time controlling the sheer volume of user-generated content that rolls in. Staff have also stumbled back and forth over whether to ban people like InfoWars founder Alex Jones or his subordinate Jerome Corsi, adding to the impression that the site always ends up noncommittal about any sweeping changes.