Shutterstock/Sam Woolley

Privacy experts have sounded alarms about face recognition for years. Originally, the concern was that walking through an airport or a public park meant being scanned and matched against secret criminal databases. Those fears have since evolved, and now face recognition is a bedrock of predictive technology. Soon, your chances of becoming a terrorist or developing a rare disease could be determined from your face. And our oncoming new-normal of ever-present face recognition technology may also be used to predict sexuality (accurately or otherwise), prompting concerns for queer people in countries where anonymity is key to survival.

Stanford researchers claim they have used face recognition software trained on dating profile pictures to accurately identify someone’s sexuality. Researchers Michal Kosinski and Yilun Wang trained software using 35,326 pictures of 14,776 people. Participants (who weren’t contacted for the study, but have public profiles) were placed into four equal, self-reported categories: gay women, gay men, straight women, and straight men.

The Economist summarises the results:

When shown one photo each of a gay and straight man, both chosen at random, the model distinguished between them correctly 81 per cent of the time. When shown five photos of each man, it attributed sexuality correctly 91 per cent of the time. The model performed worse with women, telling gay and straight apart with 71 per cent accuracy after looking at one photo, and 83 per cent accuracy after five. In both cases the level of performance far outstrips human ability to make this distinction. Using the same images, people could tell gay from straight 61 per cent of the time for men, and 54 per cent of the time for women. This aligns with research which suggests humans can determine sexuality from faces at only just better than chance.

Accuracy of 91 per cent in guessing sexuality is startling, even if it’s attainable only in ideal conditions. The larger takeaway, however, is that the software reliably outperformed humans by as much as 30 percentage points, meaning that the AI’s rote pattern-based analysis seems to be much sharper than whatever alchemy humans use to intuit people’s sexuality from a glance.
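The gap between software and people is a difference in percentage points, which is not the same thing as a relative improvement. The short Python sketch below works through both, using only the figures quoted from The Economist. The names `MODEL`, `HUMAN`, `point_gap` and `relative_gain` are made up for illustration, and comparing the single human figure against both the one-photo and five-photo model scores is an assumption, since the summary does not say how many photos people were shown.

```python
# Figures as quoted in The Economist's summary (per cent judged correctly).
MODEL = {("men", 1): 81, ("men", 5): 91, ("women", 1): 71, ("women", 5): 83}
HUMAN = {"men": 61, "women": 54}


def point_gap(model_pct, human_pct):
    """Absolute difference between the two scores, in percentage points."""
    return model_pct - human_pct


def relative_gain(model_pct, human_pct):
    """Improvement relative to the human score, in per cent."""
    return (model_pct - human_pct) / human_pct * 100


for (group, photos), model_pct in MODEL.items():
    human_pct = HUMAN[group]
    print(
        f"{group}, {photos} photo(s): "
        f"+{point_gap(model_pct, human_pct)} points, "
        f"+{relative_gain(model_pct, human_pct):.0f}% relative"
    )
```

The largest gap, 30 points, comes from the five-photo result for men. The smallest is the one-photo result for women.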

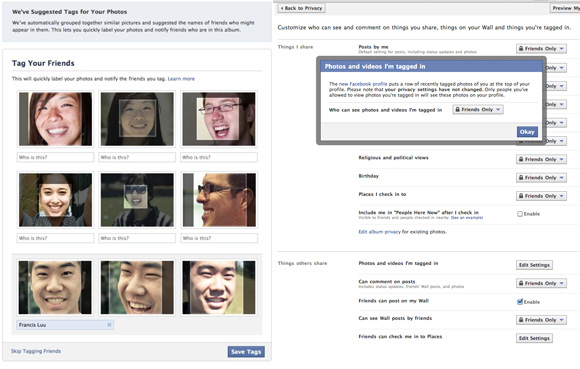

Dr Kosinski makes clear to The Economist that he didn’t create any new software tools for this, but simply put together preexisting tech. In fact, each of these concepts has precedent on its own; they just hadn’t been combined. Facebook already uses facial recognition when it suggests tagging friends in photos, and the social network also analyses user behaviour and uses search history to determine which suggestions and ads pop up.

This suggests the study could be replicated, a matter not just of privacy but of life and death in places where homosexuality is outlawed. In Chechnya, for example, gay men are imprisoned and forced to reveal names of other gay men. Dr Kosinski acknowledges that even a proof of concept can be dangerous, but argues it’s important to show what AI and face recognition are capable of. It’s essential to be aware of what those in power could do with face recognition technology.

Facebook’s ‘Tag Your Friends’ feature uses faceprints

Broadly, face recognition works by measuring people’s faces and assigning them a “faceprint.” In this particular study, the software was shown the faceprints of men and women who identified themselves as gay or straight. It found patterns and then, when shown pictures it had never seen before, it searched for those patterns and categorised people accordingly.
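As a rough illustration of that train-then-categorise loop, here is a minimal Python sketch. It is not the researchers’ method: it swaps in a simple nearest-centroid rule, treats a “faceprint” as a pair of made-up numbers, and uses neutral placeholder labels (`group_a`, `group_b`). Every name and value in it is hypothetical.

```python
from math import dist  # Euclidean distance, available in Python 3.8+


def centroid(vectors):
    """Average a list of equal-length vectors, component by component."""
    n = len(vectors)
    return tuple(sum(v[i] for v in vectors) / n for i in range(len(vectors[0])))


def train(labelled):
    """Learn one 'pattern' (the average faceprint) per label."""
    return {label: centroid(vectors) for label, vectors in labelled.items()}


def classify(model, faceprint):
    """Assign an unseen faceprint to the label with the closest pattern."""
    return min(model, key=lambda label: dist(model[label], faceprint))


# Hypothetical two-number faceprints; real systems use far more measurements.
training = {
    "group_a": [(0.1, 0.2), (0.2, 0.1), (0.0, 0.3)],
    "group_b": [(0.9, 0.8), (0.8, 1.0), (1.0, 0.9)],
}
model = train(training)

print(classify(model, (0.15, 0.25)))
print(classify(model, (0.85, 0.70)))
```

The point of the sketch is only the shape of the process: patterns are learned from labelled examples, then applied to pictures the software has never seen.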

Of course, as these are dating profiles, all the queer people are presumably open about their sexuality. And that makes a difference in their appearance, particularly as appearing more masculine or feminine affects their dating chances. And as these categories are self-reported, it’s also entirely possible not all of the people marked as “straight” are exclusively heterosexual.

If a nation hostile to queer people wanted to re-create similar technology, it would first have to train AI on localised photos of queer people. Those photos would be difficult, though not impossible, to find in a country that outlaws homosexuality and where few people are “out” enough to have their photos scanned.

This particular study was also conducted in the US with a limited sample size; the accuracy rate could differ significantly with a different set of people located elsewhere on the planet.

There are technical limitations as well. A follow-up test was recalibrated to more accurately reflect the ratio of gay to straight men. The software was given photos of 1,000 men, fewer than 100 of whom identified as gay on the site. The software’s accuracy in this case dropped: only 47 of the 100 men it selected as gay actually identified that way.
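The arithmetic behind that drop can be sketched in a few lines of Python. The variable names are made up for illustration, and because the article says only “fewer than 100” of the 1,000 men identified as gay, the sketch uses 100 as an upper bound rather than an exact count.

```python
def precision(correct, selected):
    """Share of the selected group that was labelled correctly."""
    return correct / selected


pool_size = 1000          # men whose photos were shown to the software
selected_as_gay = 100     # men the software picked out
correct_picks = 47        # of those, how many identified as gay
max_gay_in_pool = 100     # upper bound only: the article says "fewer than 100"

prec = precision(correct_picks, selected_as_gay)

# Share you would expect to get right by picking 100 men at random,
# at most (the true share is lower because the real count is below 100).
chance_upper_bound = max_gay_in_pool / pool_size

# How many times better than random picking the software did, at least.
lift_lower_bound = prec / chance_upper_bound

print(f"precision: {prec:.0%}")
print(f"random picking: under {chance_upper_bound:.0%}")
print(f"improvement over chance: more than {lift_lower_bound:.1f}x")
```

In other words, the software was wrong about more than half of the men it flagged, even though it still did several times better than picking at random.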

Even as face recognition becomes standard in public places, we’re still discovering ways it can be misused against vulnerable members of society. For queer people specifically, this dawning surveillance dystopia is all too familiar.