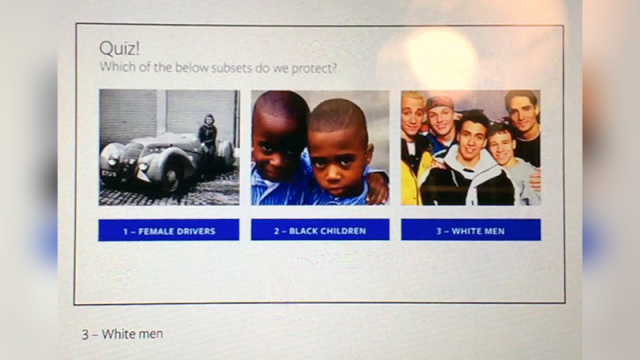

On Wednesday, ProPublica published dozens of startling training documents reportedly used by Facebook to train moderators on hate speech. As the trove of slides and quizzes reveals, Facebook uses warped, one-sided reasoning to balance policing hate speech against users’ freedom of expression on the platform. This is perhaps best summarized by the above image from one of its training slideshows, wherein Facebook instructs moderators to protect “White Men,” but not “Female Drivers” or “Black Children.”

Image: ProPublica

Facebook only blocks inflammatory remarks if they’re used against members of a “protected class.” But Facebook itself decides who makes up a protected class, which leaves plenty of room for moderation to be applied arbitrarily at best and, at worst, against minoritized people critiquing those in power (particularly white men), something Facebook has routinely been accused of.

According to the leaked documents, here are the group identifiers Facebook protects:

Sex, Religious affiliation, National origin, Gender identity, Race, Ethnicity, Sexual Orientation, Serious disability or disease

And here are those Facebook won’t protect:

Social class, continental origin, appearance, age, occupation, political ideology, religions, countries

So “White men are arseholes” is unacceptable on Facebook because race and gender are protected. “Christians are arseholes” is verboten because religious affiliation is protected. “Christianity is for arseholes” is fine because religions themselves can be critiqued and no specific demographic is targeted. And “Black children are arseholes” is allowed because “children,” a group categorized according to age, are not protected.

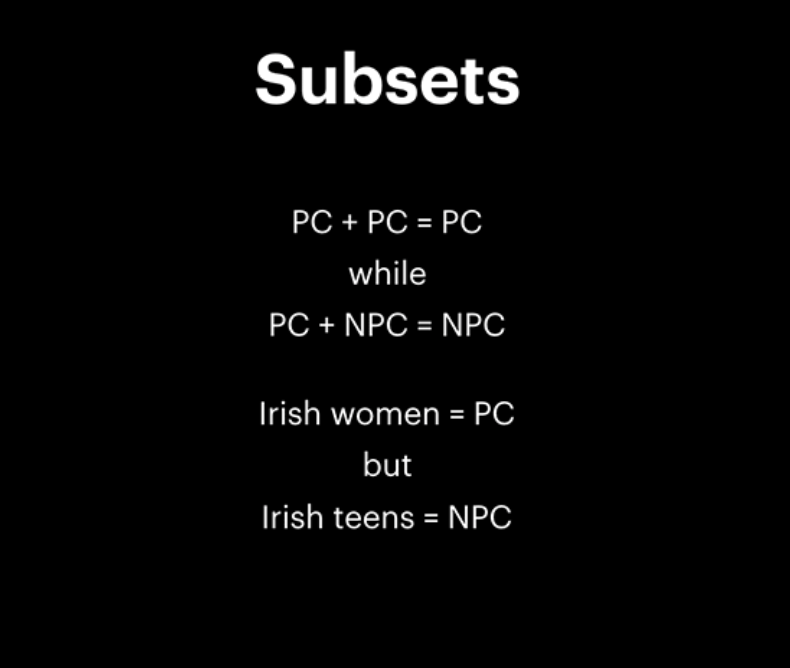

Image: ProPublica

Subsets of groups — female drivers, Jewish professors, gay liberals — aren’t protected either, as ProPublica explains:

White men are considered a group because both traits are protected, while female drivers and black children, like radicalized Muslims, are subsets, because one of their characteristics is not protected.
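The subset rule can be written out as a short Python sketch. This is only an illustration of the logic as the leaked slides describe it, not Facebook's actual moderation code: the function name `is_protected_group` and the mapping of each example group to categories are assumptions made here for clarity.

```python
# Categories the leaked slides list as protected.
PROTECTED = {
    "sex", "religious affiliation", "national origin", "gender identity",
    "race", "ethnicity", "sexual orientation", "serious disability or disease",
}

# Categories the slides list as not protected (shown for reference only).
UNPROTECTED = {
    "social class", "continental origin", "appearance", "age",
    "occupation", "political ideology", "religions", "countries",
}


def is_protected_group(trait_categories):
    """Hypothetical helper: a group counts as protected only if every
    trait that defines it falls in a protected category."""
    return bool(trait_categories) and all(
        category in PROTECTED for category in trait_categories
    )


# Assumed category mapping for the three groups named on the slide.
examples = {
    "White men": ["race", "sex"],
    "Female drivers": ["sex", "occupation"],
    "Black children": ["race", "age"],
}

for group, categories in examples.items():
    print(group, "->", is_protected_group(categories))
```

A single unprotected trait is enough to strip protection from the whole group, which is why only “White men” passes. The sketch leaves out the three exceptions the slides carve out for subsets (calls for violence, exclusion and segregation).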

This absurd “protected + not protected = not protected” reasoning only confirms that Facebook is woefully ill-equipped to combat hate speech or flag most instances of racism on its site. The company said that the exact wording of some of the rules may have changed, but the slides still raise the question of who gets to belong to a protected class and why. When asked by Gizmodo, Facebook would only point to a Tuesday blog post on hate speech, which provides no insight, only offering a peaceful blue tint and a stated desire to “reflect the diversity of human experience.”

As the leaked slides recreated by ProPublica (presumably out of caution) reveal, Facebook lists only three scenarios where inflammatory speech against subsets isn’t allowed: calls for violence, calls for exclusion and calls for segregation. But enormous loopholes let people disregard all three.

Image: ProPublica

Image: ProPublica

A “call for violence” against black people (such as “The police should kill Black Lives Matter thugs!”) is unacceptable, but it’s completely fine to say Mike Brown, shot dead in Ferguson, Missouri, by a white police officer, deserved to die.

A “call for exclusion” is unacceptable, but the group Round Up And Deport Every Illegal Alien In The USA is cool, apparently.

A call for segregation is impermissible, but it seems groups like “White Genocide Watch” and “White Genocide or Diversity,” which argue that separatism is the only way white people can survive in America, are both fine. Totally fine.

Facebook is neither bound by the First Amendment nor legally required to police hate speech. But, in its current approach, the company is ignoring its ostensible mission statement to “give people the power to build community and bring the world closer together.” Facebook knows full well that online communication is only the first step before people go out into the real world and act on the beliefs that tie them together. So reducing hate speech to an LSAT-prep semantics game, one that reframes hate and hate groups as “just” words, is dishonest. Ultimately, balancing freedom of expression with combatting hate speech for 2 billion users will require a much more robust, complex approach.