After steadfastly denying it has a problem with fake news, Facebook is finally admitting it does, in fact, have a problem with fake news. Today, it announced several specific ways in which it will try to fix these thorny issues.

In a blog post, Adam Mosseri, Facebook’s vice president of news feed, outlined the efforts. There are four primary initiatives: “easier reporting,” “flagging stories as disputed,” “informed sharing,” and “disrupting financial incentives for spammers.”

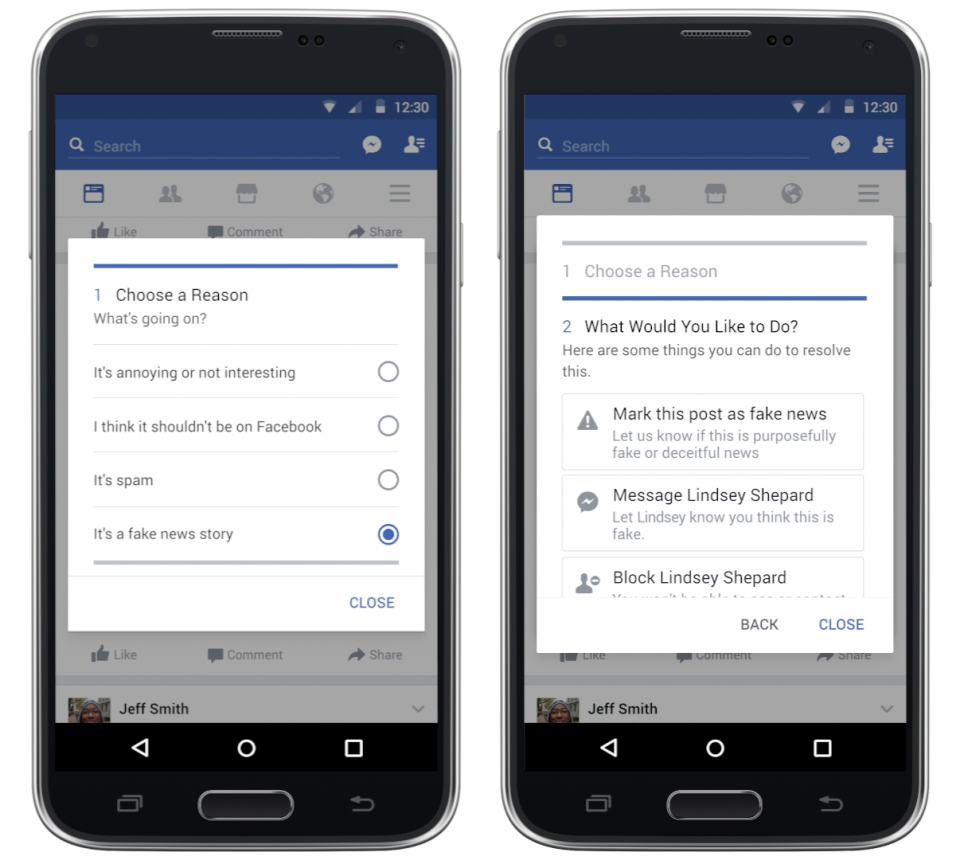

The first involves community reporting — something that hasn’t exactly worked perfectly for Facebook in the past — and will apparently make it easier for users to report something as fake.

The second initiative, “flagging stories as disputed,” is an interesting one. Further up in the blog post, Mosseri made it very clear that Facebook “cannot become arbiters of truth [them]selves,” which falls pretty squarely in line with Mark Zuckerberg’s repeated (and disingenuous) statements that the social platform isn’t a media company. To bolster this claim, Facebook will begin working with third-party fact-checking organisations to vet potentially fake news stories.

Recode reports that four organisations (ABC News, Politifact, FactCheck, and Snopes) will be part of this effort. According to ABC News president James Goldston, his company will put its team of election fact-checkers, about a half-dozen in all, to work ferreting out bullshit on Facebook. (He says ABC isn’t getting paid by Facebook.)

Once a story has been “disputed,” it will still show up, but users “will see a warning that the story has been disputed as [they] share.”

As for the “informed sharing” portion of the effort, Facebook says it will test a new ranking signal: if people who read a story are significantly less likely to share it, which the company takes as a sign the story may be misleading, that will factor into how it’s ranked on the news feed. And finally, “disrupting financial incentives for spammers” will allegedly deter fake website purveyors from making money, though the actual mechanisms by which Facebook plans to do this are rather vague.

Of course, Facebook is an incredibly powerful company that carries out its operations in opaque and mysterious ways, so it’s difficult to tell whether or not these efforts will actually do much of anything. At the end of the day, it’s still asking us to trust it to do the right thing, which is a very risky prospect indeed.