Last week, Mark Zuckerberg really pushed the limits of a Friday night news dump when he posted Facebook’s new plan for dealing with fake news, which includes vague notes on “warnings” and “disrupting fake news economics”. Again, the social media mogul mostly communicated that he would just like us to trust him.

Photo: Getty

Zuckerberg began his message on combating false information by writing several sentences in a row that are mostly untrue. First, he wrote, “The bottom line is: we take misinformation seriously.” Facebook has repeatedly refused to acknowledge that it functions as a media company and therefore has a responsibility to make every effort to deliver the truth.

Then he wrote, “Our goal is to connect people with the stories they find most meaningful, and we know people want accurate information.” As Gizmodo reported earlier this week, insiders at the company claim that Facebook had tools to fight fake news but decided not to use them because they “disproportionately impacted right-wing news sites by downgrading or removing that content from people’s feeds.”

That is to say, users would not be happy if they found out they weren’t getting their “news” because the information was inaccurate.

Zuckerberg has previously said “more than 99 per cent of what people see [on Facebook] is authentic,” but that figure comes from internal statistics that haven’t been independently reviewed. Pressure on the company to address the issue increased when BuzzFeed reported that false news stories “outperformed” accurate information in the weeks before the election.

The CEO then wrote that Facebook currently relies on the community to flag posts and that they “use signals from those reports along with a number of others — like people sharing links to myth-busting sites such as Snopes — to understand which stories we can confidently classify as misinformation.” Zuckerberg seems to be saying that Facebook has already made some determinations about what kind of site is trustworthy (Snopes, for example) and it uses that information.

In the past, the young billionaire has publicly worried that making decisions like that would be a slippery slope toward his company wielding too much power. But reading this statement, it is clear that the company has already determined that at least one site is trustworthy: Snopes. He doesn’t name any other sites that the company has deemed reliable, leaving our imaginations to run wild with possibility.

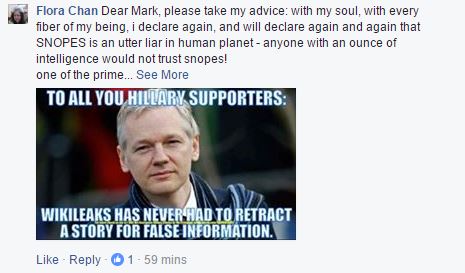

But of all his plans that were laid out in the post, using third-parties for fact checking seems to be prominent and the most realistically effective option. Unfortunately for him, he will have to take some heat from the people who don’t particularly “want accurate information,” like this commenter on his post who takes issue with Snopes:

Screenshot: Facebook

Facebook is afraid to offend certain users and afraid of giving up the illusion that its service is just as open and neutral as the scary world wide web.

Here’s the plan to fix the fake news problem that Mark Zuckerberg is now kinda-sorta admitting might be a big deal.

– Stronger detection. The most important thing we can do is improve our ability to classify misinformation. This means better technical systems to detect what people will flag as false before they do it themselves.

– Easy reporting. Making it much easier for people to report stories as fake will help us catch more misinformation faster.

– Third party verification. There are many respected fact checking organisations and, while we have reached out to some, we plan to learn from many more.

– Warnings. We are exploring labelling stories that have been flagged as false by third parties or our community, and showing warnings when people read or share them.

– Related articles quality. We are raising the bar for stories that appear in related articles under links in News Feed.

– Disrupting fake news economics. A lot of misinformation is driven by financially motivated spam. We’re looking into disrupting the economics with ads policies like the one we announced earlier this week, and better ad farm detection.

– Listening. We will continue to work with journalists and others in the news industry to get their input, in particular, to better understand their fact checking systems and learn from them.

This vague multi-pronged outline identifies the core approaches that Facebook can take to fight the problem and it’s not bad as far as first thoughts go. But the plan, yet again, is almost entirely without specifics.

The two bullet points with the most potential are “Third party verification” and “Listening.” There’s nothing wrong with “listening” and “learning,” but if you’re going to be this vague, would it kill you to throw out “and take action once we have”?

Until Facebook shows more specific progress, allows greater independent review of its internal statistics and studies, and starts acting like a responsible media company, this whole plan is very difficult to trust.

Many commenters on Zuckerberg’s post wanted to know why he would make such an important announcement so late on a Friday night, when it would get missed in the news cycle. The mogul responded, “For those asking why I posted this at 9:30pm, that’s when I landed and got into in Lima last night. Looking forward to the APEC Summit today.” At 9am this morning, that conference kicked off with a Facebook Live broadcast posted to Zuckerberg’s page, ensuring that his previous post would get lower priority in his followers’ news feeds. Maybe he knows a thing or two about how it works after all.