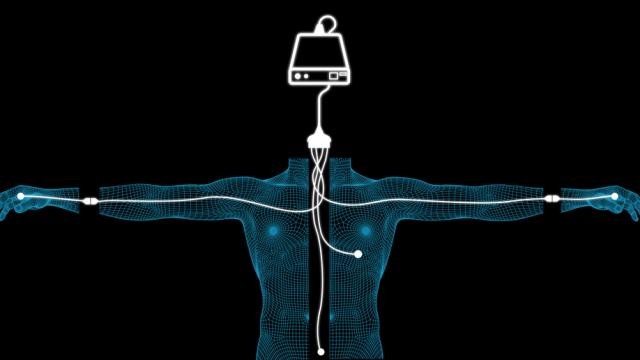

The prospect of uploading your brain into a supercomputer is an exciting one — your mind can live on forever, and expand its capacity in ways that are hard to imagine. But it leaves out one crucial detail: Your mind still needs a body to function properly, even in a virtual world. Here’s what we’ll have to do to emulate a body in cyberspace.

Illustration by Jim Cooke

We are not just our brains. Conscious awareness arises from more than just raw calculations. As physical creatures who emerged from a material world, it’s our brains that allow us to survey and navigate through it; bodies are the fundamental medium for perception and action. Without an environment — along with the ability to perceive and work within it — there can be no subjective awareness. Brains need bodies, whether that brain resides in a vertebrate, a robot, or in future, an uploaded mind.

In the case of an uploaded mind, however, the body doesn’t have to be real. It just needs to be an emulation of one. Or more specifically, it needs to be a virtual body that confers all the critical functions of a corporeal body such that an uploaded or emulated mind can function optimally within its given virtual environment. It’s an open question as to whether or not uploading is possible, but if it is, the potential benefits are many.

But knowing which particular features of the body need to be reconstructed in digital form is not a simple task. So, to help me work through this futuristic thought experiment, I recruited the help of neuroscientist Anders Sandberg, a researcher at the University of Oxford’s Future of Humanity Institute and the co-author of Whole Brain Emulation: A Roadmap. Sandberg has spent a lot of time thinking about how to build an emulated brain, but for the purposes of this article, we exclusively looked at those features outside the brain that need to be digitally re-engineered.

Emulated Embodied Cognition

Traditionally, this area of research is called embodied cognition. But given that we’re speculating about the realm of 1s and 0s, it would be more accurate to call it virtual or emulated embodied cognition. Thankfully, many of the concepts that apply to embodied cognition apply to this discussion as well.

(Bletchley Museum)

Philosophers and scientists have known for some time that the brain needs a body. In his 1950 article, “Computing Machinery and Intelligence,” AI pioneer Alan Turing wrote:

It can also be maintained that it is best to provide the machine with the best sense organs that money can buy, and then teach it to understand and speak English. That process could follow the normal teaching of a child. Things would be pointed out and named, etc.

Turing was talking about robots, but his insights are applicable to the virtual realm as well.

Similarly, roboticist Rodney Brooks has said that robots could be made more effective if they plan, process, and perceive as little as possible. He recognised that constraint in these areas would likewise constrain capacity, thus making the behaviour of robots more controllable by their creators (i.e. where computational intelligence is governed by a bottom-up approach instead of superfluous and complicated internal algorithms and representations).

Indeed, though cognition happens primarily (if not exclusively) in the brain, the body transmits critical information to it, in order to fuel subjective awareness. A fundamental premise of embodied cognition is the idea that the motor system influences cognition, along with sensory perception, and chemical and microbial factors. We’ll take a look at each of these in turn as we build our virtual body.

More recently, AI theorist Ben Goertzel has tried to create a cognitive architecture for robot and virtual embodied cognition, which he calls OpenCog. His open source intelligence framework seeks to define the variables that will give rise to human-equivalent artificial general intelligence. Though Goertzel’s primary concern is in giving an AI a sense of embodiment and environment, his ideas fit in nicely with whole brain emulation as well.

The Means of Perception

A key aspect of the study of embodied cognition is the notion that physicality is a precondition to our intelligence. To a non-trivial extent, our subjective awareness is influenced by motor and sensory feedback fed by our physical bodies. Consequently, our virtual bodies will need to account for motor control in a virtual environment, while also providing for all the senses, namely sight, smell, sound, touch, and taste. Obviously, the digital environment will have to produce these stimuli if they’re to be perceived by a virtual agent.

For example, we use our tactile senses a fair bit to interact with the world.

“If objects do not vibrate as a response to our actions, we lose much of our sense of what they are,” says Sandberg. “Similarly, we use the difference in sound due to the shape of our ears to tell what directions they come from.”

So, in a virtual reality environment, this could be handled using clever sound processing, rather than simulating the mechanics of the outer ear. Sandberg says we’ll likely need some exceptionally high-resolution simulations of the parts of the world we interact with.

As an aside, he says this is also a concern when thinking about the treatment of virtual lab animals. Not giving virtual mice or dogs a good sense of smell would impair their virtual lives, since rodents are very smell-oriented creatures. While we know a bit about how to simulate them, we don’t know much about how things smell to rodents — and the rodent sense of smell can be tricky to measure.

Sandberg also stresses the importance of physicality and the capacity to move, adding: “When viewing the world, we move our eyes in saccades to focus on particular objects; to see, we need to move. Just projecting patterns onto the visual cortex is not going to correspond to how we experience the world.” (Saccades are “rapid, ballistic movements of the eyes that abruptly change the point of fixation.”)

Our physicality also has a profound impact on our emotional states, and how we interpret our interactions with the world. As University of Southern California neuroscientist Antonio Damasio pointed out in Descartes’ Error: Emotion, Reason, and the Human Brain, our brains use our bodies as intermediaries to inform us of the feelings we have. This is what’s known as the “somatic marker hypothesis” — the idea that there’s a mechanism by which emotional responses can guide or bias behaviour and decision-making.

As Sandberg explains: “When we have butterflies in our stomachs, because of activity in the sympathetic nervous system, this feeling tells some parts of our minds that ‘didn’t get the memo’ that we are nervous. Leaving out the muscular fluttering would change how it feels to be scared as an emulation.” Sometimes this might (obviously) be a good thing, but he says we should also consider the corresponding somatic markers in sexual arousal, something else we might want our virtual bodies to have.

Along the same lines, the dynamic state of our physicality, like posture and facial expressions, can influence our mood. Studies show that facial movement can influence emotional experience, a phenomenon known as the “facial feedback hypothesis.”

A 1988 study showed that when we’re forced to smile, in this case by placing a pen between our teeth, we’re happier. But when we’re forced to frown, say by placing a pen between our lips, we don’t respond as jovially to amusing stimuli. Similar studies have shown that Botox injections decrease our ability to empathise with others, and that this stunts the emotional growth of young people.

Lastly, the act of laughing, which has a definite physical component, is known to confer a number of health benefits, including a better mood. A recent proof-of-concept trial showed that laughing gas, or nitrous oxide, can ease the symptoms of severe depression.

Consequently, when building our emulated body, we’ll have to provision for the facial feedback hypothesis and other aspects of physicality that alter our brain states.

Body Chemistry

The brain communicates with itself by disseminating chemical information among neurons. But the body plays an important role in this process as well. At the lowest level, the brain interacts chemically with the body, and some of this has important effects on our subjective awareness.

“We do not know if emulations need to go down to the neurotransmitter level,” Sandberg tells io9, “but it is likely: we know noradrenaline, serotonin and dopamine affect mood and how we think, so abstracting them away may not be feasible. We may not have to simulate their detailed biochemistry, but there are no doubt some interactions that need to be dealt with.”

At the same time, there are other factors to consider as well, like the ingredients of food and atmospheric composition.

“It wouldn’t surprise me if some low-level biochemistry like glucose and oxygen levels affect how we function,” says Sandberg. “A blood glucose boost tends to produce a memory enhancement for example, and this appears to be one way adrenaline boosts improve memory — they trigger a glucose boost. At the very least, it might feel different if one always has optimal levels of glucose and oxygen, or no buildup of adenosine, which makes us feel tired.”

Indeed, the ingestion of substances, like foods, drink, and drugs, is an important factor in all of this. While many of the chemicals in these substances affect brain function directly, there’s a strong possibility that their effects also depend on bodily processes, including how they are metabolised in the body.

“I suspect an emulation will have some emulated biochemistry but leave out much of what is going on in real biology, since it is just maintenance processes,” adds Sandberg. “But some of these processes might affect the ‘feel’ of living, so there could be a need for a fair bit of tinkering.”

He quips that an uploaded person might say: “I went back to having a glucose peak after virtual meals, it fitted better with the alcohol emulation.” And on that note, to emulate the effects of alcohol we’d have to modulate various GABA receptors as per David Nutt’s ideas for artificial alcohol.

And of course, there are the hormones. Sandberg says that hunger and sexual desire are driven by ghrelin and sex hormones, which subtly alter our moods. Leptin might also influence our appetite.

“Fortunately most of these diffusing chemicals change on slow (seconds to minutes) timescales and in relatively simple ways, so simulating them is computationally easy compared to the brain,” he says. “We can also map what neurons have receptors for them. The headache is figuring out what affects their production.”
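To give a rough sense of why slowly changing chemicals are cheap to simulate, here is a minimal sketch in Python. It is not Sandberg's model or anyone's actual emulation design: it assumes each chemical can be tracked as a single number that is produced at some rate and cleared with a fixed half-life (simple first-order kinetics), and it updates that number once per simulated second. The names `Chemical` and `simulate`, and every numeric value, are hypothetical placeholders rather than physiological data.

```python
import math
from dataclasses import dataclass


@dataclass
class Chemical:
    """One diffusing signal (for example a hormone), tracked as a single level."""
    level: float        # current concentration, arbitrary units (placeholder)
    half_life_s: float  # assumed clearance half-life in seconds (placeholder)

    def step(self, production_rate: float, dt_s: float) -> None:
        # First-order kinetics: d(level)/dt = production_rate - k * level
        k = math.log(2) / self.half_life_s
        self.level += (production_rate - k * self.level) * dt_s


def simulate(chem: Chemical, production_rate: float,
             duration_s: float, dt_s: float = 1.0) -> float:
    """Advance the chemical with fixed explicit Euler steps; return the final level."""
    for _ in range(int(duration_s / dt_s)):
        chem.step(production_rate, dt_s)
    return chem.level


if __name__ == "__main__":
    # Hypothetical hormone with a 10-minute half-life and constant production.
    hormone = Chemical(level=0.0, half_life_s=600.0)
    print(simulate(hormone, production_rate=1.0, duration_s=3600.0))
```

Under these assumptions, an hour of simulated time costs a few thousand arithmetic operations per chemical, which is negligible next to any plausible brain simulation. The part the sketch leaves out is the hard one Sandberg points to: here `production_rate` is just a constant, whereas in a real body it would depend on everything else going on.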

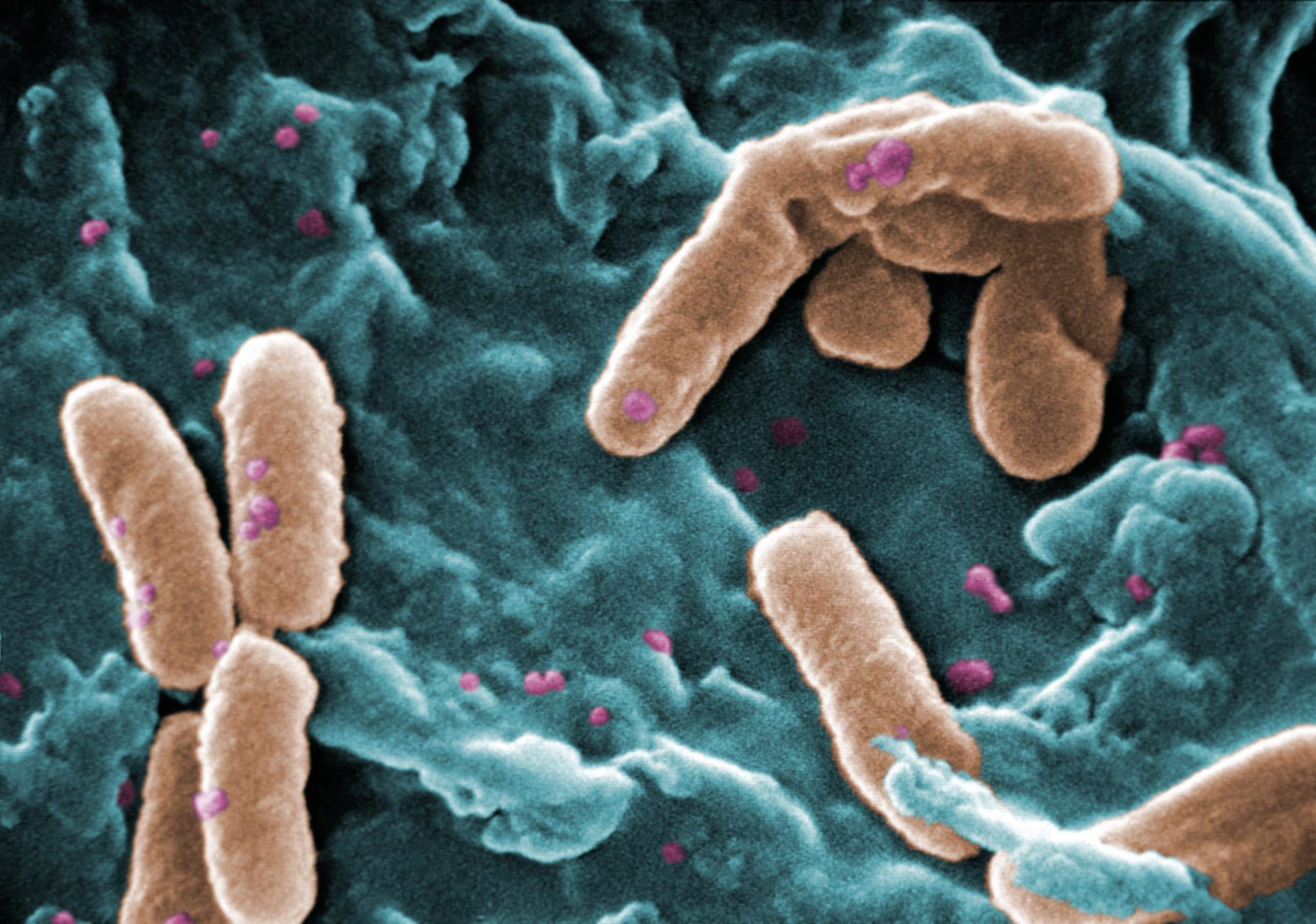

(wustl.edu)

Another challenge: Figuring out how all the microbes in your body might affect your cognitive function. University College Cork neuroscientist John F. Cryan suspects that our gut microbiota communicates with the central nervous system, possibly through neural, endocrine and immune pathways, thus influencing brain function and behaviour. Studies suggest that gut microbiota play a role in the regulation of anxiety, mood, cognition, and pain. And indeed, research from last year pointed to a possible link between gut microbiota and depression.

Emulating the role of our gut microbiota in a virtual body will not be easy. The human gastrointestinal tract is home to 10^14 bacterial organisms. Knowing which ones affect human cognition and in which ways will be a monumental, but not impossible, task.

“It may seem overwhelming, but this is largely a Big Data and Big Sensors issue,” says Sandberg. “Figuring out what produces what chemicals and where they bind: we are currently doing this on a scale that would astound a 1990s researcher in genomics and proteomics. Metabolomics and microbiomics are next in line. The challenge will be to know which obscure pathways and interconnections to leave out because many of them are simply redundant. Simply simulating and seeing that the system behaves nicely is not enough if we want to get the right ‘feel.’”

And in the end, it may not be a critical factor in ensuring authentic brain function.

“But we cannot know it is irrelevant — we’d better experiment with it,” says Sandberg. “Fortunately we can experiment on ourselves before uploading by changing our microflora, and no doubt many of those results will be medically useful or scientifically interesting anyway.”

Low Resolution Bodies

All this said, emulating or uploading a brain will prove to be far more complicated than creating a virtual body. Sandberg suspects that we won’t need to emulate bodies at anything near brain resolution. Close enough will be good enough.

“We don’t need perfectly accurate muscle nutrition or mechanical simulation of every inch of intestine,” he says. “And we can probably leave out the poor spleen.”

Indeed, there are parts of the body that don’t need to be included at all, unless we wish to preserve them for functional or aesthetic reasons — or to simply show off our computational wealth. We won’t need every strand of hair, nor will we need our fingernails to grow. Moreover, given the radical potential of cyberspace — and the realisation that we’ll be able to upload ourselves in virtual bodies of near-limitless shapes, sizes, and functions — we’ll have to make sure that our virtual brains match the demands of whatever body we put them into.

For example, a virtual body with no momentum would likely move too quickly to control. And adding an extra set of arms would require a new part of the cortex that learns how to control them, along with the capacity for the rest of our motor system and body image to learn that they’re there.

“It is a kind of inverse phantom limb problem, and I think it will require a fair bit of training to learn to use them,” says Sandberg. “Sure, we can speed up motor plasticity in the emulation, but there will still be a bit of exercising.”

In the end, this will be a work in progress, Sandberg speculates: “My guess is that newbie emulations will spend an inordinate time fine tuning their bodies, and that being a body emulation consultant will be a lucrative job in the post-uploading world.”

Follow George on Twitter and friend him on Facebook.

Abstract 3D image: Koya979/Shutterstock