The Amazon Fire Phone’s Dynamic Perspective — the famed “3D effect” — works almost as if by magic. As you look at the phone and move it around, the Fire Phone watches you back and changes what you see. It’s not voodoo though. It’s crazy camera tech. Here’s a closer look at how it works, even in the dark.

At its core, Amazon’s Dynamic Perspective is a face-detection and tracking technology similar to what we’ve had on smartphone cameras and point-and-shoots for years. You’ve seen face-detect autofocus (most smartphones have it), and it works decently well. You point your camera at a person, it draws a box around their face and focuses at the correct distance so the face comes out nice and sharp.

The face-tracking technology Amazon is using takes that same concept to a much more sophisticated level. It isn’t just detecting your face to set focus; it has to track the position and movement of your face extremely accurately, because several different features depend on it.

In the flashiest case, shown at the top of this post, moving your head shifts the map, wallpaper or whatever else is in front of you to create a 3D effect. Amazon even built an engine so that the effect can be used to look around game worlds. Beyond the 3D, though, the cameras watch your face so that a flick or tilt of your phone (or your face) scrolls a webpage or reveals additional information and settings.
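The parallax behind the 3D effect can be illustrated with a simple “window” model. This is a minimal sketch, not Amazon’s implementation: it assumes the phone already knows how far your head has moved sideways (`head_xy_mm`) and how far your eye is from the screen (`eye_to_screen_mm`), and it treats each wallpaper layer as sitting a fixed, hypothetical depth behind the glass. Re-projecting each layer through the eye onto the screen means deeper layers slide further than shallow ones, which is what sells the illusion of depth.

```python
from typing import Dict, Tuple


def layer_shift_mm(head_offset_mm: float, eye_to_screen_mm: float,
                   layer_depth_mm: float) -> float:
    """Apparent shift, measured on the screen plane, of a point that sits
    `layer_depth_mm` behind the glass when the eye moves `head_offset_mm`.

    Window model: the point is re-projected through the eye onto the screen,
    so it moves in the same direction as the head. Deeper points shift more,
    approaching the full head offset; points on the glass do not move.
    """
    return head_offset_mm * layer_depth_mm / (layer_depth_mm + eye_to_screen_mm)


def parallax_offsets(head_xy_mm: Tuple[float, float], eye_to_screen_mm: float,
                     layer_depths_mm: Dict[str, float]) -> Dict[str, Tuple[float, float]]:
    """Per-layer (dx, dy) offsets in millimetres for one head-tracking sample."""
    hx, hy = head_xy_mm
    return {
        name: (layer_shift_mm(hx, eye_to_screen_mm, depth),
               layer_shift_mm(hy, eye_to_screen_mm, depth))
        for name, depth in layer_depths_mm.items()
    }


# Hypothetical scene: icons on the glass, clouds 10 cm back, mountains 30 cm back.
layers = {"icons": 0.0, "clouds": 100.0, "mountains": 300.0}
# Head 30 mm right of and 10 mm above centre, eye 30 cm from the screen.
offsets = parallax_offsets((30.0, 10.0), 300.0, layers)
for name, (dx, dy) in offsets.items():
    print(f"{name:>9}: dx={dx:+.1f} mm, dy={dy:+.1f} mm")
```

With these made-up numbers the clouds shift 7.5 mm sideways and the mountains 15 mm, while the icons stay put. A real renderer would also convert millimetres to pixels using the display’s pixel density.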

To do it, Amazon needs perspective on you: the phone uses four specialised cameras that measure your head’s position very accurately many times a second. (Jeff Bezos cited 60 times a second, though only as an example.)

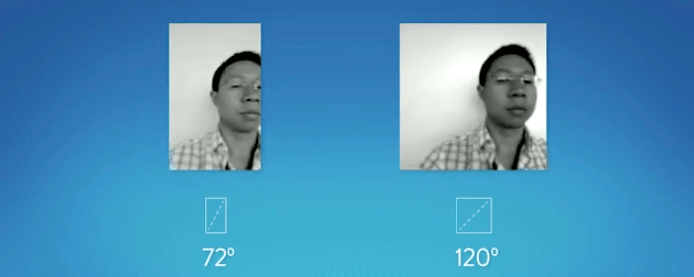

The cameras on the Fire Phone have a 120-degree field of view, much wider than the 72 degrees of a standard front-facing camera. As Bezos explained, the wider field of view lets the Fire Phone track a wider range of motion; without it, Dynamic Perspective would be impossible.
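A bit of trigonometry shows why the wider lens matters: a camera with field of view θ sees a strip 2·D·tan(θ/2) wide at distance D. The sketch below assumes the quoted angles are horizontal and that the lenses behave like ideal pinhole cameras; the 30 cm holding distance is also an assumption, not a figure from Amazon.

```python
import math


def coverage_width_mm(fov_deg: float, distance_mm: float) -> float:
    """Width of the region a camera can see at `distance_mm`, assuming an
    ideal rectilinear (pinhole) lens and a horizontal field of view."""
    return 2.0 * distance_mm * math.tan(math.radians(fov_deg) / 2.0)


DISTANCE_MM = 300.0  # hypothetical: phone held about 30 cm from the face
for fov in (72.0, 120.0):
    width = coverage_width_mm(fov, DISTANCE_MM)
    # Roughly, the face stays in view while its centre is within half that
    # width of the camera's axis (ignoring the size of the face itself).
    print(f"{fov:5.0f} deg: {width:6.0f} mm wide, "
          f"head can drift about {width / 2:4.0f} mm either way")
```

At 30 cm, the 72-degree lens covers roughly 44 cm side to side, while the 120-degree lens covers roughly 104 cm, about 2.4 times as much. Real wide-angle lenses deviate from the pinhole model, so treat these as ballpark figures.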

The Fire Phone’s cameras monitor your head’s position relative to the phone’s center in X, Y and Z coordinates, as well as how far your head is turned to the left or right. Depending on how much your face moves and how quickly, you can get variations on an effect or entirely different effects.
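To make the “how much and how quickly” distinction concrete, here is a hypothetical classifier over a short window of head-pose samples taken 60 times a second (the rate Bezos gave as an example). The `HeadPose` fields mirror the quantities described above, but the field names, units and both thresholds are invented for illustration; they are not values from Amazon’s SDK. A fast change in angle counts as a flick, and a slower but sizeable one counts as a tilt.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HeadPose:
    """One tracking sample. Field names and units are hypothetical."""
    t: float        # timestamp, seconds
    x: float        # mm left/right of the phone's centre
    y: float        # mm above/below the phone's centre
    z: float        # mm from the screen
    yaw_deg: float  # head angle relative to the phone, + = turned right


# Invented thresholds, for illustration only.
FLICK_MIN_DEG_PER_S = 120.0  # average turn speed at or above this is a flick
TILT_MIN_DEG = 5.0           # a slower turn must be at least this big


def classify(window: List[HeadPose]) -> str:
    """Label a short run of samples as 'flick', 'tilt' or 'none'."""
    if len(window) < 2:
        return "none"
    first, last = window[0], window[-1]
    elapsed = last.t - first.t
    if elapsed <= 0:
        return "none"
    turn = last.yaw_deg - first.yaw_deg
    if abs(turn) / elapsed >= FLICK_MIN_DEG_PER_S:
        return "flick"
    if abs(turn) >= TILT_MIN_DEG:
        return "tilt"
    return "none"


def samples(total_turn_deg: float, duration_s: float, rate_hz: int = 60) -> List[HeadPose]:
    """Synthetic samples of a steady turn, taken `rate_hz` times a second."""
    n = max(1, round(duration_s * rate_hz))
    return [HeadPose(t=i / rate_hz, x=0.0, y=0.0, z=300.0,
                     yaw_deg=total_turn_deg * i / n)
            for i in range(n + 1)]


print(classify(samples(20.0, 0.1)))  # 20 degrees in 0.1 s -> flick
print(classify(samples(10.0, 1.0)))  # 10 degrees in 1 s -> tilt
print(classify(samples(2.0, 1.0)))   # 2 degrees in 1 s -> none
```

Because the angle is measured between your head and the phone, turning either one produces the same signal, which matches the “phone (or your face)” behaviour described above.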

In order to keep track of your head in three dimensions, the phone needs measurements from two cameras, much like you need two eyes to perceive three dimensions. The phone requires four cameras total — one in each corner — because we all use our phones in different positions and hold them in different ways depending on the situation or app. An algorithm determines which two are returning the best data at any given time.
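The two-camera idea is classic stereo triangulation. For two rectified pinhole cameras a baseline `B` apart with focal length `f` (in pixels), a feature that appears `d` pixels apart in the two images lies at depth `Z = f * B / d`. The sketch below shows that formula plus one possible way to choose a pair. Amazon hasn’t said how its selection algorithm works, so the per-camera confidence scores, camera names and optics here are all hypothetical.

```python
from itertools import combinations
from typing import Dict, Tuple


def depth_mm(focal_px: float, baseline_mm: float, disparity_px: float) -> float:
    """Depth of a feature seen by two rectified pinhole cameras: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_mm / disparity_px


def best_pair(confidence: Dict[str, float]) -> Tuple[str, str]:
    """Pick the two cameras whose weaker member has the highest confidence.

    `confidence` is a hypothetical per-camera score in [0, 1], e.g. how
    clearly that camera currently sees the face.
    """
    if len(confidence) < 2:
        raise ValueError("need at least two cameras")
    return max(combinations(sorted(confidence), 2),
               key=lambda pair: min(confidence[pair[0]], confidence[pair[1]]))


# A thumb over the bottom-left camera drags its score down.
scores = {"top_left": 0.9, "top_right": 0.85,
          "bottom_left": 0.2, "bottom_right": 0.7}
print(best_pair(scores))  # ('top_left', 'top_right')
# Hypothetical optics: 500 px focal length, 60 mm between the two cameras.
print(depth_mm(500.0, 60.0, 100.0))  # 300.0 (mm)
```

Depth is inversely proportional to disparity, so a longer baseline (for example, two cameras at opposite corners) produces larger disparities, and therefore finer depth resolution, at the same distance.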

Additionally, because the 3D feature needs to work in dim conditions too, each of the cameras has infrared LEDs that light up your face with light you can’t see, so the cameras can track you even in the dark.

Beyond the hardware, there’s a database of millions of faces that Amazon says took years to assemble. That huge database helps the phone reliably detect a wide range of faces and avoid false positives. For example, the phone will find you even if you’re backlit or wearing a hat, and it won’t lock onto things that look like faces but aren’t, like a 3-inch image of a rock star on a poster.

All of this tech is really impressive and unique, but it remains to be seen whether the implementations can be anything more than a gimmick. After some hands-on time with the Fire Phone, we weren’t totally convinced that scrolling with a tilt or revealing a menu with a flick really made the phone better, even though both worked as promised.

The great hope is that we’ve only seen the beginning. Amazon’s Dynamic Perspective SDK makes it easy for developers to grab and use this data, and it even includes a few shortcut gestures. We’ll have to wait and see whether third parties can use Amazon’s tech to do what Amazon hasn’t: build uses for Dynamic Perspective that make us feel like we can’t live without it.