Google’s new Project Tango is a limited-run experimental phone that’ll be handed out to 200 lucky developers next month. It’s got depth-sensing vision and a specialized chipset to help it process what it sees, a bit like having a really sophisticated Kinect in your phone. It’s pretty nuts.

Project Tango is an experiment…

Like Google’s modular smartphone concept, Project Ara, Project Tango comes out of the Advanced Technology and Projects group, the one part of Motorola that Google isn’t selling to Lenovo. Tango is headed up by Johnny Lee, the former Microsoft researcher who was one of the brains behind Kinect.

Guts that can see better

Project Tango is a five-inch phone, but what sets it apart is its visual processing chipset. Developed by the startup Movidius, the Myriad 1 is the first implementation of the company’s homegrown visual processing technology. The hardware is entirely proprietary, from the silicon layout to the instruction set to the software platform built on top. Basically, the Myriad 1 is built to handle much more complex visual processing than your ordinary smartphone chip. In the words of CEO Remi El-Ouazzane, the goal is to “extract intelligence out of a scene.”

In other words, the goal is to create a processor that sees not just depth and space but also objects and context. As El-Ouazzane points out, this higher-level visual processing has been accomplished computationally before, so what makes Movidius’ chipset interesting is its 8mm x 8mm size.

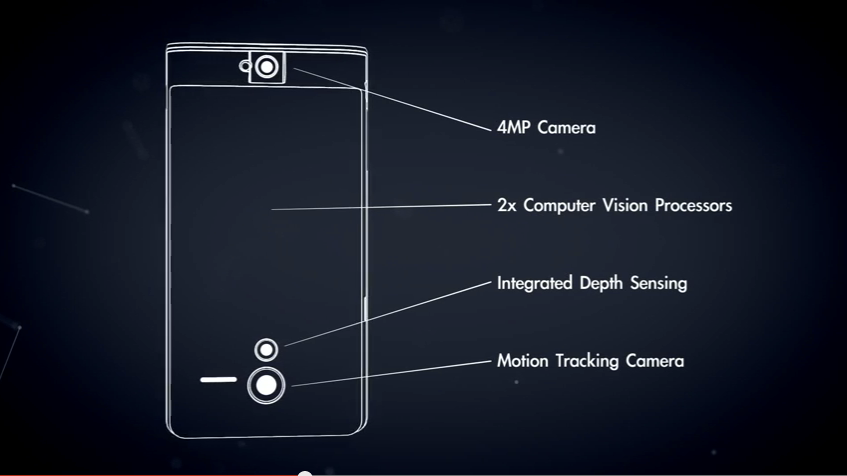

Of course, all that power needs more than the standard gyroscope, compass, and accelerometers. According to Google, Project Tango will also have a Kinect-like depth camera, a motion-sensing camera, and two vision processors. We know that at least one of those is from Movidius. What’s the other?

What is Project Tango for?

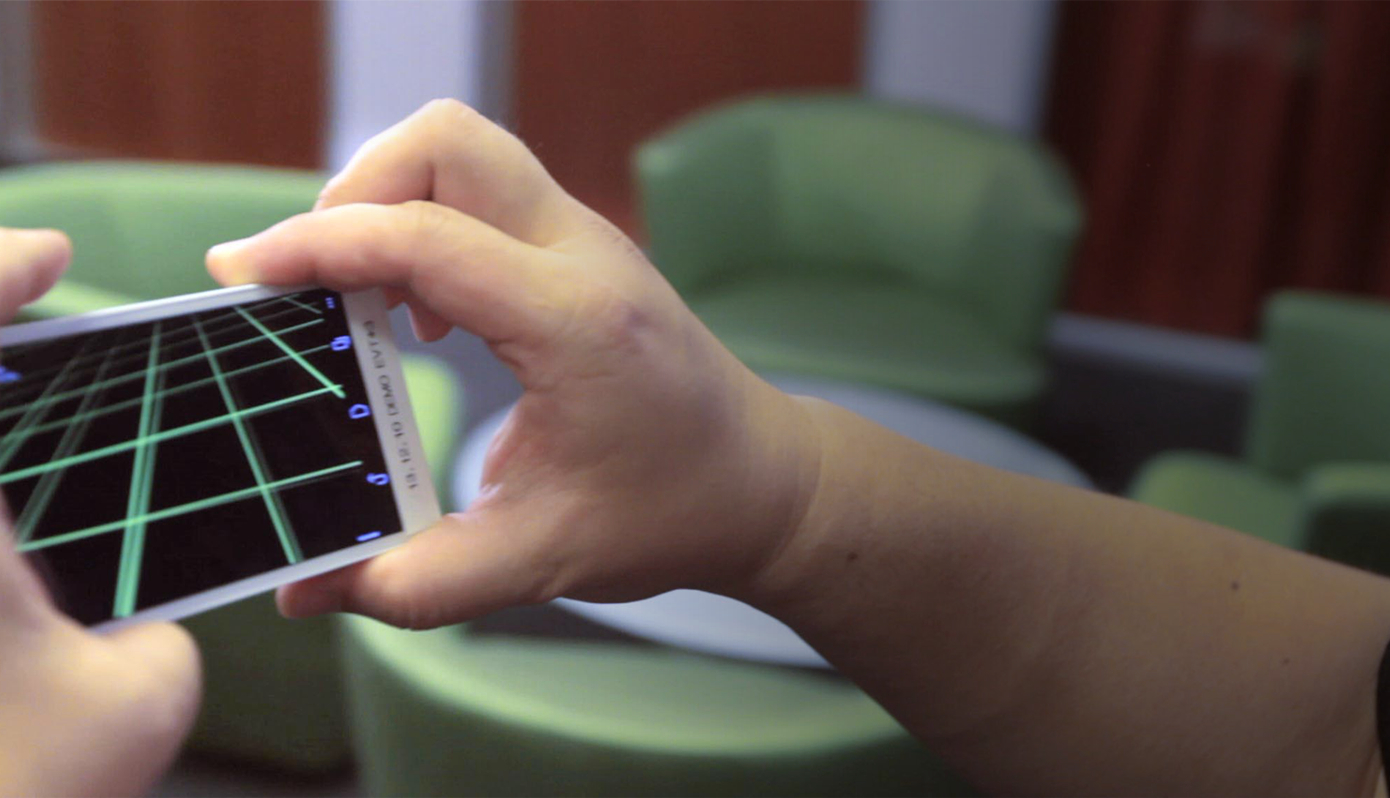

Despite all of Movidius’ claims about the potential power of its chipset, it appears Google is mainly interested in using it to create sophisticated mapping applications. According to what Google told us before the announcement, Project Tango is a “Mobile device that understands space and motion using custom hardware and software,” and it uses that information to build 3D maps as it goes.

To do this, the device’s sensors capture position, orientation, and depth data, and the phone processes it all in real time into a single 3D model. This data will be available via an API that pulls it into Android apps. The on-board sensors capture “over a quarter million” 3D measurements every second.
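For the curious, here’s a rough sketch of what merging position, orientation, and depth data into one model can look like. This is not Tango’s API, which hasn’t been published; the function names and the choice of a unit quaternion for orientation are assumptions made purely for illustration. The idea is that each depth point is measured relative to the phone, so it gets rotated by the phone’s orientation and shifted by the phone’s position to land in a shared world frame.

```python
import math

def quat_rotate(q, v):
    """Rotate 3D vector v by unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * cross(q_vec, v)
    tx = 2.0 * (y * vz - z * vy)
    ty = 2.0 * (z * vx - x * vz)
    tz = 2.0 * (x * vy - y * vx)
    # v' = v + w * t + cross(q_vec, t)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )

def depth_points_to_world(points, position, orientation):
    """Map device-frame depth points into the world frame.

    points:      iterable of (x, y, z) measured relative to the device
    position:    (x, y, z) of the device in the world frame
    orientation: unit quaternion (w, x, y, z), device frame -> world frame
    (All three inputs are hypothetical stand-ins for real sensor output.)
    """
    px, py, pz = position
    world = []
    for p in points:
        rx, ry, rz = quat_rotate(orientation, p)
        world.append((rx + px, ry + py, rz + pz))
    return world

# Example: the device sits at (1, 2, 3) and is turned 90 degrees about the
# vertical (z) axis; it sees one point a metre straight ahead along its x axis.
half_angle = math.radians(90) / 2
pose_orientation = (math.cos(half_angle), 0.0, 0.0, math.sin(half_angle))
model = depth_points_to_world([(1.0, 0.0, 0.0)], (1.0, 2.0, 3.0), pose_orientation)
print(model)
```

Run that for every depth reading as the phone moves and the points pile up into a single cloud, which is the raw material for a 3D map. A real system would also have to correct for sensor drift and noise, which this sketch ignores.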

According to Google ATAP’s PR, Google wants to see what people want to do with it, but the company does offer some guidance on the sorts of applications it might be useful for: What if directions to a place didn’t end at the street address? What if you could simply walk around a room to figure out its dimensions before shopping for furniture?

“Mobile phones today assume that the physical world ends at the boundaries of the screen,” says Johnny Lee in the demo video above. “Our goal is to give mobile devices a human scale understanding of space and motion.”

Oh, and if you were worried about privacy, this shouldn’t make you feel any better.

How do I get it?

The first run of 200 Project Tango dev kits will be, as their name implies, for developers only. If you’re interested, you’ll need to submit an application. The kits will be sent out by 14 March 2014. [Project Tango]