A few weeks ago, we wrote about a tiny micro-bot designed to be injected into a patient’s eye and controlled via magnet — a speck-sized eye surgeon. This week, a group of Berkeley researchers published a study positing a similar concept, except the ‘bots are inside your brain. And they’re the size of dust particles. It’s called neural dust. Of course.

Published on July 8, Neural Dust: An Ultrasonic, Low Power Solution for Chronic Brain-Machine Interfaces is the research equivalent of a think piece. But as think pieces go, it’s pretty compelling.

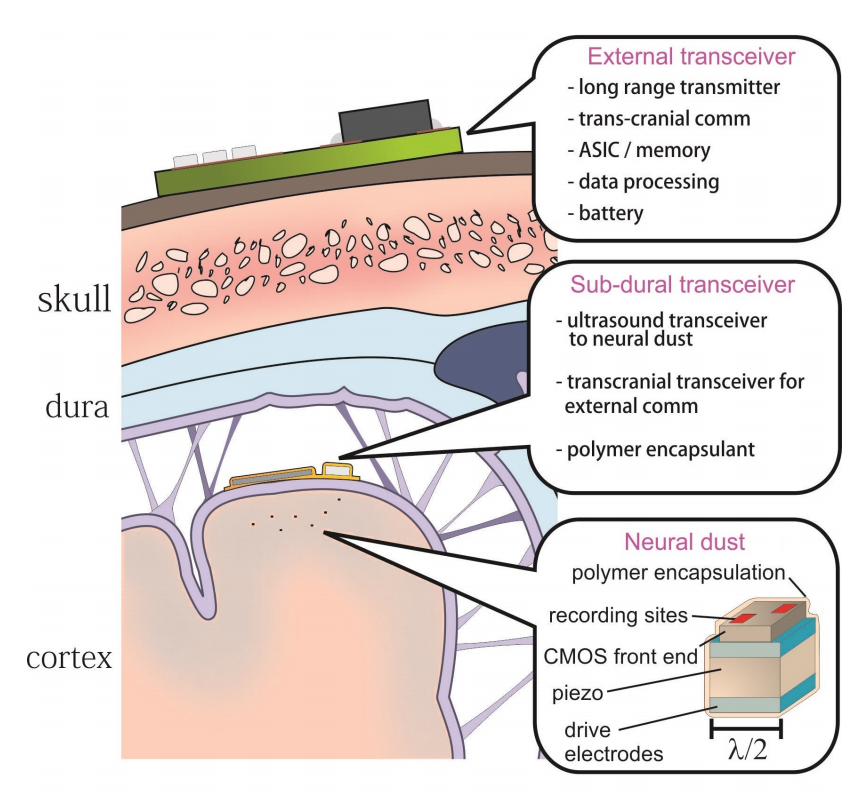

It assesses the plausibility of a brain-machine interface composed of three parts. First, thousands of microbe-sized sensor nodes (the “neural dust”) that detect signals within your grey matter, specifically within your cortex. Second, an ultrasound transceiver installed between your cranium and skin, which monitors the dust and also powers it: the particles would be powered piezoelectrically, meaning that they would transform sound waves from the ultrasound transceiver into electrical signals. Finally, a larger node on the surface of your head supplies the battery power, data-processing, and the ability to transmit data to a nearby receiver.

Scientists have been experimenting with brain-embedded sensors for decades. But the idea of a whole fleet of micro-sensors that can be injected (or snorted!) inside the ol’ brain box is a new development. In one way, it’s ominous, hinting at the idea of a kind of surveillance technology so tiny, you wouldn’t even be cognisant of it. But that’s an extreme version of how neural dust could be used. It could also provide an interface for the disabled to interact with the world, or a monitoring system for neurology patients — a step towards a truly “invisible” brain-computer interface.

The concept of the brain-computer interface has, technically, been around since the invention of the EEG machine, but it took off in the 1970s when University of Washington School of Medicine researchers hooked monkeys up to a biofeedback meter that let the primates control a robotic arm with their thoughts. The basic gist of the technology is this: every time a neuron fires, a tiny bit of electricity escapes from the otherwise well-insulated pathway it’s travelling along. Those leaked signals are what scientists can detect from outside your cranium, and they’ve gotten fairly good at decoding them. That’s how technologies like following to say:

The current brain technologies are like trying to listen to a conversation in a football stadium from a blimp. To really be able to understand what is going on with the brain today you need to surgically implant an array of sensors into the brain.

So the biggest challenge for brain-computer interfaces (BCI) right now is whittling down the size of that array so that it doesn’t permanently damage the brain. One proposed answer is an army of microbe-sized sensors that, together, could transmit and receive data at much higher resolution. In other words, neural dust. [Neural Dust: An Ultrasonic, Low Power Solution for Chronic Brain-Machine Interfaces via MIT]