ai ethics

-

Google Employees Call On Company To Kick Heritage Foundation Ghoul Off AI Ethics Board

Google announced the formation of a global council on technology ethics last week to some deserved trepidation. Sure, the company had assembled some highly qualified individuals to fill seats on this board, but Google’s track record of following its own internal ethics codes is less than spotless.

-

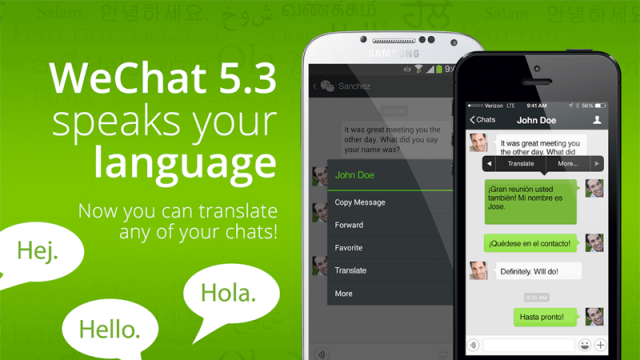

China’s Most Popular App Apologizes After Translating ‘Black Foreigner’ As The N-Word

The translation service in China’s biggest messaging app, WeChat, is being retooled after offering a racist slur as a translation for the phrase “black foreigner.”

-

Google’s DeepMind Launches Ethics Group To Steer AI

The company responsible for AlphaGo, the first AI program to defeat a grandmaster at Go, has launched an ethics group to oversee the responsible development of artificial intelligence. It’s a smooth PR move given recent concerns about super-smart technology, but Google, which owns DeepMind, will need to support and listen to its new…