philosophy of artificial intelligence

-

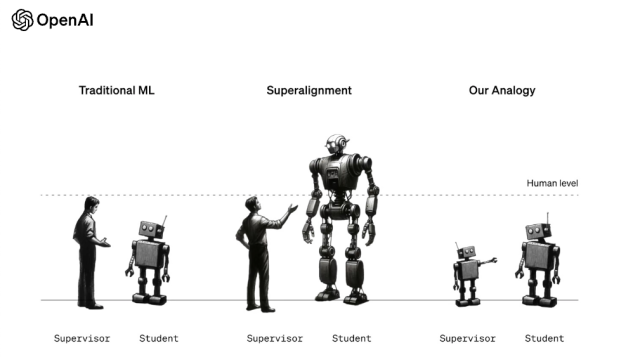

Ilya Sutskever’s Team at OpenAI Built Tools to Control a Superhuman AI

A new research paper published by OpenAI on Thursday says a superhuman AI is coming, and the company is developing tools to ensure it doesn’t turn against humans. OpenAI’s Chief Scientist, Ilya Sutskever, is listed as a lead author on the paper, but not on the accompanying blog post, and his role at the…

-

The UN Wants to Figure Out Just How Dangerous AI Is

The United Nations created a 39-member advisory body to guide the world through governance issues around artificial intelligence, United Nations Secretary-General António Guterres announced. Steve Wozniak, Elon Musk and the world’s 500 top technologists called for a pause on advanced AI systems back in March to develop sufficient safeguards, sounding the alarm to take…

-

DeepMind Co-Founder Wants the ‘New Turing Test’ to Be Based on How Good an AI Is at Getting Rich

With artificial intelligence hype leaving venture capital firms foaming at the mouth, ready to buy into any new company that sticks an “A” and an “I” in its name, we sure as hell need a new way of defining what constitutes an AI. The Turing Test fails to define real intelligence in today’s world of large…

-

Secretive AI Companies Need to Cooperate With Good Hackers to Keep Us All Safe, Experts Warn

A new kind of community is needed to flag dangerous deployments of artificial intelligence, argues a policy forum published today in Science. This global community, consisting of hackers, threat modelers, auditors, and anyone with a keen eye for software vulnerabilities, would stress-test new AI-driven products and services. Scrutiny from these third parties would ultimately “help…