A group of House lawmakers charged with investigating the implications of biometric surveillance empaneled three experts Wednesday to testify about the future of facial recognition and other tools widely employed by the U.S. government with little regard for citizens’ privacy.

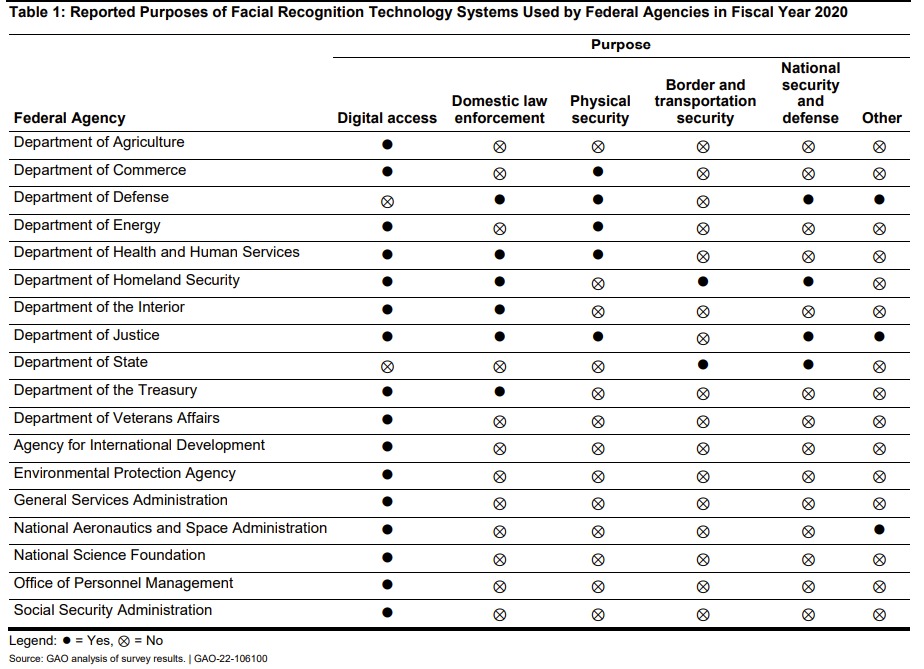

The experts described a country, and a world, that is being saturated with biometric sensors. Hampered by few, if any, real legal boundaries, companies and governments are gathering massive amounts of personal data for the purpose of identifying strangers. The reasons for this collection are myriad and often unexplained. As is nearly always the case, the development of technologies that make surveilling people a cinch is vastly outpacing both laws and technology that could ensure personal privacy is respected. According to the Government Accountability Office (GAO), as many as 18 federal agencies today rely on some form of face recognition, including six for which domestic law enforcement is an explicit use.

Rep. Jay Obernolte, the ranking Republican on the Investigations and Oversight subcommittee, acknowledged that he was initially “alarmed” to learn that, in one survey, 13 out of 14 agencies were unable to provide information about how often their employees used face recognition. Obernolte said that he then realised, “most of those were people using facial recognition technology to unlock their own smartphones, things like that.”

Candice Wright, the director of science, technology assessment, and analytics at the Government Accountability Office, was forced to issue the first of many corrections during the hearing. “The case of where we found agencies didn’t know what their own employees were using, it was actually the use of non-federal systems to conduct facial images searches, such as for law enforcement purposes,” she told Obernolte.

In those cases, she said, “what was happening is perhaps the folks at headquarters didn’t really have a good sense of what was happening in the regional and local offices.”

In his opening remarks, Rep. Bill Foster, chair of the Investigations and Oversight subcommittee, said overturning Roe had “substantially weakened the Constitutional right to privacy,” adding that biometric data would prove a likely source of evidence in cases against women targeted under anti-abortion laws.

“Biometric privacy enhancing technologies can and should be implemented along with biometric technologies,” Foster said, pointing to an array of tools designed to help obfuscate personal data.

Dr. Arun Ross, a Michigan State professor and noted machine learning expert, testified that huge leaps over the past decade in artificial neural networks had ushered in a new age of biometric dominance. There is a growing awareness among academic researchers, he said, that no biometric tool should be considered viable today unless its negative effect on privacy can be quantified.

In particular, Ross warned, there have been rapid advancements in artificial intelligence that have led to the creation of tools capable of sorting humans based solely on their physical characteristics: age, race, sex, and even health-related cues. Like mobile phones before it, nearly all of which are equipped with some form of biometrics today, biometric surveillance has become virtually omnipresent overnight, applied to everything from customer service and bank transactions to border security points and crime scene investigations.

House lawmakers, at times, seemed unfamiliar with not only the laws and procedures relevant to the government’s use of biometric data, but the widespread use of face recognition by federal employees on an ad-hoc basis, absent any hint of federal oversight.

Obernolte followed up by asking if federal agencies accessing privately-owned face recognition databases had to go through the typical procurement process, a potential chokepoint that regulators could home in on to implement safeguards. Reiterating her agency’s findings, which had already been submitted to the panel, Wright explained that federal employees were regularly tapping into state and local law enforcement databases. These databases are owned by private companies with which their respective agencies have no ties.

In some cases, she added, access is obtained through “test” or “trial” accounts that are freely passed out by private surveillance firms eager to ensnare a new client.

Law enforcement misuse of confidential databases is a notorious issue, and facial recognition is only the latest surveillance technology to be placed in the hands of police officers and federal agents without anyone looking over their shoulders. Police have abused databases to stalk neighbours, journalists, and romantic partners, as have government spies. And concerns have only escalated with the rollback of Roe v. Wade due to fears that women seeking medical care are the next to be targeted. Sen. Ron Wyden has voiced similar concerns.

Obernolte, meanwhile, pressed on with the idea of adopting different mindsets when it comes to biometric data used to verify one’s own identity versus surveillance technologies used to identify others. Dr. Charles Romine, director of information technology at the National Institute of Standards and Technology, or NIST, said that Obernolte had hit the issue on the head, “in the sense that the context of use is critical to understanding the level of risk.”

NIST, an agency comprised of scientists and engineers charged with standardising parameters for “everything from DNA to fingerprint analysis to energy efficiency to the fat content and calories in a jar of peanut butter,” is working through the introduction of guidelines to influence new thinking around risk management, Romine said. “Privacy risk hasn’t been included typically in that, so we’re giving organisations the tools now to understand that data gathered for one purpose, when it’s translated to a different purpose — in the case of biometrics — can have a completely different risk profile associated with it.”

Rep. Stephanie Bice, a Republican member, questioned the GAO over whether laws currently exist requiring federal agencies to track their own use of biometric software. Wright said there was already a “broad privacy framework” in place, including the Privacy Act, which applies limits to the government’s use of personal information, and the E-Government Act, which requires federal agencies to perform privacy impact assessments on the systems they’re using.

“Do you think it would be helpful for Congress to look at requiring these assessments to be done maybe on a periodic basis for agencies that are utilising these types of biometrics?” Bice asked.

“So again, the E-Government Act calls for agencies to do that, but the extent to which they’re doing that really varies,” Wright replied.

Over the course of a year, the GAO published three reports related specifically to the government’s use of face recognition. The last was released in September 2021. Its auditors found that the adoption of face recognition technology was widespread, including by six agencies whose focus is domestic law enforcement. Seventeen agencies reported that they owned or had collectively accessed up to 27 separate federal face-recognition systems.

The GAO also found that as many as 13 agencies had failed to track the use of face recognition when the software was owned by a non-federal entity. “The lack of awareness about employees’ use of non-federal [face recognition technology] can have privacy implications,” one report states, “including a risk of not adhering to privacy laws or that system owners may share sensitive information used for searches.”

The GAO further reported in 2020 that U.S. Customs and Border Protection had failed to implement some of its mandated privacy protections, including audits that were only sparingly conducted. “CBP had audited only one of its more than 20 commercial airline partners and did not have a plan to audit all its partners for compliance with the program’s privacy requirements,” it said.

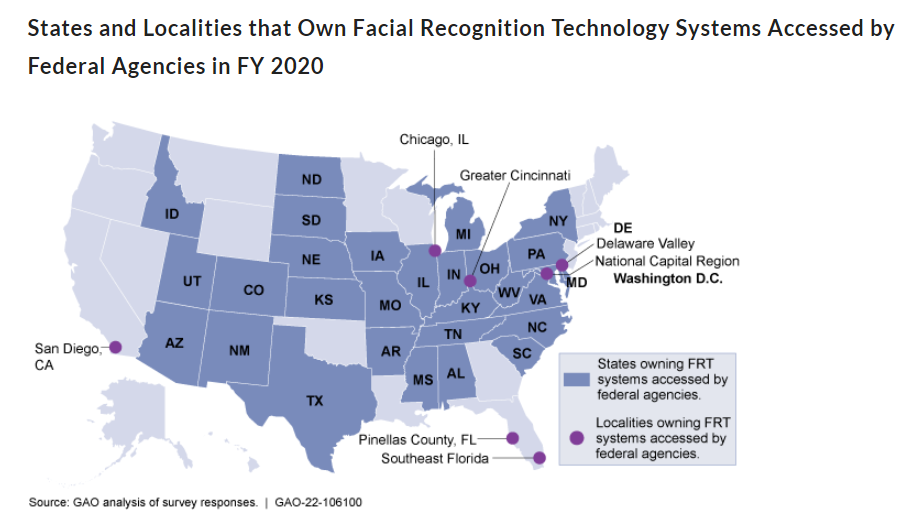

The agency also produced the first map highlighting known states and cities in which federal agents have acquired access to face recognition systems that operate outside of the federal government’s jurisdiction.

Dr. Ross, the academic, outlined a number of practices and technologies that, in his mind, were necessary before biometric privacy could be realistically assured. Encryption schemes, such as homomorphic encryption, for instance, will be necessary to guarantee that underlying biometric data “is never revealed.” NIST’s expert, Romine, noted that, while cryptography has a lot of potential as a means of safeguarding biometric data, a lot of work remains before it can be considered “significantly practical.”

“There are situations in which even with an obscured database, through encryption that’s queryable, if you provide enough queries and have a machine learning backend to take a look at the responses, you can begin to infer some information,” said Romine. “So we’re still in the process of understanding the specific capabilities that encryption technology, such as homomorphic encryption, can provide.”

Ross also called for the advancement of “cancellable biometrics,” a method of using mathematical functions to create a distorted version of — for example — a person’s fingerprint. If the distorted image gets stolen, it can be immediately “cancelled” and replaced by another image distorted in another, unique way. A system in which original biometric data needn’t be widely accessible across multiple applications is, theoretically, far safer in terms of both risk of interception and fraud.
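To make the idea concrete, here is a minimal sketch of one textbook way to build a revocable template: a seeded random projection of a feature vector. It is an illustration under stated assumptions, not the method Ross described or any deployed system. The feature vector is synthetic (a stand-in for the output of a real fingerprint or face feature extractor), and the names `make_transform`, `protect`, and `cosine`, the dimensions, and the seeds are all hypothetical.

```python
import math
import random

def make_transform(seed, in_dim, out_dim):
    """Build a random projection matrix from a seed (the revocable 'key')."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(in_dim)] for _ in range(out_dim)]

def protect(features, transform):
    """Distort a feature vector; only this distorted template would be stored."""
    return [sum(w * x for w, x in zip(row, features)) for row in transform]

def cosine(a, b):
    """Cosine similarity between two templates."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

IN_DIM, OUT_DIM = 128, 64  # assumed sizes; projecting down discards information

# Synthetic stand-in for features from a real biometric extractor (assumption).
data_rng = random.Random(0)
enrolled = [data_rng.random() for _ in range(IN_DIM)]
# A later capture of the same person: the same features plus small sensor noise.
probe = [x + data_rng.gauss(0.0, 0.02) for x in enrolled]

# Enrolment and verification both use the transform issued under seed 1.
key_v1 = make_transform(seed=1, in_dim=IN_DIM, out_dim=OUT_DIM)
template_v1 = protect(enrolled, key_v1)
same_key_score = cosine(template_v1, protect(probe, key_v1))

# If template_v1 is stolen, revoke it: issue a new seed and re-enrol.
key_v2 = make_transform(seed=2, in_dim=IN_DIM, out_dim=OUT_DIM)
template_v2 = protect(enrolled, key_v2)
cross_key_score = cosine(template_v1, template_v2)

print("same key, same person:", round(same_key_score, 3))
print("stolen old template vs new template:", round(cross_key_score, 3))
```

In this toy setup the same person matches closely under the same seed, while the stolen template bears little resemblance to the reissued one, even though both come from the same underlying features. A plain random projection is not claimed to be hard to invert if the seed leaks; real schemes add further protections that this sketch omits.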

One of the biggest threats, Ross contended, is allowing biometric data to be reused across multiple systems. “Legitimate concerns have been expressed,” he noted, about using face datasets scraped from the open web. Ethical questions surrounding the use of social media images without consent by companies like Clearview AI, which is now being used to help identify enemy combatants in a war zone, are compounded by the risks associated with allowing the same personal data to be vacuumed time and again by an endless stream of biometric products.

Making it more difficult for face images to be scraped from public websites will be key, Ross said, to creating an environment in which biometric systems can exist and privacy is still reasonably respected.

Lastly, new camera technologies would have to advance and be widely adopted with the aim of making recorded images both uninterpretable to the human eye (a sort of visual encryption) and exclusively applicable to the programs for which they are captured. Such cameras could be deployed, particularly in public spaces, Ross said, so that “acquired images are not viable for any previously unspecified purposes.”