Each passing day allegedly brings us closer to the virtual reality cosmopolis known as the metaverse. The bad news is that prominent voices in tech increasingly reference the “virtual world created by an evil monopolist” in the Neal Stephenson novel Snow Crash in the same breath as the metaverse; the worse news is that Meta CEO Mark Zuckerberg’s latest vision of the virtual hangout space reportedly includes the phrase “robot skin.”

In a blog post on Monday, the AI division of Meta (the company formerly known as Facebook) announced that it has teamed up with Carnegie Mellon University in Pennsylvania to develop a lightweight, tactile-sensing robot “skin” that it says will help researchers advance AI’s sense of touch as the metaverse is built.

“When you’re wearing a Meta headset, you also want some haptics to be provided so users can feel even richer experiences,” Meta research scientist Abhinav Gupta said on a call with press, according to CNBC. “How can you provide haptic feedback unless you know what kind of touch humans feel or what are the material properties and so on?”

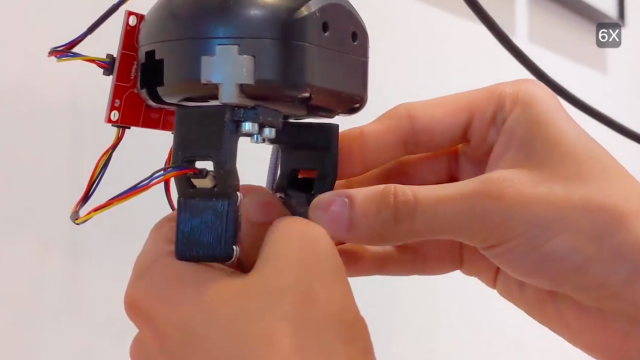

Known as ReSkin, the new open-source “skin” would supply touch data to AI researchers, who “struggle to exploit richness and redundancy from touch sensing the way people do,” so that they can incorporate touch into their models. According to Meta, the skin would use machine learning and magnetic sensing to autocalibrate its own sensor. The result would be a generalizable skin that can share data between systems, essentially solving the problem of having to train a new skin every time one is replaced.

That problem, it turns out, is a big one when it comes to producing soft materials at massive scale, particularly because natural manufacturing variations make it difficult to carry out other tactile-sensing experiments with any kind of reliable uniformity. But ReSkin, according to Meta, would account for those inconsistencies in three ways: by having the skin transmit data magnetically rather than electronically, by drawing on data from multiple sensors, and by using self-supervised learning models so that the sensors can calibrate themselves independently.

“Robust tactile sensing is a significant bottleneck in robotics,” Lerrel Pinto, an assistant professor of computer science at NYU, told Meta. “Current sensors are either too expensive, offer poor resolution, or are simply too unwieldy for custom robots. ReSkin has the potential to overcome several of these issues. Its lightweight and small form factor makes it compatible with arbitrary grippers, and I’m excited to further explore applications of this sensor on our lab’s robots.”

ReSkin, which would be about 2-3 mm thick and could be used for more than 50,000 interactions, also has the bonus of being relatively inexpensive to produce: roughly $US6 (A$8) each at 100 units (and even less at higher quantities).

ReSkin’s tactile-sensing features make it particularly well suited to tasks that the human hand is adept at, like “using a key to unlock a door or to grasp delicate objects, like grapes or blueberries.” When our robot overlords come for us, at least they’ll be gentle.