Everyone, please dramatically slow clap: YouTube says it will finally ban videos that lie about the effectiveness or nonexistent dangers of any vaccine, not just clips focused on covid-19 vaccines, and it has terminated the accounts of prominent antivaxxers like Robert F. Kennedy Jr.’s Children’s Health Defense and osteopathic physician Joseph Mercola.

In October 2020, YouTube announced a ban on videos spreading unfounded claims that the coronavirus vaccines didn’t work or were dangerous, such as that they cause autism or that one swelled up your cousin’s friend’s balls so badly his fiancée dumped him. Astute readers will note that October 2020 was an extremely long time after antivax conspiracy theories and misinformation had become an obvious problem on the platform. YouTube didn’t ban the same type of hoax content about other vaccines at the time, and the site continues to be rife with antivax videos (though some of the most prolific offenders have fled to greener pastures like right-wing competitor Rumble, and in general YouTube has made significant strides in not actively recommending antivax content to users).

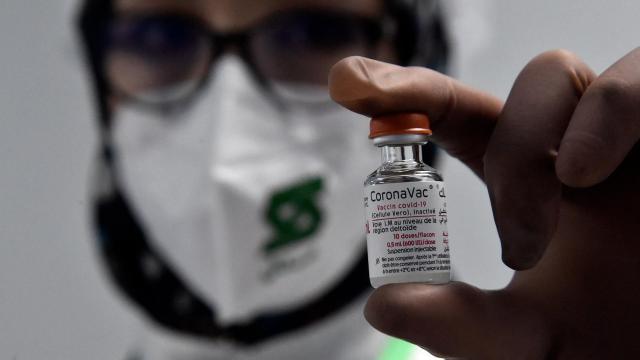

The modern antivax movement is one of the reasons that vaccination campaigns in the U.S. and elsewhere have slowed. While polls have shown the minority of Americans opposed to covid-19 vaccines is shrinking, antivax influencers have carved out small empires on social media. The movement is ideologically diverse but has deep roots in anti-government conspiracism and is increasingly converging with the far right.

Matt Halprin, YouTube’s vice president of global trust and safety, explained to the Washington Post that it just took so gosh-darn long to update the policy because the company was really focused on fighting misinformation about coronavirus vaccines specifically, and also it had to agree on the wording.

“Developing robust policies takes time,” Halprin told the Post. “We wanted to launch a policy that is comprehensive, enforceable with consistency and adequately addresses the challenge.”

According to a YouTube blog post, the company now bans:

“Specifically, content that falsely alleges that approved vaccines are dangerous and cause chronic health effects, claims that vaccines do not reduce transmission or contraction of disease, or contains misinformation on the substances contained in vaccines will be removed. This would include content that falsely says that approved vaccines cause autism, cancer or infertility, or that substances in vaccines can track those who receive them. Our policies not only cover specific routine immunizations like for measles or Hepatitis B, but also apply to general statements about vaccines.”

Great! This is also very close to what it announced about coronavirus vaccines last year, but apparently it takes a year to adjust a policy to remove the word “coronavirus.”

YouTube will still allow videos in which people share “personal testimonials” about vaccination, “so long as the video doesn’t violate other Community Guidelines, or the channel doesn’t show a pattern of promoting vaccine hesitancy.”

A September 2020 study by the Oxford Internet Institute and Reuters Institute, partially covering the period between October 2019 and June 2020, found that YouTube videos with misleading or false content about coronavirus vaccines had been shared 20 million times on Facebook, Twitter, and Reddit, outranking news sites like CNN, ABC News, BBC, Fox News, and Al Jazeera. Interestingly, the primary fuel for the fire seemed to be shares of the videos on Facebook, which had lax rules about supposedly organic antivax content and was slow to expand its rules against antivaxxers. This illustrates the interconnected nature of platforms: YouTube might stop recommending a video or downrank it heavily in search results, but it can still rack up ample views if the link is widely shared elsewhere. (A survey released in July 2021 found that people who primarily got their news from Facebook were inordinately likely to oppose vaccination, surprising no one.)

“For a long time, the companies tolerated that because they were like, ‘Who cares if the Earth is flat, who cares if you believe in chemtrails?’ It seemed harmless,” Hany Farid, a University of California at Berkeley professor specializing in research on misinformation, told the Post. “The problem with these conspiracy theories that maybe seemed goofy and harmless is they have led to a general mistrust of governments, institutions, scientists and media, and that has set the stage of what we are seeing now.”

Antivax content is primarily pushed by a relatively small but extremely determined and often profit-minded segment of users. As the Post noted, until recently, six of the 12 antivaxxers that a Center for Countering Digital Hate report identified as wildly disproportionate in pushing antivax content online “were easily searchable and still posting videos” on YouTube. Mercola, an osteopathic physician who sells alternative health products, and RFK Jr., an environmental activist who has become one of the nation’s leading bloviators on vaccines, were on the list. Others who have lost their accounts include antivaxxers like Erin Elizabeth and Sherri Tenpenny, according to The Verge, while a YouTube spokesperson told the site that others such as Rashid Buttar and Ty and Charlene Bollinger lost their accounts months ago.

Others are likely to follow. On Tuesday, YouTube deleted the German account of RT, a Russian state-backed network that has spread misinformation about the pandemic.