For the first time ever, neuroscientists have translated the cognitive signals associated with handwriting into text in real time. The new technique is more than twice as fast as the previous method, allowing a paralysed man to text at a rate of 90 characters per minute.

Researchers with the BrainGate collaboration have developed a system that could eventually “allow people with severe speech and motor impairments to communicate by text, email, or other forms of writing,” according to Jaimie Henderson, co-director of the Neural Prosthetics Translational Laboratory at Stanford University and a co-author of the new Nature study.

The system uses brain implants and a machine learning algorithm to decode the brain signals produced by thoughts of handwriting and translate them into text in real time, which allowed the paralysed man to text at a rate of 16 words per minute.

The BrainGate consortium has made key contributions to the development of brain-computer interfaces (BCIs) over the years, including a sophisticated brain-controlled robotic arm that was showcased in 2012 and a newly announced high-bandwidth wireless BCI for humans. The project to develop the new handwriting brain-computer interface was led by Frank Willett, a research scientist at Stanford University, and supervised by Krishna Shenoy, a neuroscientist at the Howard Hughes Medical Institute, and Henderson, a neurosurgeon at Stanford.

In 2017, Shenoy and his colleagues developed a thought-to-text system that significantly improved upon earlier techniques, allowing monkeys to text at a rate of 12 words per minute. This research formed the basis of subsequent work that appeared later that year, namely a brain-computer interface that allowed people with paralysis to type at a rate of 40 characters, or roughly eight words, per minute. But as Henderson explained in an email, the “current work goes beyond the 2017 paper by more than doubling the speed of typing by a person with paralysis, and uses an entirely new and different method.”

Indeed, neuroscientists had never previously tried to capture the mental act of handwriting, and the new experiment was explicitly done to see if it would result in a more efficient thought-to-text system.

At the time of the experiment, the lone participant, a 65-year-old man, was 10 years removed from a spinal cord injury that left him paralysed below the shoulders.

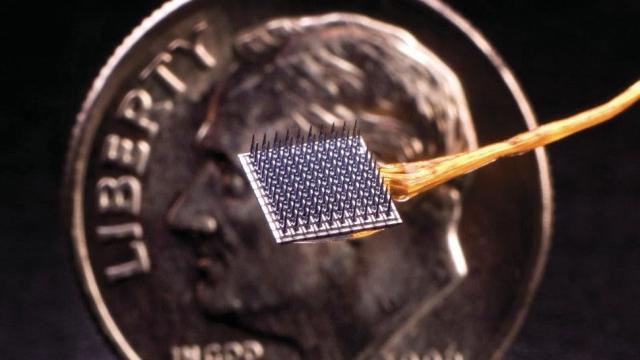

“Two sensors, each measuring 4×4 mm, about the size of a baby aspirin, with 100 hair-fine electrodes, were placed in the outer layers of the brain’s motor cortex — the area that controls movement on the opposite side of the body,” Henderson explained. “These electrodes can record signals from about 100 neurons,” and the resulting signals are “processed by a computer to decode the brain activity associated with writing individual letters.”

During the experiment, the man attempted to move his paralysed hand to write words. He visualised “writing the letters one on top of another with a pen on a yellow legal pad,” while a decoder typed each letter as it was “identified by the neural network,” said Henderson. The team used the “greater than” symbol to denote spaces between words, “since otherwise there would be no way to detect the intent to write a space,” he added.
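To make the space convention concrete, here is a minimal sketch of how a stream of decoded characters could be turned into readable text. It is not the team's software: the function name `render_decoded` and the constant `SPACE_SYMBOL` are hypothetical, and the only detail taken from the account above is that “greater than” stands in for a space.

```python
# Illustrative only: the names below are assumptions, not part of the BrainGate system.
SPACE_SYMBOL = ">"  # the "greater than" symbol marks a space between words


def render_decoded(chars):
    """Join decoded characters into text, replacing the space symbol with a real space."""
    return "".join(" " if c == SPACE_SYMBOL else c for c in chars)


# Characters as they might arrive, one at a time, from a decoder.
decoded = ["h", "e", "l", "l", "o", ">", "w", "o", "r", "l", "d"]
print(render_decoded(decoded))
```

In this sketch the substitution happens only when the text is displayed, so the decoder itself treats the space symbol as one more written character to recognise.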

The system distinguished individual letters with roughly 95% accuracy. Henderson said the rate of 16 words per minute is around three-quarters of the speed typically seen among people over the age of 65 typing on their smartphones.

The results are promising, but the system is not without its limitations. First and foremost, it’s highly invasive, as it requires brain surgery and implants. It’s also not generalisable across individuals, since the system has to learn the cognitive nuances of each and every user. The new approach is also “very computationally intensive,” according to Henderson, requiring a “specialised high-performance computer or a compute cluster.” Finally, a technician is needed to set up the brain-computer interface and run the software.

These limitations notwithstanding, Henderson envisions a fully matured version that’s “wireless, always available, and self-calibrating.” All of these goals are achievable, he said, but reaching them would “require investment of resources that would ideally be provided by a company rather than an academic lab.”

Looking ahead, the team hopes to study the way brains coordinate dexterous movements across multiple limbs and to understand how speech is generated by the brain.