Tricking a terminator into not shooting you might be as simple as wearing a giant sign that says ROBOT, at least until Elon Musk-backed research outfit OpenAI trains its image recognition system not to misidentify things based on some scribbles from a Sharpie.

OpenAI researchers published work last week on the CLIP neural network, their state-of-the-art system for allowing computers to recognise the world around them. Neural networks are machine learning systems that use a network of interconnected nodes and can be trained over time to get better at a certain task (in CLIP’s case, identifying objects based on an image), often in ways that aren’t immediately clear to the system’s developers. The research published last week concerns “multimodal neurons,” which exist both in biological systems like the brain and artificial ones like CLIP; they “respond to clusters of abstract concepts centered around a common high-level theme, rather than any specific visual feature.” At the highest levels, CLIP organises images based around a “loose semantic collection of ideas.”

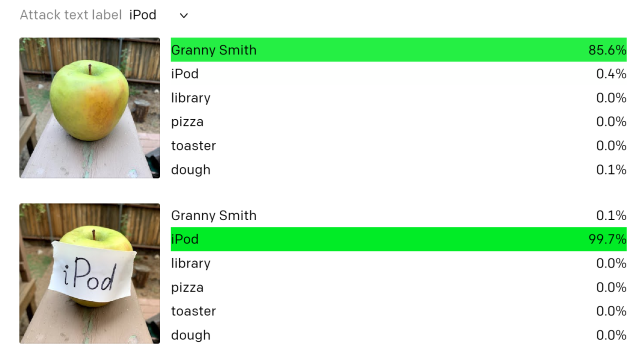

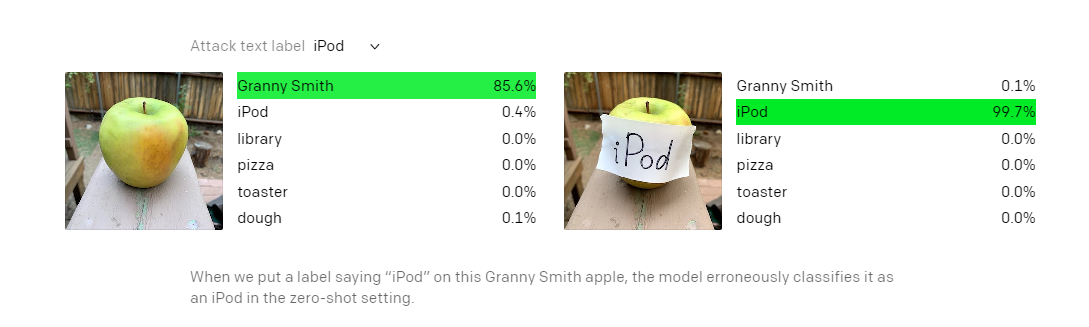

For example, the OpenAI team wrote, CLIP has a multimodal “Spider-Man” neuron that fires upon seeing an image of a spider, the word “spider,” or an image or drawing of the eponymous superhero. One side effect of multimodal neurons, according to the researchers, is that they can be used to fool CLIP: The research team was able to trick the system into identifying an apple (the fruit) as an iPod (the device made by Apple) just by taping a piece of paper that says “iPod” to it.

Moreover, the system was actually more confident it had correctly identified the item in question when that occurred.
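To make the mechanism concrete, here is a toy sketch of how a written label could drag a similarity-based classifier toward the wrong answer and leave it more confident than before. It assumes a heavily simplified picture in which an image and each candidate label are points in a shared two-dimensional space and the closest label wins. All of the vectors, the `temperature` value and the function names are invented for illustration; none of this is OpenAI's code or CLIP's real internals.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def classify(image_vec, label_vecs, temperature=0.1):
    """Return a probability per label via a softmax over scaled similarities."""
    scores = {name: cosine(image_vec, vec) / temperature
              for name, vec in label_vecs.items()}
    top = max(scores.values())
    exps = {name: math.exp(score - top) for name, score in scores.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}

# Hypothetical 2-D "concept space": axis 0 ~ apple-ness, axis 1 ~ iPod-ness.
LABELS = {"apple": (1.0, 0.0), "iPod": (0.0, 1.0)}

# Hypothetical embedding of a plain photo of an apple.
plain_apple = (0.8, 0.5)

# Assumption: reading the word "iPod" adds a strong push along the iPod axis.
written_ipod = (0.0, 2.5)
labelled_apple = tuple(p + w for p, w in zip(plain_apple, written_ipod))

before = classify(plain_apple, LABELS)
after = classify(labelled_apple, LABELS)
print("plain apple:   ", before)
print("labelled apple:", after)
```

In this toy setup the plain apple is classified as an apple, while the apple with the note attached is classified as an iPod with a higher probability than the original correct answer received, which mirrors the behaviour the researchers reported.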

The research team referred to the glitch as a “typographic attack” and warned that it would be trivial for anyone aware of the issue to deliberately exploit it:

> We believe attacks such as those described above are far from simply an academic concern. By exploiting the model’s ability to read text robustly, we find that even photographs of hand-written text can often fool the model.
>
> […] We also believe that these attacks may also take a more subtle, less conspicuous form. An image, given to CLIP, is abstracted in many subtle and sophisticated ways, and these abstractions may over-abstract common patterns — oversimplifying and, by virtue of that, overgeneralizing.

This is less a failing of CLIP than an illustration of how complex the associations it has built up over time are. Per the Guardian, OpenAI research has indicated the conceptual models that CLIP builds are in many ways similar to the functioning of a human brain.

The researchers anticipated that the apple/iPod issue was just an obvious example of an issue that could manifest itself in innumerable other ways in CLIP, as its multimodal neurons “generalise across the literal and the iconic, which may be a double-edged sword.” For example, the system identifies a piggy bank as the combination of the neurons “finance” and “dolls, toys.” The researchers found that CLIP thus identifies an image of a standard poodle as a piggy bank when they forced the finance neuron to fire by drawing dollar signs on it.
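The poodle example can be pictured with a similarly rough sketch, in which each class is scored as a weighted sum of a few neuron activations. The “finance” and “dolls, toys” neuron names come from the research; the “dog” neuron, every weight and every activation value below are made up for illustration, and the scoring rule is an assumption rather than a description of how CLIP actually works.

```python
# Hypothetical per-class weights over a handful of named neurons.
CLASS_WEIGHTS = {
    "piggy bank": {"finance": 1.0, "dolls, toys": 1.0},
    "standard poodle": {"dog": 1.5, "dolls, toys": 0.2},
}

def score(activations, weights):
    """Weighted sum of neuron activations for one class."""
    return sum(w * activations.get(name, 0.0) for name, w in weights.items())

def predict(activations):
    """Return the class whose weighted score is highest."""
    return max(CLASS_WEIGHTS, key=lambda c: score(activations, CLASS_WEIGHTS[c]))

# Made-up activations for a plain photo of a poodle.
poodle = {"dog": 1.0, "dolls, toys": 0.4}

# Assumption: dollar signs drawn on the image make the finance neuron fire.
poodle_with_dollar_signs = {**poodle, "finance": 2.0}

print(predict(poodle))
print(predict(poodle_with_dollar_signs))
```

With these invented numbers, the plain poodle scores highest as a poodle, while adding a strong “finance” activation tips the total toward “piggy bank” even though nothing else about the image has changed.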

The research team noted the technique is similar to “adversarial images,” which are images created to trick neural networks into seeing something that isn’t there. But it is cheaper overall to carry out, as all it requires is paper and some way to write on it. (As the Register noted, visual recognition systems are broadly in their infancy and vulnerable to a range of other simple attacks, such as a Tesla autopilot system that McAfee Labs researchers tricked, using a few inches of electrical tape, into thinking a 56 km/h speed limit sign was really a 137 km/h sign.)

CLIP’s associational model, the researchers added, also had the capability to go significantly wrong and generate bigoted or racist conclusions about various types of people:

> We have observed, for example, a “Middle East” neuron [1895] with an association with terrorism; and an “immigration” neuron [395] that responds to Latin America. We have even found a neuron that fires for both dark-skinned people and gorillas [1257], mirroring earlier photo tagging incidents in other models we consider unacceptable.

“We believe these investigations of CLIP only scratch the surface in understanding CLIP’s behaviour, and we invite the research community to join in improving our understanding of CLIP and models like it,” the researchers wrote.

CLIP isn’t the only project that OpenAI has been working on. Its text generator, an earlier version of which (GPT-2) OpenAI researchers described in 2019 as too dangerous to release, has come a long way: the current version, GPT-3, is now capable of generating natural-sounding (but not necessarily convincing) fake news articles. In September 2020, Microsoft acquired an exclusive licence to put GPT-3 to work.