Today in terrible ideas: an AI system that claims to be able to accurately predict your political affiliation using…your face. Yes, really.

Stanford researcher Michal Kosinski published his research in Scientific Reports, one of the Nature family of journals, earlier this week. You might remember Kosinski's name from spearheading a similar study back in 2017 that claimed to accurately detect a person's sexual orientation based on the way their facial features were aligned. At the time, civil rights groups and fellow academics widely dunked on those findings, calling Kosinski "reckless" for publishing them.

According to a registration docket that Kosinski filed for this new study with the Center for Open Science, he began work on it in September 2017, not long after that first round of outrage began petering out. The paper says Kosinski drew on a sample of a little over a million people's faces, largely from an unnamed "popular dating website" used in the U.S., UK, and Canada. Kosinski's original filing adds that some of these faces were pulled from U.S.-centric Facebook profiles onboarded by MyPersonality, a psychological testing app that Facebook ended up cutting ties with in 2018, citing some pretty poor data practices.

In every case, these users had described themselves as "conservative" or "liberal," either somewhere on that dating site or somewhere on their Facebook profile.

These faces were fed into an open-source facial recognition algorithm that reduced each one to roughly 2,000 data points "subsuming their core features." After feeding enough of these data points into the analysis, Kosinski ended up with an "average" set of points that could be tied to either a liberal- or conservative-leaning face, and an algorithm that could correctly predict a person's political affiliation from their photograph roughly 72% of the time.

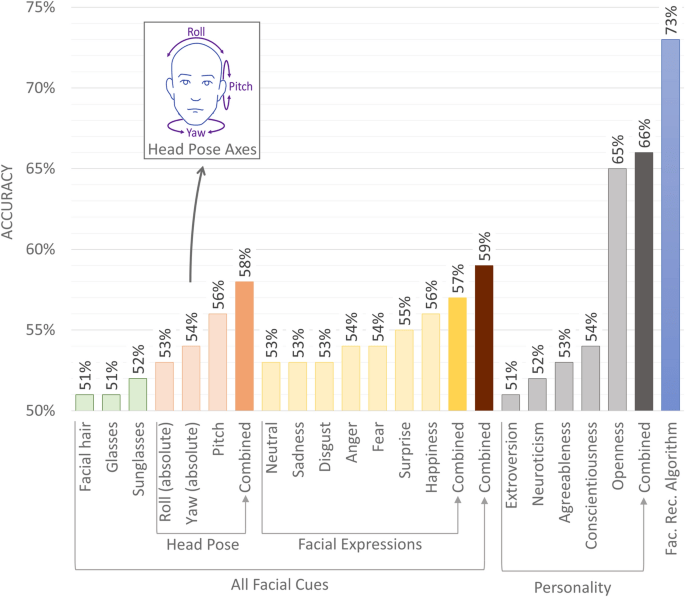
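To make the idea of an "average" set of points a bit more concrete, here is a minimal nearest-centroid sketch in Python: average the descriptor vectors for each self-reported label, then score a new face by which average it sits closer to. This is a simplification under stated assumptions, not Kosinski's actual pipeline. The function names, the tiny 2-D toy vectors, and their labels are all hypothetical stand-ins for the roughly 2,000-value descriptors the facial recognition software produced. The sketch also computes a pairwise accuracy: shown one face from each group, how often does the higher score go to the right one? Headline figures in this kind of research are often reported that way, and on that measure a coin flip scores 50%.

```python
import math
from statistics import fmean


def fit_centroids(descriptors, labels):
    """Average the descriptor vectors that share a label, one centroid per label."""
    groups = {}
    for vector, label in zip(descriptors, labels):
        groups.setdefault(label, []).append(vector)
    # zip(*vectors) walks the vectors column by column, i.e. one feature at a time.
    return {
        label: [fmean(column) for column in zip(*vectors)]
        for label, vectors in groups.items()
    }


def centroid_score(vector, centroids, positive, negative):
    """Positive when the vector sits closer to the `positive` centroid than the `negative` one."""
    return math.dist(vector, centroids[negative]) - math.dist(vector, centroids[positive])


def pairwise_accuracy(scores, labels, positive):
    """Share of (positive, other) pairs in which the positive example scores higher.

    Ties count as half a success, which makes this equal to the ROC AUC:
    random guessing lands at 0.5.
    """
    pos = [s for s, label in zip(scores, labels) if label == positive]
    neg = [s for s, label in zip(scores, labels) if label != positive]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: made-up 2-D vectors standing in for ~2,000-value face descriptors.
descriptors = [[0.0, 0.0], [0.2, 0.1], [1.0, 1.0], [0.9, 1.1]]
labels = ["liberal", "liberal", "conservative", "conservative"]

centroids = fit_centroids(descriptors, labels)
scores = [centroid_score(v, centroids, "conservative", "liberal") for v in descriptors]
print(pairwise_accuracy(scores, labels, "conservative"))
```

On the toy data this prints 1.0, but only because the same four vectors are used both to build the averages and to score them. A fairer test scores faces held out of the averaging step, and that held-out number is what any claim of beating chance has to rest on.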

Exactly what distinguishes a red face from a blue face is kind of hard to define, at least according to the paper. Kosinski attempted to isolate a few individual traits, like whether a person wore glasses in a given photo or how their face was tilted toward the camera, and tested whether each of these could predict that person's political leanings on its own. Suffice it to say, none of these features were as on-target as the black-box algorithm.

That said, Kosinski does describe a few commonalities in his notes discussing the paper. People who self-identify as liberals, for example, were more likely to face the camera directly and to express "surprise" in their pictures. Conservatives, meanwhile, were on the whole whiter, more male, and older, and also expressed more "disgust" in their photos than their liberal counterparts.

But nothing in the author's notes really explains why this study was done in the first place. As VentureBeat points out in its own coverage of the study, the entire thesis of Kosinski's research rests on the pseudoscientific idea known as "physiognomy": that you can suss out a person's entire personality from the way their facial features line up. Psychology researchers have been saying for years that algorithms claiming to classify whether someone might be more likely to be a bank robber, a political scientist, or a hard-nosed Republican based on their face don't actually do much better than chance.

Kosinski’s notes attempt to refute these findings somewhat, saying that “many studies have shown that people can determine others’ political views, personality, sexual orientation, honesty, and many other traits from their faces,” without really… pointing to any studies to back up his point. Maybe it’s because all evidence seems to point in the exact opposite direction.