It’s getting pretty hard to tell what is real and what isn’t these days. Technological advancements are allowing people to create increasingly sophisticated versions of reality. And NVIDIA’s newest piece of software takes this a step further.

Earlier this week, NVIDIA launched the open beta of Omniverse, its cloud platform for three-dimensional design.

There are plenty of nifty features, including a Google Docs-like collaborative editing experience in design software used by architecture, engineering and construction professionals.

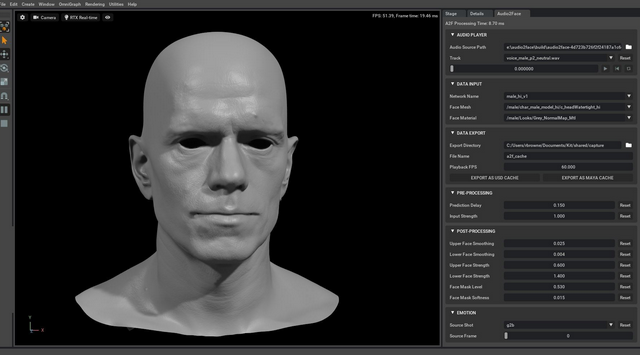

But there’s another feature that is particularly intriguing: NVIDIA’s forthcoming Omniverse Audio2Face.

According to the company, the software uses a “combination of AI based technologies that generates facial motion and lip sync that are derived entirely from an audio source.”

Translated from PR speak, this means that Audio2Face will take a recording and animate a face so that it looks like it’s saying those words. You design a face, and the software moves its ‘muscles’ to make it seem like it’s speaking.

And it can do this on the fly. The NVIDIA software will emulate the correct mouth and face shapes in real time.

This has pretty obvious implications for animation designers — imagine how much easier it would be for people who are creating animated movies, for example — but NVIDIA’s tools have other interesting implications.

In the future, software could generate a simulated version of your face while you’re talking.

This could come in handy when, say, you’re in a situation where your internet connection isn’t good enough to carry full video but is able to transmit audio. People would see ‘your’ face saying the words you’re saying — but it’s not really your face.

The tool is now in early release beta with rather limited features meant to demonstrate the functionality of the program.

But NVIDIA said that it’s expecting to launch the full tool early in 2020. In no time at all, you’ll be creating photorealistic versions of faces to go with audio.