Tech platforms use algorithms to make decisions: what content to show you, which accounts to recommend, even how to preview images on the timeline. In making these decisions, algorithms can, often unintentionally, reflect the biases of the people who write them. That’s the charge against Twitter.

Over the weekend, Twitter user @bascule tweeted an experiment about how Twitter chooses to preview images in the timeline.

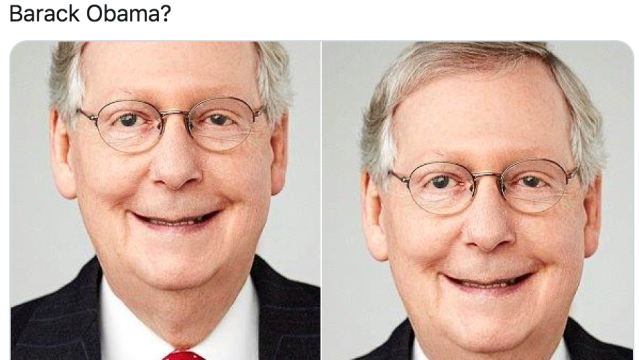

He shared images containing photographs of Barack Obama and Mitch McConnell that were too big to display in full on the timeline. He wanted to see which part of each image the platform would choose to show.

And would you believe it: both times, the preview showed the very white Mitch McConnell. That held even when he swapped the positions of Obama and McConnell or changed the colour of their ties.

Trying a horrible experiment…

Which will the Twitter algorithm pick: Mitch McConnell or Barack Obama? pic.twitter.com/bR1GRyCkia

Tony “Abolish (Pol)ICE” Arcieri (@bascule) September 19, 2020

But when he inverted the photo’s colours, the problem disappeared. Weird, huh? Other users then began running their own experiments to see whether Twitter’s algorithm showed racial bias. Some tried it with the cast of Black Panther.

Holy shit, this thread is a horror show. Made me wonder who Twitter would pick as the star of Black Panther… https://t.co/f68y6nGd9N pic.twitter.com/sIgaK6c4Xm

WEAR A MASK (@JackCouvela) September 20, 2020

Someone even tried it with Lenny and Carl from The Simpsons.

I wonder if Twitter does this to fictional characters too.

Lenny Carl pic.twitter.com/fmJMWkkYEf

Jordan Simonovski (@_jsimonovski) September 20, 2020

How did Twitter respond to allegations of algorithmic racial bias?

Twitter spokesperson Liz Kelly told Gizmodo that the company was investigating the allegations. She also provided evidence that the platform’s image preview did not, as a rule, prefer white faces over black faces.

She pointed to a post from the company’s Chief Design Officer suggesting that other factors may have played a role in which faces the algorithm chose to show. She also cited an analysis by an unaffiliated researcher who found that the algorithm slightly preferred black faces to white faces.

Still, she said the company was going to look into it further.

“Our team did test for bias before shipping the model and did not find evidence of racial or gender bias in our testing,” she wrote in an email.

“But it’s clear from these examples that we’ve got more analysis to do. We’re looking into this and will continue to share what we learn and what actions we take.”

While the way a platform chooses to preview images may not be a huge issue in itself, algorithmic bias remains a significant and ongoing problem for tech platforms. Twitter’s commitment to a transparent investigation is commendable, but it will be little comfort to people of colour and other minority groups whose lives are increasingly affected by powerful and inscrutable algorithms.