Researchers have built a massive dataset of hotel images with the intention of helping law enforcement in human trafficking investigations. The dataset, called Hotels-50k, consists of more than 1 million images from 50,000 hotels globally.

“Recognising a hotel from an image of a hotel room is important for human trafficking investigations,” the researchers wrote in the paper published late last month. “Images directly link victims to places and can help verify where victims have been trafficked, and where their traffickers might move them or others in the future.”

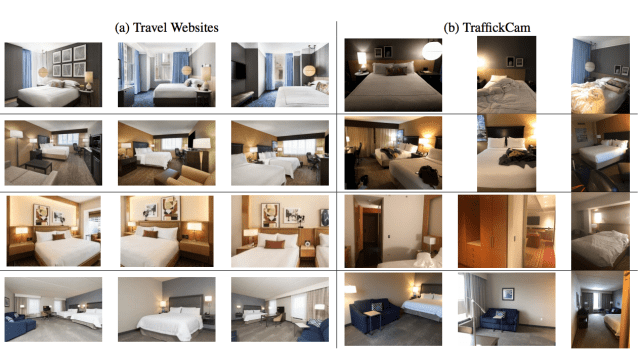

The images included in the dataset come from professional photographs on publicly available travel websites as well as crowdsourced images from the mobile app TraffickCam, which asks users who are travelling to take photos of their hotel rooms and submit them, along with the location, to a database of images for law enforcement.

The researchers, who come from George Washington University, Temple University, and Adobe Research, note that the images gathered from the app more closely align with images used in official investigations because they are taken on “similar devices, at varying orientations, with luggage and other clutter, and without professional lighting.”

Gathering images from investigations “is problematic for many reasons,” they wrote. Each of the 1,027,871 images includes the hotel name, geographic location, and if applicable, the hotel chain.

Since the researchers didn’t include photos from actual human trafficking investigations in the dataset, they needed to make sure the photos scraped from travel websites and the mobile app more closely mirrored them. Abby Stylianou, a PhD student at George Washington University and co-author of the paper, told the Register that they “assume that an investigator will always erase the victim from the photo, leaving a ‘blanked out’ region in the image,” so the researchers generated “people-shaped masks” from MS-COCO, the Microsoft Common Objects in Context dataset. “This encourages the network to learn to produce similar codes for images from the same hotel even if there is a large blanked out shape in the image,” Stylianou said.
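To make the masking step concrete, here is a minimal sketch of how a “blanked out” region could be stamped onto a training image. It is an illustration only, not the researchers’ code: the `blank_out` function, the toy grayscale grid, and the tiny hand-written mask are all assumptions made for this example, whereas the paper’s masks are derived from MS-COCO.

```python
from typing import List

Image = List[List[int]]  # toy grayscale image: rows of pixel values


def blank_out(image: Image, mask: Image, top: int, left: int, fill: int = 0) -> Image:
    """Return a copy of `image` with `fill` written wherever `mask` is 1.

    The mask's upper-left corner is placed at (top, left). Any part of the
    mask that falls outside the image is ignored. Hypothetical helper, not
    taken from the Hotels-50k code.
    """
    out = [row[:] for row in image]  # copy so the original stays untouched
    height = len(image)
    width = len(image[0]) if image else 0
    for r, mask_row in enumerate(mask):
        for c, covered in enumerate(mask_row):
            y, x = top + r, left + c
            if covered and 0 <= y < height and 0 <= x < width:
                out[y][x] = fill
    return out


# A 3x4 "room" of uniform brightness and a tiny stand-in for a person-shaped mask.
room = [[9] * 4 for _ in range(3)]
person = [[0, 1],
          [1, 1]]
augmented = blank_out(room, person, top=1, left=2)
```

Training on pairs like `room` and `augmented`, both labelled with the same hotel, is one plausible way to push a network toward the behaviour Stylianou describes: similar codes for the same hotel whether or not part of the image is blanked out.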

The researchers tested their dataset on two pre-trained “off-the-shelf” neural networks. Their model was able to accurately identify the hotel chains from the images “with nearly 80 per cent accuracy,” according to the Register. But the system had trouble identifying the correct individual hotel—Stylianou told the Register that of the first 100 images, it correctly identified the hotel 24 per cent of the time.

That success rate is far too low for any meaningful use. And leaning on artificial intelligence as a tool to track down traffickers is hardly a novel concept; it’s been floated a number of times. But however well-intended this system is, the approach evidently still has a long way to go before it can serve as an efficient hotel recognition system.

Beyond accuracy, there remain ethical considerations in its deployment. For starters, it’s unclear how such a system would be able to differentiate advertisements for human trafficking from images of consenting, adult sex workers.

This wouldn’t be the first misguided approach to fighting human trafficking to come at the expense of the sex work community. The erasure of online platforms that sex workers depended on to work safely and independently is one example.

Pushing sex workers offline and onto the streets is already resulting in more violence against them and more arrests.

There is also little reassuring evidence that AI surveillance systems can operate free from bias and error or, more troublingly, that law enforcement agencies will limit their use of such systems to the stated purpose, which in this case is to identify hotels and hotel chains involved in human trafficking efforts.

A sheriff’s office in Oregon that uses Amazon’s facial recognition tech, Rekognition, released documents last year indicating that police used the system for more than just its marketed purpose, including to identify “unconscious or deceased individuals” and “possible witnesses.”

While the researchers’ hotel recognition system may be developed with good intentions, it’s important to understand how these powerful databases can not only make mistakes and harm vulnerable populations they were never meant to target, but also be abused by those in power.