In May 2008, Facebook announced what initially seemed like a fun, whimsical addition to its platform: People You May Know.

“We built this feature with the intention of helping you connect to more of your friends, especially ones you might not have known were on Facebook”, said the post.

It went on to become one of Facebook’s most important tools for building out its social network, which expanded from 100 million members then to over two billion today. While some people must certainly have been grateful to get help connecting with everyone they’ve ever known, other Facebook users hated the feature. They asked how to turn it off.

They downloaded a “FB Purity” browser extension to hide it from their view. Some users complained about it to the US federal agency tasked with protecting American consumers, saying it constantly showed them people they didn’t want to friend. Another user told the Federal Trade Commission that Facebook wouldn’t stop suggesting she friend strangers “posed in sexually explicit poses”.

In an investigation last year, we detailed the ways People You May Know, or PYMK, as it’s referred to internally, can prove detrimental to Facebook users. It mines information users don’t have control over to make connections they may not want it to make. The worst example of this we documented is when sex workers are outed to their clients.

When lawmakers recently sent Facebook over 2,000 questions about the social network’s operation, Senator Richard Blumenthal (D-Conn.) raised concerns about PYMK suggesting a psychiatrist’s patients friend one another and asked whether users can opt out of Facebook collecting or using their data for People You May Know, which is another way of asking whether users can turn it off.

Facebook responded by suggesting the senator see their answer to a previous question, but the real answer is “no”.

Facebook refuses to let users opt out of PYMK, telling us last year, “An opt out is not something we think people would find useful”. Perhaps now, though, in its time of privacy reckoning, Facebook will reconsider the mandatory nature of this particular feature. It’s about time, because People You May Know has been getting on people’s nerves for over 10 years.

Facebook didn’t come up with the idea for PYMK out of thin air. LinkedIn had launched People You May Know in 2006, originally displaying its suggested connections as ads that got the highest click-through rate the professional networking site had ever seen. Facebook didn’t bother to come up with a different name for it.

“People You May Know looks at, among other things, your current friend list and their friends, your education info and your work info”, Facebook explained when it launched the feature.

That wasn’t all. Within a year, Adweek was reporting that people were “spooked” by the appearance of “people they emailed years ago” showing up as “People They May Know”. When these users had first signed up for Facebook, they were prompted to connect with people already on the site through a “Find People You Email” function; it turned out Facebook had kept all the email addresses from their inboxes.

That was disturbing because Facebook hadn’t disclosed that it would store and reuse those contacts. (According to the Canadian Privacy Commissioner, Facebook only started providing that disclosure after the Commission investigated it in 2012.)

Though Facebook is now upfront about using uploaded contacts for PYMK, its then-chief privacy officer, Chris Kelly, refused at the time to confirm it was happening.

“We are constantly iterating on the algorithm that we use to determine the Suggestions section of the home page”, Kelly told Adweek in 2009. “We do not share details about the algorithm itself”.

Address books were so valuable to Facebook in its early days that one of the first companies it acquired, at the beginning of 2010, was Malaysia-based Octazen, a contact importing service that had been used, until its acquisition by Facebook, to tap into user contacts on the world’s biggest social and email sites.

In a TechCrunch post at the time, Michael Arrington suggested that acquiring a tiny start-up on the other side of the world only made sense if Octazen had been secretly keeping users’ contact information from all the sites it worked with to build a “shadow social network”.

That would have been incredibly valuable to a then-fledgling Facebook, but Facebook dismissed the unsupported claim, saying that it just needed a couple of guys who could quickly help it build tools to suck up contacts from novel services as it expanded into new countries.

That was important because to be the best social network it could be, Facebook needed to develop a list of everyone in the world and how they were connected. Even if you don’t give Facebook access to your own contact book, it can learn a lot about you by looking through other people’s contact books.

If Facebook sees an email address or a phone number for you in someone else’s address book, it will attach it to your account as “shadow” contact information that you can’t see or access.

That means Facebook knows your work email address, even if you never provided it to Facebook, and can recommend you friend people you’ve corresponded with from that address. It means when you sign up for Facebook for the very first time, it knows right away “who all your friends are”.

And it means that exchanging phone numbers with someone, say at an Alcoholics Anonymous meeting, will result in your not being anonymous for long.
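The mechanism described above can be illustrated with a minimal sketch. It is not Facebook’s implementation: the function names, the sample address books and the `.example` addresses are all hypothetical, and the only assumption is that uploaded contact details are indexed by value so they can be matched to whoever later turns out to own them.

```python
from collections import defaultdict


def build_shadow_index(address_books):
    """Map each contact detail to the set of users whose uploaded books contain it."""
    index = defaultdict(set)
    for uploader, contacts in address_books.items():
        for detail in contacts:
            # Normalise so "Dana@work.example" and "dana@work.example" match.
            index[detail.strip().lower()].add(uploader)
    return index


def suggestions_for_new_user(known_details, index):
    """Return uploaders who already hold any of the new user's contact details."""
    found = set()
    for detail in known_details:
        found |= index.get(detail.strip().lower(), set())
    return sorted(found)


# Hypothetical uploads: Dana has never given the service her work address.
address_books = {
    "alice": ["Dana@work.example", "+1-555-0100"],
    "bob": ["dana@work.example"],
    "carol": ["+1-555-0199"],
}
shadow_index = build_shadow_index(address_books)

# The moment Dana signs up, her address is already linked to two people.
new_user_matches = suggestions_for_new_user(["dana@work.example"], shadow_index)
print(new_user_matches)
```

The point of the sketch is that the new user supplies nothing beyond a single address; every link comes from other people’s uploads, which is why she cannot see or remove it.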

Smartphone behemoth Apple seems to have only recently realised how valuable address books are and how easily they can be abused by nefarious actors. In a Bloomberg report, an iOS developer called address books “the Wild West of data”.

In June, Apple changed its rules for app developers to forbid accessing iPhone contacts “to build a contact database for your own use.” Apple didn’t respond to a request for comment about whether Facebook’s collection of contact information for its People You May Know database violates that rule.

In 2010, Ellenora Fulk of Pierce County, Washington, saw a woman she didn’t recognise pop up in her People You May Know. In the accompanying profile photo, the woman was with Fulk’s estranged husband, standing next to a wedding cake and drinking champagne. After Fulk alerted the authorities, her husband, a corrections officer who had changed his last name, was charged with bigamy.

He was sentenced to one year in gaol, but was able to suspend the sentence by paying a $US500 ($673) “victim compensation” fee, presumably to wife #1. Both marriages were ended, the first in divorce and the second in annulment. PYMK takes casualties.

Early on, Facebook realised there were some connections between people that it shouldn’t make. A person familiar with the People You May Know team’s early work said that as it was perfecting the art of linking people, there was one golden rule: “Don’t suggest the mistress to the wife”.

One of the primary ways PYMK systems figure out who knows each other is through “triangle-closing”, as LinkedIn put it in a blog post: “If Alice knows Bob and Bob knows Carol, then maybe Alice knows Carol”. But that can get awkward if you are making those connections by looking at a person’s private contact list rather than at their public friend list.

Bob might have phone numbers for both Alice and Carol in his phone because Alice is his wife and Carol is his side piece. Bob doesn’t want that particular triangle to close, so Facebook’s engineers initially avoided making suggestions that relied solely on “two hops” through a contact book.
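A rough sketch of triangle-closing shows why the source of the edges matters. This is an illustration under assumed names (`close_triangles`, `suggest` and the `allow_contact_only` flag are hypothetical), not LinkedIn’s or Facebook’s code. The same routine is run on a public friend graph and on a private contact book, and a guard mirrors the early rule of not relying solely on “two hops” through a contact book.

```python
from itertools import combinations


def close_triangles(graph):
    """Suggest pairs that share a neighbour but are not linked to each other."""
    suggestions = set()
    for middle, neighbours in graph.items():
        for a, c in combinations(sorted(neighbours), 2):
            if c not in graph.get(a, set()):
                suggestions.add((a, c))
    return suggestions


def suggest(friend_graph, contact_books, allow_contact_only=False):
    """Combine both sources; contact-only triangles are off unless enabled."""
    public = close_triangles(friend_graph)
    if allow_contact_only:
        return public | close_triangles(contact_books)
    return public


# Bob's phone holds numbers for Alice and Carol; nobody is friends on the site.
friend_graph = {}
contact_book = {"Bob": {"Alice", "Carol"}}

cautious = suggest(friend_graph, contact_book)
aggressive = suggest(friend_graph, contact_book, allow_contact_only=True)
print(cautious, aggressive)
```

With the guard on, no suggestion is made. With it off, Alice is suggested to Carol purely because both appear in Bob’s private contact list, which is exactly the triangle Bob would not want closed.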

Despite hiccups like the Fulk incident, People You May Know was batting it out of the park. During a presentation in July 2010, the engineer in charge of PYMK said it was responsible for “a significant chunk of all friending on Facebook”. That was important because “people with more friends use the site more”, according to the 2010 presentation by Lars Backstrom, who went on to become the head of engineering for all of Facebook.

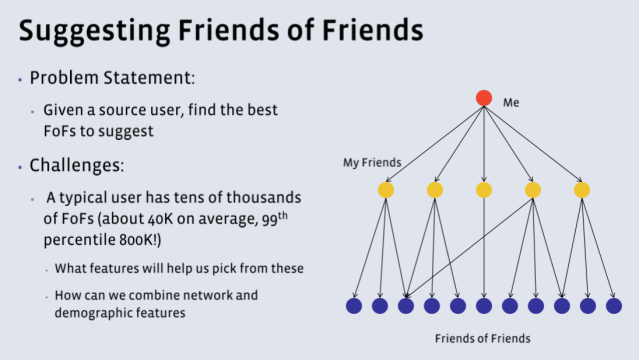

Backstrom got his PhD from Cornell, where he studied how social networks evolve. When he joined Facebook in 2009, he got the chance to control the evolution. Backstrom built “the PYMK backend infrastructure and machine learning system”. Backstrom explained in his 2010 talk how the PYMK algorithm decided which “friends of friends” to put in your “People You May Know” box: Facebook looked at not just how many mutual friends you had, but how recently those friendships were made and how invested you were in them.

That all got converted into maths. In engineering language, a person is a “node” and a friendship between people is an “edge”. If you appear to be in a clustered node with someone else — i.e., have a lot of mutual friends — and all the edges are fresh — i.e., a lot of those friendships are recent — that is like an algorithmic alarm bell going off, saying that a new clique has been formed offline and should be replicated digitally on the social network.
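To make the idea of “fresh edges” concrete, here is a toy scoring function. It is a sketch under stated assumptions, not the formula from Backstrom’s talk: the exponential decay, the `half_life_days` parameter, the day-number timestamps and the name `pymk_score` are all invented for illustration, and the “how invested you were” signal is left out entirely.

```python
def pymk_score(user, candidate, edges, now, half_life_days=30.0):
    """Sum a recency weight over every mutual friend of user and candidate.

    edges maps frozenset({a, b}) to the day number the friendship was formed.
    Each mutual friend contributes the product of the two edge weights, so a
    shared friend counts most when both friendships are recent.
    """
    def neighbours(person):
        return {next(iter(pair - {person})) for pair in edges if person in pair}

    def weight(a, b):
        age_days = now - edges[frozenset({a, b})]
        return 0.5 ** (age_days / half_life_days)  # halves every half_life_days

    score = 0.0
    for mutual in neighbours(user) & neighbours(candidate):
        score += weight(user, mutual) * weight(candidate, mutual)
    return score


# Hypothetical data: two candidates, each sharing two mutual friends with "you".
edges = {
    frozenset({"you", "m1"}): 95, frozenset({"new", "m1"}): 99,
    frozenset({"you", "m2"}): 98, frozenset({"new", "m2"}): 100,
    frozenset({"you", "m3"}): 5, frozenset({"old", "m3"}): 0,
    frozenset({"you", "m4"}): 10, frozenset({"old", "m4"}): 2,
}
fresh = pymk_score("you", "new", edges, now=100)
stale = pymk_score("you", "old", edges, now=100)
print(fresh > stale)
```

Both candidates have the same number of mutual friends, but the one whose friendships all formed in the past week scores far higher, which is the “alarm bell” behaviour described above.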

But just having friends in common doesn’t mean that you necessarily want to be friends with someone. In 2015, Kevin Kantor recounted in spoken poetry how painful it was to have his rapist show up as a “person you should know”. He and his rapist had three mutual friends.

The same year, a woman whom I will call Flora, to protect her anonymity, went on a first date with a guy she met via a dating app. Flora doesn’t like new, strange men to know too much about her, so she only tells them her nickname. She was happy about that in this case, because things immediately turned sour with the guy and he began to harass her via text, sending her messages repeatedly for months which she ignored.

In the spring of 2016, about a year after she first met him, he sent her a message revealing he now knew her real name because she had been suggested to him as a “person he may know” on Facebook.

When you start aggressively mining people’s social networks, it’s easy to surface people we know that we don’t want to know.

In the summer of 2015, a psychiatrist was meeting with one of her patients, a 30-something snowboarder. He told her that he’d started getting some odd People You May Know suggestions on Facebook, people who were much older than him, many of them looking sick or infirm. He held up his phone and showed her his friend recommendations which included an older man using a walker. “Are these your patients?” he asked.

The psychiatrist was aghast because she recognised some of the people. She wasn’t friends with her patients on Facebook and in fact barely used it, but Facebook had figured out that she was a link between this group of individuals, probably because they all had her contact information; based apparently on that alone, Facebook seemed to have decided they might want to be friends.

“It’s a massive privacy fail”, the psychiatrist told me at the time.

In 2016, a man was arrested for car robbery after he was suggested to his victim as a Facebook friend. How that connection was made, if it wasn’t just a coincidence, is inexplicable.

In his 2010 presentation, Lars Backstrom said it would be near impossible for Facebook to suggest more than “Friends of Friends” as People You May Know. Yet he showed a graph that demonstrated that a good number of friendships on Facebook were between people who had no obvious tie. There was no path between them, even if you did a network analysis that allowed for 12 degrees of Kevin Bacon.

To be able to predict connections between people where the “paths” weren’t obvious, Facebook would need more data. And since then, it has developed new avenues to learn more about its users. It bought Instagram in 2012 and can now use information about whose photos you care about to recommend friends. In 2014, it bought WhatsApp, which would theoretically give it direct insight into who messages whom.

Facebook says it doesn’t currently use information from WhatsApp for People You May Know, though a close read of its privacy policy shows that it’s given itself the right to do so: “Facebook … may use information from us to improve your experiences within their services such as making product suggestions (for example, of friends or connections, or of interesting content)”.

Facebook continues to seek out novel ways to better get to know its users, reportedly seeking data from hospitals and from banks. And as more and more people downloaded Facebook’s apps to their smartphones, Facebook engineers realised that offered a well of valuable data for PYMK.

In 2014, Facebook filed a patent application for making friend recommendations based on detecting that two smartphones were in the same place at the same time; it said you could compare the accelerometer and gyroscope readings of each phone, to tell whether the people were facing each other or walking together.

Facebook said it hasn’t put that technique into practice and, despite persistent claims to the contrary, says that it doesn’t use location derived from people’s phones or IP addresses to make friend suggestions.

In 2015, an engineer suggested in a patent application that Facebook could look at photo metadata, such as the presence of dust on the camera lens, to determine if two people had uploaded photos taken by the same camera. That anyone would ever want to be subjected to this level of scrutiny and algorithmic pseudo-science for the sake of a friend recommendation was not addressed by the engineer.

In 2016, North Carolina artist Andy Herod opened a show called Sorry I Made It Weird: Portraits of People You May Know. Herod had painted portraits of 30 strangers whom Facebook had suggested he might know. He didn’t actually know any of them.

“Facebook is such a big part of people’s lives”, said Herod by phone. “They don’t think about the fact that their photos are constantly being popped up into strangers’ homes, through PYMK”.

Herod wanted to put those photos permanently on someone’s walls. An Asheville art collector, who prefers to stay anonymous, bought the bulk of Herod’s series. As it happens, the collector is not a member of the social network; he quit Facebook in 2009 because it was “one big ad space” and a “graveyard of ex girlfriends” — which is how a lot of people might describe their People You May Know.

Quitting Facebook is the obvious answer for users disturbed by the social network’s practices. But for people dependent on Facebook for professional or personal reasons, it’s not an option, so they remain and have to accept that the social network will mine information about them that they can’t see or control to make unwelcome suggestions to them.

That mining is particularly disturbing because it seems Facebook may have abandoned its own golden rule against making friend suggestions based on “two hops” through contact books. Last year, in 2017, Facebook recommended I friend a relative I didn’t know I had.

I could not figure out how Facebook had linked me to Rebecca Porter, a biological great-aunt from an estranged part of my family, because none of the people who linked us were on Facebook. Since then I’ve determined it must be because Facebook drew a long and complicated path between me and a distant relative by analysing information in the contact books of two otherwise disconnected users: Rebecca Porter and my stepmother both had the email address and phone number for another Porter and I am friends with my stepmother on Facebook.

If that is indeed how Facebook made the link, that is some NSA-level network science.

Making connections like that is how you wind up “recommending the mistress to the wife”. An acquaintance of mine recently told me that happened to him, but the gender roles were reversed. He figured out his wife had resumed an affair she had ended years earlier when the guy suddenly started showing up in his People You May Know. Facebook was essentially telling him, “Hey, this guy is part of your network again”.

He confronted his wife and she admitted to it. “Thank you, Facebook, for being the fucking Stasi”, he texted me.

Facebook won’t make its current People You May Know team available for interviews. But in a leaked memo published by BuzzFeed in March, Facebook executive Andrew Bosworth explained the thinking that motivates tools like PYMK.

“The ugly truth is that we believe in connecting people so deeply that anything that allows us to connect more people more often is *de facto* good”, he wrote in 2016. “That’s why all the work we do in growth is justified. All the questionable contact importing practices. All the subtle language that helps people stay searchable by friends”.

In other words, People You May Know is an invaluable product because it helps connect Facebook users, whether they want to be connected or not. It seems clear that for some users, People You May Know is a problem.

It’s not a feature they want and not a feature they want to be part of. When the feature debuted in 2008, Facebook said that if you didn’t like it, you could “x” out the people who appeared there repeatedly and eventually it would disappear. (If you don’t see the feature on your own Facebook page, that may be the reason why.) But that wouldn’t stop you from continuing to be recommended to other users.

Facebook needs to give people a hard out for the feature, because scouring phone address books and email inboxes to connect you with other Facebook users, while welcome to some people, is offensive and harmful to others. Through its aggressive data-mining, this huge corporation is gaining unwanted insight into our medical privacy, past heartaches, family dramas, sensitive work associations and random one-time encounters.

So Facebook, consider belatedly celebrating People You May Know’s 10th anniversary by letting users opt out of it entirely.