Even if you know what to look for, spotting phoney photos on the internet isn’t always the easiest thing to do, and it’s only going to get harder. Fortunately, Adobe (which you could strongly argue is responsible for the whole “photoshopping” thing) is on the front lines, fighting forgeries with an AI it has taught to spot the telltale signs of tampering.

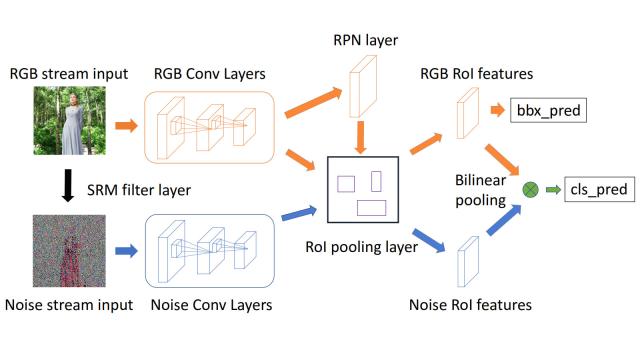

A paper from Adobe Research, entitled “Learning Rich Features for Image Manipulation Detection”, explains how researchers trained a neural network to recognise three types of manipulation (splicing, copy-move and removal) to sift the real from the unreal.

According to senior research scientist Vlad Morariu, a lot of it comes down to “imperceptible noise”, which is basically impossible for humans to notice, but a massive red flag to a machine:

“Every image has its own types of imperceptible noise statistics, so when you manipulate an image, you actually move the noise statistics along with the content. We can … identify these small differences.”

The paper describes a two-pronged approach, pairing an RGB stream with a noise stream, that was able to “[detect] tampering artifacts [and distinguish] between various tampering techniques”. Best of all, it proved robust to “augmentation methods”, that is, efforts from the forger to hide edits using either added noise or JPEG compression.
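To get a feel for the noise-statistics idea, here is a toy sketch in Python. It is not Adobe's method: the real system is a trained neural network, while this example uses a hand-written high-pass filter and a simple threshold, both of which are assumptions made purely for illustration. The function names (`noise_residual`, `block_noise_map`, `suspicious_blocks`, `demo`), the 16-pixel block size and the 2x-median cutoff are all hypothetical. The demo builds a synthetic image with faint noise, pastes in a patch that carries stronger noise, and checks which blocks stand out.

```python
import numpy as np

def noise_residual(gray):
    """High-pass residual: each pixel minus the mean of its four neighbours.

    A stand-in for a noise filter (an assumption, not the paper's filter).
    """
    gray = np.asarray(gray, dtype=np.float64)
    padded = np.pad(gray, 1, mode="reflect")
    neighbours = (padded[:-2, 1:-1] + padded[2:, 1:-1] +
                  padded[1:-1, :-2] + padded[1:-1, 2:]) / 4.0
    return gray - neighbours

def block_noise_map(gray, block=16):
    """Standard deviation of the residual inside each block x block tile."""
    res = noise_residual(gray)
    rows, cols = res.shape[0] // block, res.shape[1] // block
    tiles = res[:rows * block, :cols * block].reshape(rows, block, cols, block)
    return tiles.std(axis=(1, 3))

def suspicious_blocks(noise_map, factor=2.0):
    """Flag tiles whose noise level is well above the image-wide median."""
    return noise_map > factor * np.median(noise_map)

def demo(seed=0):
    """Synthetic 128x128 image with a 'spliced' patch of stronger noise."""
    rng = np.random.default_rng(seed)
    image = 128.0 + rng.normal(0.0, 2.0, size=(128, 128))   # host image noise
    image[32:64, 64:96] = 128.0 + rng.normal(0.0, 8.0, size=(32, 32))  # pasted patch
    return suspicious_blocks(block_noise_map(image))

if __name__ == "__main__":
    flags = demo()
    print(np.argwhere(flags))  # tile coordinates that look out of place
```

The patch is invisible as "content" (both regions are flat grey), yet its tiles stand out because their noise level differs from the rest of the image. Real photos are far messier, which is why the researchers train a network rather than rely on a fixed threshold like this one.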

Hopefully the research will find its way into an app or, better yet, be incorporated into search engines. Imagine Google Image Search auto-flagging suspect content? Sounds good to me.

[Open Access, via PetaPixel]