Across the US, law enforcement agencies are teaming up with data firms to bring facial recognition to public spaces. Newly released documents from Oregon officials using Amazon’s facial recognition offer our clearest look yet into how US cops and their tech partners are massaging the ugly truths of facial recognition, including frequent mismatches, its use on people not suspected of crimes, and how to sell the public on something so obviously creepy, a task even police aren’t sure they’re up to.

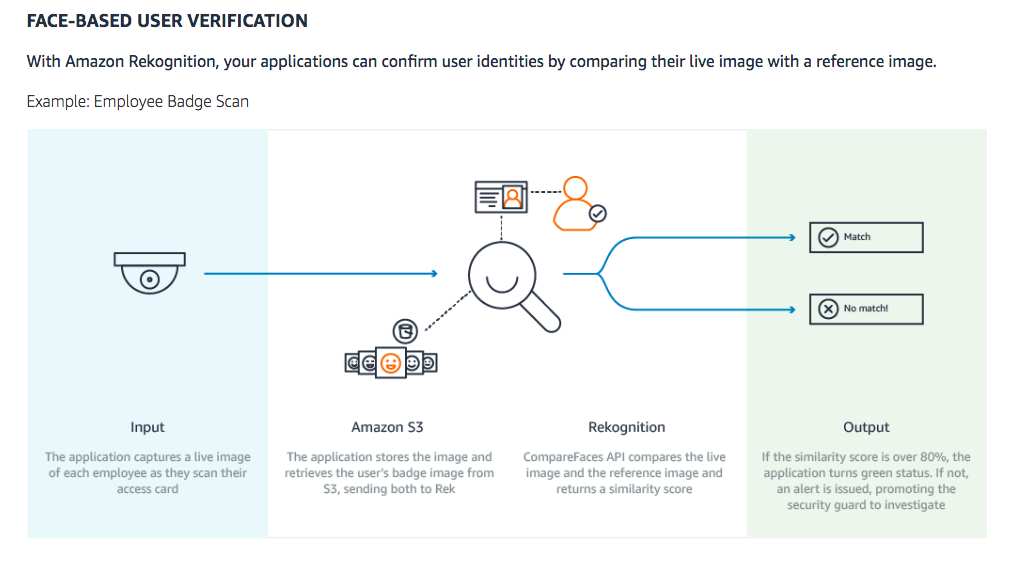

Rekognition, Amazon’s own face recognition effort, isn’t a secret. It has its own landing page where Amazon touts its partnerships with both private companies and police departments. The innocent-seeming applications advertised by Amazon, however, become far more troubling when applied to government surveillance. Consider this illustration showing how Rekognition can be used for scanning employee badges. Boring, but functionally the same as using surveillance cameras to match people against a government database: a real-time image is compared to a stored one.

When selling the same technology for surveillance purposes, Amazon advertises how Rekognition can use AI to compare people captured in live or recorded footage against huge databases, making “investigation and monitoring of individuals easy and accurate.” One government partner that has explored this application is Oregon’s Washington County, whose sheriff’s office has been using Rekognition to match surveillance photos and videos to a database of almost 300,000 mugshots. Through public records requests, the ACLU was able to obtain revealing internal documents related to the county’s use of Amazon’s tech.

While Amazon’s marketing materials focus on how law enforcement can use Rekognition to find “persons of interest,” internal emails reveal Washington County police have used Rekognition to identify “unconscious or deceased individuals,” as well as “possible witnesses and accomplices in images.” According to the ACLU, it is not illegal to refuse to provide ID to a police officer in Oregon. With Rekognition, of course, police need not ask.

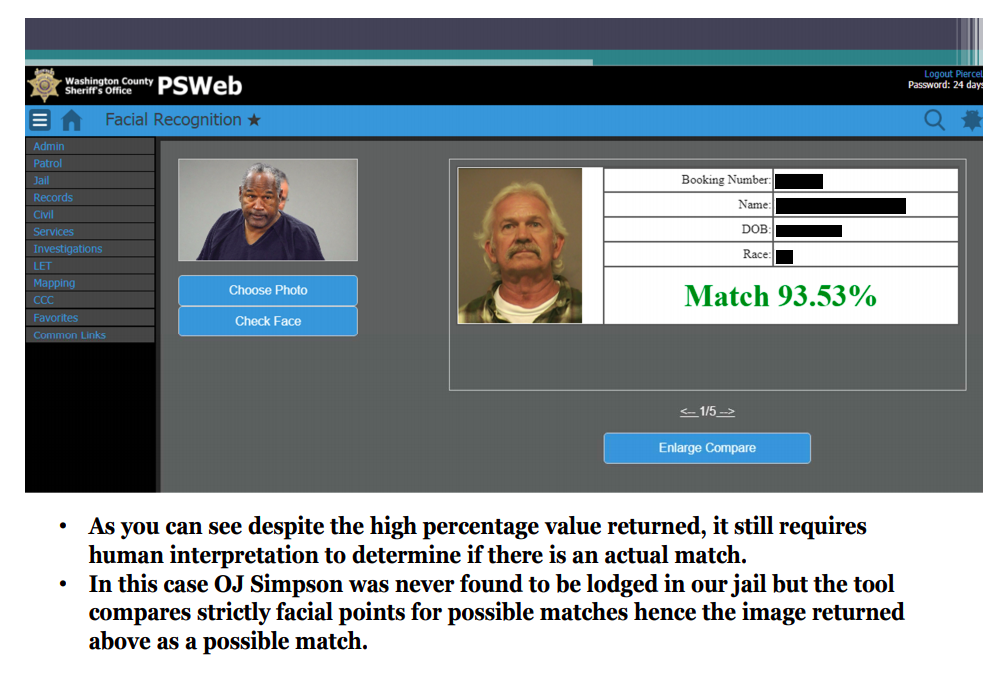

Other documents show the potential for mismatches by Amazon’s technology. In one screenshot, Rekognition identifies an image of O.J. Simpson as a 93.53 per cent match with a (notably white) arrestee. It’s unclear if the matched man was even convicted of a crime – mugshots are taken when people are arrested, not when they’re found guilty. If suspects are cleared of charges, there’s no guarantee their photo will be deleted from a mugshot database.

Screenshot: ACLU of Northern California

Email exchanges also demonstrate that government officials understand how unsettling Rekognition’s power is. In one email, a Washington County official explains how the county sheriff is cautious about publicizing his office’s partnership with Amazon, writing the sheriff is “hesitant about appearing to be ‘in bed’ with big data.” In another, an official relates how the county’s executive staff is similarly concerned about optics.

“Even though our software is being used to identify persons of interest from images provided to the [sheriff’s office],” the email reads, “the perception might be that we are constantly checking faces from everything, kind of a Big Brother vibe.”

Perhaps most troubling of all, a series of emails shows two Amazon representatives setting up a call between a Washington County police official and a vice president at Federal Signal, a body camera manufacturer. The topic was how to sway public opinion on the use of face recognition with camera footage, referred to in a later email as “people challenges.”

“My colleague has a customer that manufactures police body cameras,” reads the initial email, “he was a bit sceptical that recognition of individuals in video feeds would be adopted at the moment because of all the issues surrounding it. That being said, he also believes that this technology will eventually be used broadly. He would love to understand how you overcame stakeholder resistance in order to get this cutting-edge technology implemented.”

Face recognition in body cameras has long been considered a terrifying turning point in law enforcement. While surveillance cameras are limited by their physical placement, real-time face recognition with body cameras would turn police officers into roving surveillance platforms. There’s no indication Rekognition is being used in body cameras (as an official notes in one email, doing so is illegal in Oregon, “So that would be one huge barrier”), but the courting of Federal Signal shows at least some Amazon employees are eager about the possibility.

In conjunction with the ACLU’s release of the documents, more than 40 civil rights organisations joined together to call for Amazon to end sales of Rekognition to law enforcement. The letter is addressed to Amazon CEO Jeff Bezos. From the open letter:

Amazon Rekognition is primed for abuse in the hands of governments. This product poses a grave threat to communities, including people of colour and immigrants, and to the trust and respect Amazon has worked to build. Amazon must act swiftly to stand up for civil rights and civil liberties, including those of its own customers, and take Rekognition off the table for governments.

Update 6:18pm: In a statement to Gizmodo, Amazon said that it “requires that customers comply with the law and be responsible” and if its services are abused, “we suspend that customer’s right to use our services”:

Amazon Rekognition is a technology that helps automate recognising people, objects, and activities in video and photos based on inputs provided by the customer. For example, if the customer provided images of a chair, Rekognition could help find other chair images in a library of photos uploaded by the customer. As a technology, Amazon Rekognition has many useful applications in the real world (e.g. various agencies have used Rekognition to find abducted people, amusement parks use Rekognition to find lost children, the Royal Wedding that just occurred this past weekend used Rekognition to identify wedding attendees, etc.). And, the utility of AI services like this will only increase as more companies start using advanced technologies like Amazon Rekognition. Our quality of life would be much worse today if we outlawed new technology because some people could choose to abuse the technology. Imagine if customers couldn’t buy a computer because it was possible to use that computer for illegal purposes? Like any of our AWS services, we require our customers to comply with the law and be responsible when using Amazon Rekognition.