A new review of face recognition software found that, when identifying gender, the software is most accurate for men with light skin and least accurate for women with dark skin. Joy Buolamwini, an MIT Media Lab researcher and computer scientist, tested three commercial gender classifiers offered as part of face recognition services. She found that the software misidentified the gender of dark-skinned women as much as 35 per cent of the time. By contrast, the error rate for light-skinned men was less than one per cent.

Photo: AP

“Overall, male subjects were more accurately classified than female subjects replicating previous findings (Ngan et al., 2015), and lighter subjects were more accurately classified than darker individuals,” write Buolamwini and co-author Timnit Gebru in the paper. “An intersectional breakdown reveals that all classifiers performed worst on darker female subjects.”

The results mirror previous findings about the failures of face recognition software when identifying women and people with darker skin. As noted by Georgetown University’s Center on Privacy and Technology, these gender and racial disparities could, in the context of airport facial scans, make women and minorities more likely to be singled out for invasive processing such as manual fingerprinting.

Face recognition software is trained on thousands upon thousands of images in a dataset, refining its ability to extract useful data points and ignore what isn’t useful. As Buolamwini notes, many of these datasets are themselves biased. Adience, one gender classification benchmark, features subjects who are 86 per cent light-skinned. Another dataset, IJB-A, features subjects who are 79 per cent light-skinned.

Among other problems, skewed datasets allow companies to call their face recognition software “accurate” when it is really only accurate for people similar to those in the dataset: mostly men, mostly lighter-skinned. Darker-skinned women were the least represented group in these datasets, making up 7.4 per cent of Adience and 4.4 per cent of IJB-A. This becomes a problem when companies rely on these datasets.

Buolamwini tested three commercial software APIs: Microsoft’s Cognitive Services Face API, IBM’s Watson Visual Recognition API, and Face++, from a Chinese computer vision company that has provided tech for Lenovo. She checked whether each could reliably classify the gender of the person in each photo.

She found that all three classifiers performed better on male faces than on female faces, and all were least accurate when determining the gender of dark-skinned women. Face++ and IBM had classification error rates of 34.5 and 34.7 per cent, respectively, on dark-skinned women, while both had error rates below one per cent on light-skinned men. Microsoft’s error rate was 20.8 per cent for dark-skinned women and effectively zero for light-skinned men.
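To make the idea of an intersectional breakdown concrete, here is a minimal sketch of how per-subgroup error rates could be computed from audit results. The records and the function name `error_rates_by_group` are hypothetical toy values invented for illustration; they are not Buolamwini's data or her code.

```python
from collections import defaultdict

# Hypothetical audit records: (skin_type, true_gender, predicted_gender).
# Made-up toy values for illustration only, not data from the study.
records = [
    ("darker", "female", "male"),
    ("darker", "female", "female"),
    ("darker", "male", "male"),
    ("lighter", "female", "female"),
    ("lighter", "male", "male"),
    ("lighter", "male", "male"),
]

def error_rates_by_group(records):
    """Return the misclassification rate for each (skin_type, gender) subgroup."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for skin, true_gender, predicted in records:
        group = (skin, true_gender)
        totals[group] += 1
        if predicted != true_gender:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

# Print each subgroup's error rate as a percentage.
for group, rate in sorted(error_rates_by_group(records).items()):
    print(group, f"{rate:.1%}")
```

In this toy example the overall accuracy is five out of six, yet one subgroup (darker-skinned women) has a 50 per cent error rate. Splitting results by skin type and gender at the same time is what exposes a gap that a single aggregate figure hides.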

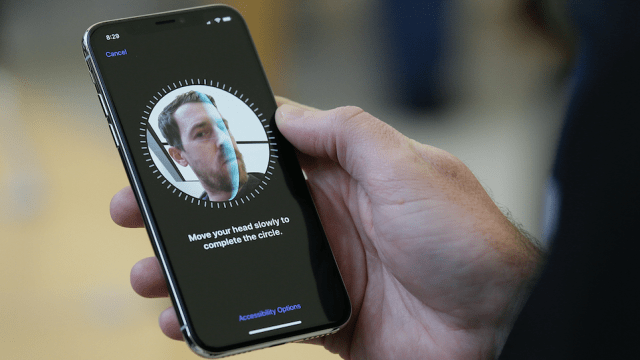

Buolamwini hopes for parity in face recognition accuracy, particularly as face recognition software has become standardised in law enforcement and counterterrorism. Passengers are scanned in airports, spectators are scanned in arenas, and, in the age of the iPhone X, everyone may soon be scanned by their phones. Citing the Georgetown study, Buolamwini warns that as face recognition becomes standard in airports, and those who “fail” the test receive extra scrutiny, a feedback loop of bias could develop. In the paper, she expresses hope that the field will embrace more intersectional audits that examine disproportionate impacts, particularly as AI is poised to become a core part of our society.

[NYT]