Facebook announced a new messaging service yesterday for children as young as six. The new app, Messenger Kids, enables Facebook to target parents whose kids are still too young to use the world’s largest social network (which requires users to be at least 13 years old). Messenger Kids has parental controls and policies in place to ban inappropriate content and cyberbullying, but that doesn’t make the service exempt from Facebook’s pattern of moderation failures or the broader evils of the interweb. And in the event a child is harassed or exposed to banned content in Messenger Kids, the burden falls in part on Facebook’s human moderators to act.

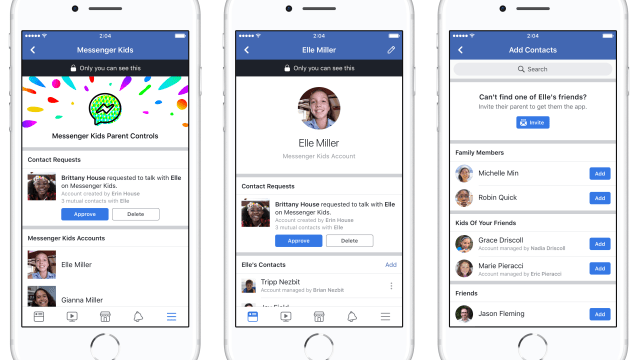

Photo: Facebook

So how does Facebook plan to moderate its Kids app? As on the main Facebook network, users can report content inside Messenger Kids. But the app also has its own “dedicated Community Operations team,” a Facebook spokesperson told Gizmodo. The team “will have a fast turnaround time to make sure all reports are handled quickly,” said the spokesperson, but Facebook did not respond when asked how fast that turnaround time will be. When a child reports content or another account, Facebook says it will also notify a parent.

The automated moderation tech used for the Kids app “has more stringent policies, so it is implemented differently” than Facebook’s typical moderation tools, the spokesperson said. Like Facebook, Messenger Kids has “real people looking at those reports and making the decisions”, but automated systems sometimes take over, for example to handle duplicate content reports and “spam attacks”.

Facebook’s existing Community Standards apply to Messenger Kids, the company says, but beyond that, specialised “reviewers [for Messenger Kids] will also look for other signs of potential abuse and take appropriate action, whether it’s removing specific messages or entire accounts for repeated abuse”. The spokesperson said that Facebook will continue to update the enforcement of its policies depending on the types of content that Messenger Kids users report. But the company did not respond to our questions asking what it means by “stringent policies” or if it will publicly disclose them.

Judging from Facebook’s own announcement posts, Messenger Kids is seemingly set up to maintain an appropriate space for young minds looking to dip into the wild world of the web. But as Facebook has shown in the past, community standards, a team of moderators, and automated tools do not necessarily ensure that the platform will be moderated appropriately, efficiently, and free of bias.

An investigative report from ProPublica this year found that Facebook’s policies protected white men and hate speech. Also this year, Marines were caught sharing nonconsensual intimate photos of female colleagues in private Facebook groups. More than a week after the scandal was revealed, Facebook had still failed to take down some of the groups that had posted nude photos. Facebook also mistook a Pulitzer-winning photograph for child porn, its automated moderation tools wrongfully took down the Facebook Live video documenting the moments after Philando Castile’s shooting, and a bug once leaked the identities of moderators to suspected terrorists.

All of these screwups signal that while Facebook has systems – both human and machine – in place to handle inappropriate content on its services, those systems are flawed. For people (read: not six-year-olds) accustomed to the hellish landscape of the internet, these risks are expected. But as parents consider plugging their babies into the internet (metaphorically speaking), they should also understand that even the most outwardly well-meaning social app is bound to include its own hidden risks.