Artificial intelligence is coming to your phone. The iPhone X has a Neural Engine as part of its A11 Bionic chip; the Huawei Kirin 970 chip has what the company calls a Neural Processing Unit, or NPU; and the Pixel 2 has a secret AI-powered imaging chip that just got activated. So what exactly are these next-gen chips designed to do?

As mobile chipsets have grown smaller and more sophisticated, they have started to take on more jobs, and more kinds of jobs. Case in point: integrated graphics. GPUs now sit alongside CPUs at the heart of high-end smartphones, handling the heavy lifting for the visuals so the main processor can take a breather or get busy with something else.

The new breed of AI chips works in much the same way – only this time the designated tasks are recognising pictures of your pets rather than rendering photo-realistic FPS backgrounds.

What we talk about when we talk about AI

AI, or artificial intelligence, means just that. The scope of the term tends to shift and evolve over time, but broadly speaking it’s anything where a machine can show human-style thought and reasoning.

A person hidden behind a screen, operating the levers of a mechanical robot, is artificial intelligence in the broadest sense. Today’s AI is of course way beyond that, but having a programmer code responses into a computer system is just a more advanced way of getting the same end result: a robot that acts like a human.

Google, show me some dogs. Image: Screenshot

When it comes to computer science and the smartphone in your pocket, AI tends to be more narrowly defined. In particular, it usually involves machine learning, the ability of a system to learn from data rather than relying only on its original programming, and deep learning, a type of machine learning that loosely mimics the human brain with many layers of computation. These layered systems are called neural networks, after the networks of neurons inside our heads.

So a machine learning system might be able to spot a spam message in your inbox based on the spam it’s seen before, even if the characteristics of the incoming email were never coded into the filter. It has learned what spam email looks like.
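To make that concrete, here is a minimal sketch of a naive Bayes filter, one classic machine learning technique for spam (not necessarily what any particular email provider uses). The training messages are hypothetical. Nothing in the code says which words are spammy; the filter works that out by counting words in the labelled examples it is given.

```python
import math
from collections import Counter

def train(messages):
    """Count word frequencies per label from (text, label) pairs."""
    counts = {"spam": Counter(), "ham": Counter()}
    totals = Counter()
    for text, label in messages:
        counts[label].update(text.lower().split())
        totals[label] += 1
    return counts, totals

def classify(text, counts, totals):
    """Return the label with the higher naive Bayes log-score (add-one smoothing)."""
    vocab = set(counts["spam"]) | set(counts["ham"])
    n_messages = sum(totals.values())
    best_label, best_score = None, -math.inf
    for label in ("spam", "ham"):
        word_total = sum(counts[label].values())
        # Log prior: how common this label was in the training data
        score = math.log(totals[label] / n_messages)
        for word in text.lower().split():
            # Add-one smoothing so an unseen word doesn't zero out the probability
            score += math.log((counts[label][word] + 1) / (word_total + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical labelled examples ("ham" means legitimate email)
training = [
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("meeting moved to friday", "ham"),
    ("lunch on friday with the team", "ham"),
]
counts, totals = train(training)
print(classify("free prize money", counts, totals))     # spam
print(classify("team meeting friday", counts, totals))  # ham
```

Note that "free prize money" was never in the training set as a whole message; the filter flags it because its individual words mostly appeared in spam.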

Deep learning is very similar, just more advanced and nuanced, and better at certain tasks, especially in computer vision. The “deep” refers to the many stacked layers in the network, which typically need far more training data and end up with more finely tuned weightings. The best-known example is learning to recognise what a dog looks like from a million pictures of dogs.
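What a “layer” actually does can be shown in a few lines. The sketch below assumes the simplest kind, a fully connected layer: each output is a weighted sum of the inputs plus a bias, passed through a ReLU activation. The weights here are made up for illustration; in a real network they would be learned from training data, and there would be far more of them and far more layers.

```python
def dense(inputs, weights, biases):
    """One fully connected layer: weighted sums followed by a ReLU activation."""
    outputs = []
    for row, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(row, inputs)) + bias
        outputs.append(max(0.0, total))  # ReLU: keep positives, zero out negatives
    return outputs

def forward(inputs, layers):
    """Pass the input through each layer in turn; 'deep' means stacking many of these."""
    activations = inputs
    for weights, biases in layers:
        activations = dense(activations, weights, biases)
    return activations

# Hypothetical weights and biases, chosen by hand purely for illustration
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),  # layer 1: 3 inputs -> 2 outputs
    ([[1.0, -1.0]], [0.2]),                              # layer 2: 2 inputs -> 1 output
]
result = forward([1.0, 2.0, 3.0], layers)
print(result)  # a single value close to 0.1
```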

Plain old machine learning could do the same image recognition task, but it would take longer, need more manual coding, and not be as accurate, especially as the variety of images increased. With the help of today’s superpowered hardware, deep learning (a particular approach to machine learning, remember) is much better at the job.

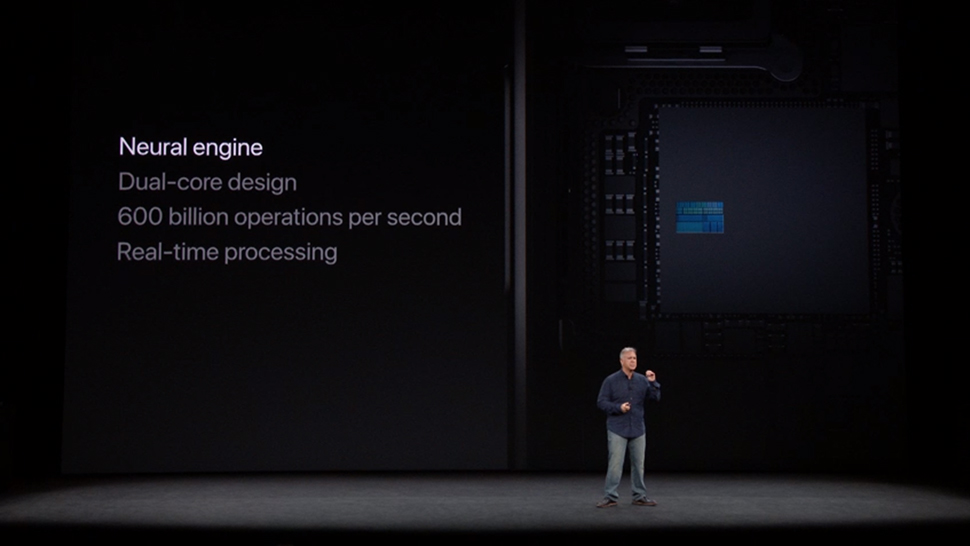

Apple introduces its Neural Engine. Image: Apple

To put it another way, a traditional machine learning system would have to be told that cats have whiskers before it could recognise cats. A deep learning system would work out that cats have whiskers on its own.
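Here is the “being told about whiskers” half of that contrast, as a rough sketch. A human has decided in advance which features matter (the hypothetical `has_whiskers`, `pointy_ears` and `barks` flags), and a classic perceptron only learns how much weight to give each one. A deep learning system would instead start from raw pixels and discover useful features for itself, which is too much to show here.

```python
def predict(weights, bias, features):
    """Return 1 (cat) if the weighted sum of features is positive, else 0."""
    return 1 if sum(w * f for w, f in zip(weights, features)) + bias > 0 else 0

def train_perceptron(examples, epochs=10, lr=1.0):
    """Classic perceptron rule: nudge the weights toward the right answer on each mistake."""
    n = len(examples[0][0])
    weights, bias = [0.0] * n, 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)
            weights = [w + lr * error * f for w, f in zip(weights, features)]
            bias += lr * error
    return weights, bias

# Hand-chosen features (a human picked these): [has_whiskers, pointy_ears, barks]
examples = [
    ([1, 1, 0], 1),  # cat
    ([1, 0, 0], 1),  # cat with folded ears
    ([1, 1, 1], 0),  # dog with whiskers and pointy ears
    ([0, 0, 1], 0),  # dog
]
weights, bias = train_perceptron(examples)
print([predict(weights, bias, f) for f, _ in examples])  # [1, 1, 0, 0]
```

If the human forgot to include a useful feature, this kind of system would have no way to invent it.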

Bear in mind that an AI expert could write a volume of books on the concepts we’ve just covered in a couple of paragraphs, so we’ve had to simplify it, but those are the basic ideas you need to know.

AI chips on smartphones

As we said at the start, AI chips essentially do what GPUs do, only for artificial intelligence rather than graphics: they offer a separate space where the calculations most important to machine learning and deep learning can be carried out. As with GPUs and 3D graphics, AI chips free up the CPU to focus on other tasks and reduce battery draw at the same time. It also means your data is more secure, because less of it has to be sent off to the cloud for processing.

So what does this mean in the real world? It means image recognition and processing could be a lot faster. For instance, Huawei claims that its NPU can perform image recognition on 2,000 pictures every second, which it says is 20 times faster than a standard CPU.
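Taking Huawei’s two claims at face value, a little arithmetic shows what they imply per picture. These are the company’s headline numbers, not independent measurements, and the “standard CPU” rate is simply derived from the claimed 20x speedup.

```python
# Huawei's claimed figures, taken at face value
npu_images_per_second = 2_000
claimed_speedup = 20

cpu_images_per_second = npu_images_per_second / claimed_speedup  # implied CPU rate
npu_ms_per_image = 1_000 / npu_images_per_second
cpu_ms_per_image = 1_000 / cpu_images_per_second

print(f"Implied CPU rate: {cpu_images_per_second:.0f} images/s")
print(f"NPU: {npu_ms_per_image} ms per image, CPU: {cpu_ms_per_image} ms per image")
```

In other words, the claim works out to roughly half a millisecond per picture on the NPU versus around 10 milliseconds on the CPU.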

The Huawei Kirin 970 has a dedicated AI component. Image: Huawei

More specifically, it can perform 1.92 teraflops (a teraflop is a trillion floating point operations per second) when working with 16-bit floating point numbers. Unlike integers, or whole numbers, floating point numbers can represent fractional values, and they are crucial to the calculations running through the neural networks involved in deep learning.
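Why 16-bit? A 16-bit (“half precision”) number takes half the storage of a standard 32-bit one, so hardware can generally move and process more of them at once, at the cost of precision. Neural networks often tolerate that loss reasonably well. The sketch below uses Python’s built-in `struct` module, whose `'e'` and `'f'` formats pack IEEE 754 half- and single-precision floats, to show how much rounding each format introduces.

```python
import struct

def to_float16(x):
    """Round-trip a Python float through IEEE 754 half precision (struct format 'e')."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_float32(x):
    """Round-trip a Python float through IEEE 754 single precision (struct format 'f')."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

for value in (0.1, 3.14159, 1000.7):
    print(value, "-> fp32:", to_float32(value), "fp16:", to_float16(value))
```

In half precision, 3.14159 comes back as 3.140625 and 1000.7 as 1000.5: close enough for many neural network calculations, but clearly coarser than 32-bit.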

Apple calls its AI chip, part of the A11 Bionic chip, the Neural Engine. Again, it’s dedicated to machine learning and deep learning tasks – recognising your face, recognising your voice, recording animojis, and recognising what you’re trying to frame in the camera. It can handle some 600 billion operations per second, Apple claims.
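Figures like “600 billion operations per second” make more sense once you count the operations a neural network needs. Most of the work in a fully connected layer is multiplying each input by a weight and adding the results up. The sketch below assumes a small, hypothetical network and counts each multiply and each add as one operation; Apple hasn’t spelled out exactly what it counts as an operation, and real chips rarely sustain their peak rate, so treat the result as a best-case ballpark.

```python
def dense_layer_ops(n_inputs, n_outputs):
    """Operations for one fully connected layer, counting each multiply and each add as one."""
    multiplies = n_inputs * n_outputs
    adds = n_inputs * n_outputs  # one add per multiply, counting the bias add
    return multiplies + adds

# Hypothetical small network: 1,000 inputs -> 500 -> 500 -> 10 outputs
layer_sizes = [1_000, 500, 500, 10]
total_ops = sum(dense_layer_ops(a, b) for a, b in zip(layer_sizes, layer_sizes[1:]))

claimed_ops_per_second = 600e9  # Apple's claimed Neural Engine figure
seconds = total_ops / claimed_ops_per_second
print(f"{total_ops:,} operations, about {seconds * 1e6:.1f} microseconds at the claimed peak")
```

This ignores the time spent moving data in and out of memory, which in practice is often the real bottleneck.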

App developers can tap into this through Core ML, an easy plug-and-play way of incorporating image recognition and other AI algorithms into their apps. Core ML doesn’t need an iPhone X to run, but the Neural Engine handles these kinds of tasks faster. As with the Huawei chip, the time spent offloading all this data processing to the cloud should be vastly reduced, theoretically improving performance and again lessening the strain on battery life.

AI chips, recognising faces now, and much more soon. Image: Apple

And that’s really what these chips are about: handling the specific kinds of computation that machine learning, deep learning, and neural networks rely on, right there on the phone, faster than the CPU or GPU can manage. When Face ID works in a snap, you’ve likely got the Neural Engine to thank.

Is this the future? Will all smartphones inevitably come with dedicated AI chips? As the role of artificial intelligence on our handsets grows, the answer is likely yes. Qualcomm chips can already use specific parts of the CPU for specific AI tasks, and separate AI chips are the next step. Right now these chips are only being used for a small subset of tasks, but their importance is only going to grow.