A few weeks ago, Google announced that it had teamed up with a mental health advocacy group to take a stab at addressing the US epidemic of depression. People who type the words “clinical depression” into Google search via mobile in the US are now presented automatically with a link to a screening questionnaire to assess their depression. The assertion that Google has the answer to everything just got taken to a whole new level.


Google is not the only company hoping there may be some digital solutions to the problems of mental health. Apple recently posted a job listing for programmers with psychology and counselling backgrounds in order to turn Siri into a better therapist. On Thursday, the Food and Drug Administration approved the first mobile app for treating substance abuse, an app from Pear Therapeutics originally designed to be prescribed by a physician.

The pharmaceutical company GlaxoSmithKline inked a deal to create a “digitally guided therapy”, one of many such ventures in the growing “digital therapeutics” space hoping to move health apps beyond wellness trends.

It makes sense. Our phones have become intimate companions of sorts. As Apple noted in its job posting: “People talk to Siri about all kinds of things, including when they’re having a stressful day or have something serious on their mind. They turn to Siri in emergencies or when they want guidance on living a healthier life.”

A small contingent of experts, though, is arguing that asking Google to diagnose depression might be taking things too far.

In a paper published in the journal BMJ this week, University of York psychologist Simon Gilbody said the chance of receiving a “false positive” on Google’s depression questionnaire was high and the test “may in fact do harm”.

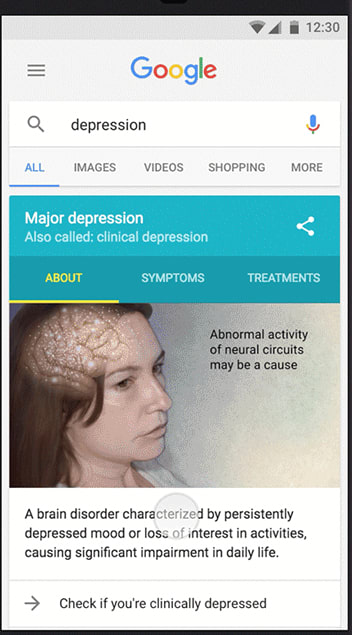

The patient health questionnaire offered by Google is a screening tool used by doctors to test a patient’s level of depression. For those with high scores, Google will offer links to materials from the National Alliance on Mental Illness and telephone helplines. The end goal is to drive higher percentages of people to seek treatment for depression.


Gilbody also raised concerns that the treatment resources Google offers its users are inadequate.

“Google’s initiative has been reported positively and uncritically despite bypassing the usual checks and balances that exist for good reason. It is unlikely that their initiative will improve population health and may in fact do harm,” he wrote.

John Grohol, a psychologist who runs the website PsychCentral, also said the move raises red flags, especially when it comes to questions of user privacy.

“Google is a technology and marketing company that collects people’s data,” he told Gizmodo. “It’s not really clear about how they would use this information. I wouldn’t want sensitive information like mental health to be in the hands of a marketing company.”

Indeed, Google's privacy policy says that your answers and results will never be linked to you without your consent, but it also says that anonymized data “may be used in aggregate to improve your experience.” In other words, Google could use information about your mental health to serve you ads, among other things.

While Google, Siri or some other app will not replace the need for a visit to a doctor’s office any time soon, these new developments offer a step towards technology products that do a better job of recognising the complexity of human emotional needs. This is a big improvement.

And just maybe, clinical psychiatrist Ken Duckworth wrote in BMJ in defence of Google, such initiatives can raise awareness and help more people seek treatment. Just don’t expect Google to hand you a tissue.