Back in January, Facebook CEO Mark Zuckerberg said he was “quite proud of the impact that we were able to have on civic discourse”, doubling down on his stance that the rise of misinformation, spread of outright propaganda, and rapid erosion of trust in the fourth estate were anyone’s problems but his. A whitepaper from the world’s largest social media platform — where an estimated 66 per cent of the site’s American users get their news — casually mentions that Facebook is also fertile soil for “subtle and insidious forms of misuse, including attempts to manipulate civic discourse and deceive people”.

“Help me” Image: AP Photo/Eric Risberg

Wow, screw this guy!

The paper — first obtained by Reuters — was drafted by well-heeled analysts Jen Weedon and William Nuland, formerly from FireEye, Inc and Dell SecureWorks, respectively, as well as Alex Stamos, the social giant’s chief security officer. It outlines what teens in Russia and the United States’ far-right have known for some time now: Every social network can be gamed.

Sophisticated spear phishing attacks and malware designed to compromise the security of political operatives or journalists are dystopian as hell but not terribly novel. The meat of the paper is Facebook admitting that its product can be used as a highly-tuned, souped-up vehicle for propaganda. Armies of sockpuppet accounts strategically liking, commenting and sharing are able to inflate the visibility of posts until they reflect an ideological consensus that may not actually exist. At that point, easily swayed real humans jump on the bandwagon. “Well-executed information operations have the potential to gain influence organically, through authentic channels and networks, even if they originate from inauthentic sources, such as fake accounts,” the paper states.

If that sounds familiar, yes, we’ve been telling you this for a long time.

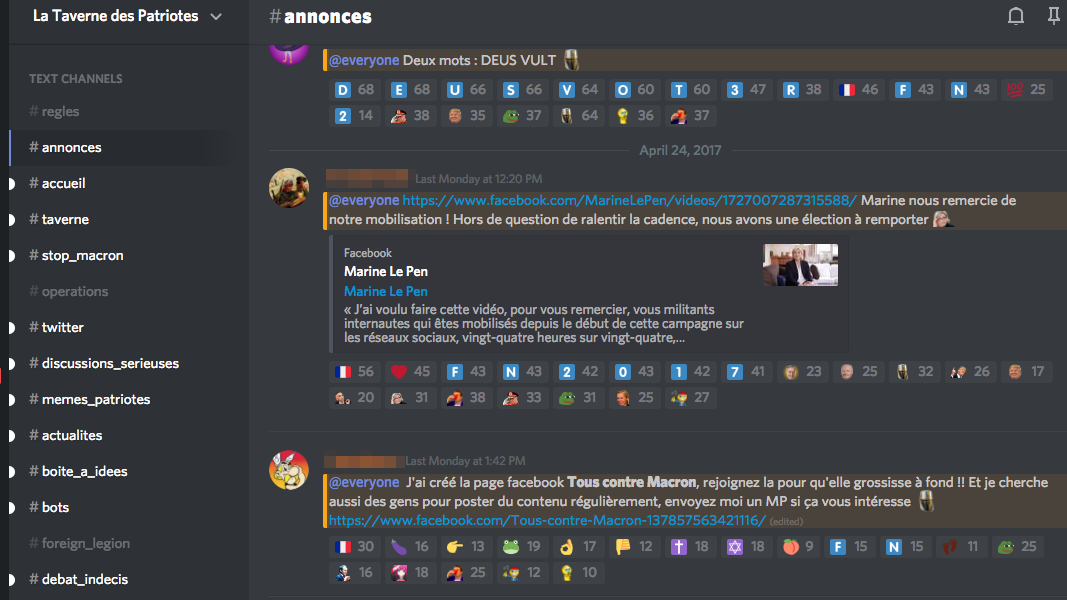

The company later pats itself on the back for “taking action against over 30,000 fake accounts” in France, presumably those attempting to sway the country’s ongoing election in favour of far-right candidate Marine Le Pen. In the highly organised Discord server La Taverne des Patriotes, attacks on other platforms are coordinated, and graphic designers create new politicised memes. The “foreign legion” room is a meeting place for those outside France who wish to influence the French election, and is mostly made up of exiles from the largely defunct Great Liberation of France server, which cropped up in the wake of Trump’s election. The third message in the announcements channel asks the server’s users to join a newly created anti-Macron Facebook group.

Image: screengrab from Discord

Whether through coordinated action by a mix of legitimate and illegitimate accounts to dominate conversation (as in the case of Reddit’s r/the_donald), or by using Twitter bot armies like the one commanded by the mysterious MicroChip to push hashtag campaigns to the masses, malicious groups have long known how to exploit social networks with terrifying efficiency. Facebook is no exception. The platform’s many far-right groups, which almost exclusively post malicious or fabricated news content, show no signs of slowing down.

Facebook already got into hot water for making determinations on what was and was not news with its Trending News module while claiming it was the impartial work of an algorithm. Now the company puts itself in the unenviable position of saying whether “organised actors” are genuine activists or operatives intending to “distort domestic or foreign political sentiment”.

“Our responses will constantly evolve, but we wanted to be transparent about our approach,” the whitepaper offers, being neither terribly transparent nor offering much by way of tangible fixes to a problem much larger than any Facebook has failed to solve to date. Given that Facebook Live can’t seem to stop people from broadcasting suicides, sex acts and violent crimes, it likely isn’t offering solutions because it has none to give.

Part of the whitepaper’s intention might be to insinuate Facebook further into the lives of those at risk of becoming the target of problems it helped to create (emphasis theirs):

Governments may want to consider what assistance is appropriate to provide to candidates and parties at risk of targeted data theft. Facebook will continue to contribute in this area by offering training materials, cooperating with government cybersecurity agencies looking to harden their officials’ and candidate’s social media activity against external attacks, and deploying in-product communications designed to reinforce observance of strong security practices.

Journalists and news professionals are essential for the health of democracies. We are working through the Facebook Journalism Project to help news organisations stay vigilant about their security.

Are you ready to set fire to all of the Menlo Park empire yet? If not, the whitepaper saves a portion of its 13 pages to — after noting the ease with which Facebook can be used to influence geopolitics — continue denying its impact in the US election. “The reach of known [information] operations during the US election of 2016 was statistically very small compared to overall engagement on political issues,” the paper notes.

Say you screwed up. Then fix it. Or admit you don’t know how.