Since 2011 the FBI has used facial recognition software to identify people during criminal investigations. The agency combs through a database of over 411 million photos, including everything from mugshots to driver’s licenses. Today, the Government Accountability Office (GAO) released a report that’s critical of the technology.

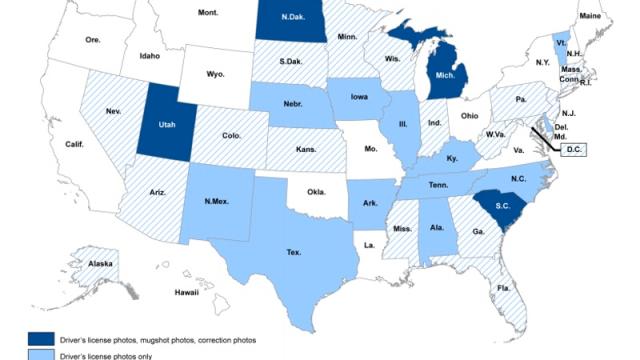

Map of where the FBI’s database photos are coming from (GAO)

The GAO is concerned not only about the privacy implications, but also about the accuracy of the FBI’s facial recognition technology. The report found that the FBI has never tested the accuracy of searches set to return fewer than 50 candidate photos.

Put more simply, if someone from the FBI has a photo of a suspect and runs it through facial recognition while requesting just two potential matches, they have no way of knowing how often the right person actually shows up in a list that short, or whether those two matches are any more accurate than the next 48 in line. Put even more simply, a lot of innocent people could be wrongly identified as suspects.

The FBI’s primary system is called the Next Generation Identification-Interstate Photo System (NGI-IPS). The agency and its local partners can submit photos taken from practically anywhere (including surveillance cameras), and they will get back a list of potential matches from roughly 30 million photos. They can also submit to a program called FACE, where over 400 million additional photos reside. But there’s a concern that the system isn’t nearly as precise as it should be, even with millions of photos at its disposal.

From the GAO report:

Although the FBI has tested the detection rate for a candidate list of 50 photos, NGI-IPS users are able to request smaller candidate lists — specifically between 2 and 50 photos. FBI officials stated that they do not know, and have not tested, the detection rate for other candidate list sizes. According to these officials, a smaller candidate list would likely lower the detection rate because a smaller candidate list may not contain a likely match that would be present in a larger candidate list. According to a Texas Department of Safety official responsible for coordinating with the FBI on the state’s NGI-IPS searches, Texas law enforcement officials request different candidate list sizes when submitting search requests, sometimes less than 50 photos. According to the FBI Information Technology Life Cycle Management Directive, testing needs to confirm the system meets all user requirements. Because the accuracy of NGI-IPS’s face recognition searches when returning fewer than 50 photos in a candidate list is unknown, the FBI is limited in understanding whether the results are accurate enough to meet NGI-IPS users’ needs.
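To see why list size matters, here is a rough Python sketch of the kind of measurement the GAO says is missing. It assumes that, for each test search, you know where the true match ranked in the results. The ranks below are invented purely for illustration and are not FBI data, and `detection_rate` is a hypothetical helper, not anything from NGI-IPS.

```python
def detection_rate(true_match_ranks, list_size):
    """Fraction of searches whose true match lands within the top `list_size` results.

    A rank of None means the true match was never returned at all.
    """
    if not true_match_ranks:
        raise ValueError("need at least one search to compute a rate")
    hits = sum(
        1 for rank in true_match_ranks
        if rank is not None and rank <= list_size
    )
    return hits / len(true_match_ranks)


# Invented ranks for ten hypothetical test searches (NOT real FBI data).
ranks = [1, 1, 3, 7, 12, 20, 35, 48, None, None]

# Compare the same searches at three different candidate list sizes.
for size in (2, 10, 50):
    print(f"list size {size}: detection rate {detection_rate(ranks, size):.0%}")
```

With these made-up numbers, the same ten searches find the right person 80 percent of the time at 50 candidates but only 20 percent of the time at two. The real figures could look very different, which is exactly the problem: nobody has measured them.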

The privacy nightmare that is our future should be abundantly clear. Even if the system were more accurate, the partnerships that the FBI is establishing with state and local law enforcement around the US mean that we’re on the precipice of some sci-fi future garbage.

Put all your favourite dystopian pre-crime fiction in a hat and pick one out at random. Minority Report, 1984, Enemy of the State — they have all become tiresome cultural touchstones for talking about the surveillance state, but they’re here. And what’s worse, the people handling the tech aren’t even testing it properly for accuracy.

As the report explains, “it is essential that both the detection rate and the false positive rate for all allowable candidate list sizes are assessed prior to the deployment of the system.” It’s a little late for that. The system went live in 2015, after a pilot program that began in 2011.

And even people with the attitude of “if you have nothing to hide you shouldn’t worry” should get worried pretty damn quick. Because you know what’s even worse than a perfect surveillance state that sees every single crime committed? An imperfect surveillance state that incorrectly thinks it found the criminal.