Not long ago, fingerprints were the cutting edge of biometric profiling. Today, the use of biosignatures to identify individuals has expanded to include everything from iris and facial scans right through to DNA profiling and even the unique shape of a person’s arse. Here’s what you need to know about how companies and governments are tracking your biometrics.

Facial Recognition

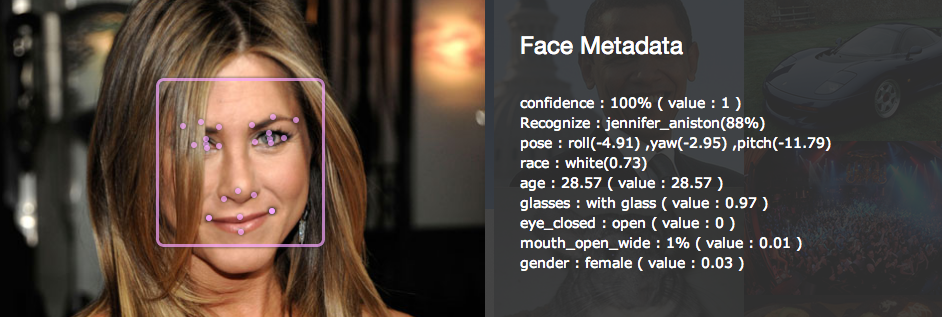

Image: ReKognition.

Facial patterning is a particularly powerful biometric technique, mostly because of how easy it is to have our pictures taken (both knowingly and covertly) and how often our mugs appear on the internet. What’s more, mobile devices and laptops are potent and ubiquitous vectors for acquiring and disseminating this information. It’s also not easy for us to hide our faces.

Indeed, some members of the U.S. Congress have been pressuring Google into blocking facial recognition software on Google Glass. The company has implemented a ban on this technology, but it probably won’t last forever. In fact, Google is planning to launch its open software tool-kit service, Rekognition, for Glass in the near future. For better or worse, Google Glass is already being worn by police in some jurisdictions.

Facial recognition works by scanning, measuring, and then storing facial characteristics in massive data repositories. Matching can be up to 99% accurate, but that’s usually for those “best circumstances” photos, like headshots and passport photos.

Looking to the future, upgraded versions of these technologies will be able to detect and identify faces hidden in the photos of eyes. And the company Animetrics is developing proprietary software that can turn 2D images into simulated 3D models of a person’s face, allowing users to change the person’s pose. The system can boost success rates from 35% up to 85%, and it’s already being implemented on police officers’ iPhones.

Iris and Retina Recognition

Both of these techniques involve the eye, but they’re actually two different things.

Iris scanning is one of the best authentication and identification processes in use today. Our iris, the externally visible coloured ring around the pupil, is as unique as a snowflake. It exhibits a distinctive pattern that forms randomly in utero in a process called chaotic morphogenesis. In fact, it’s estimated the chance of two irises being identical is 1 in 10^78. The scanning process involves taking a picture of the iris, which is then cross-referenced for matching purposes. The technology is fairly straightforward, utilising systems similar to what’s found in camcorders.

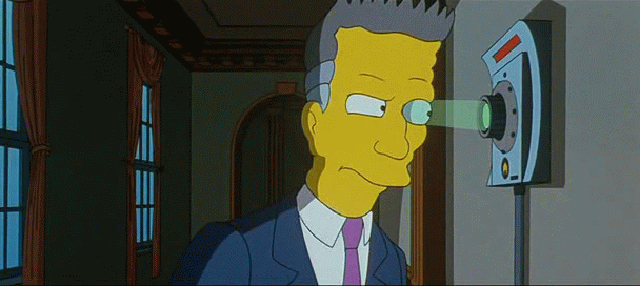

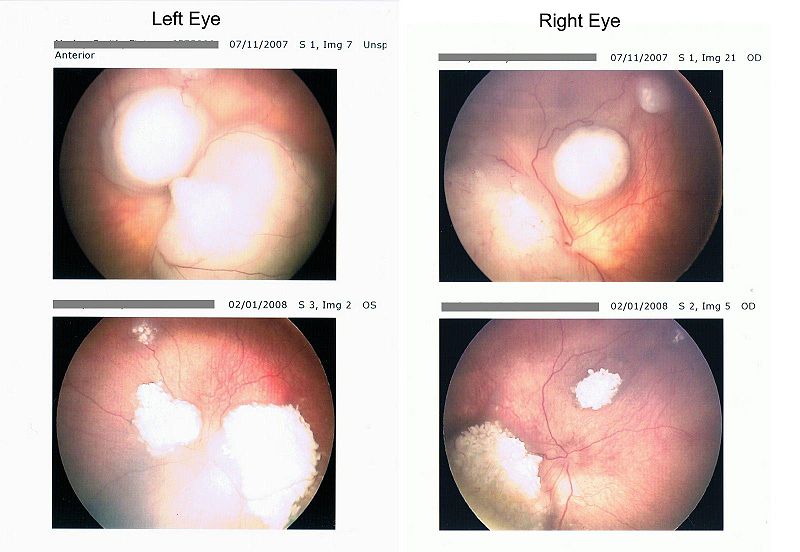

Image: Morleyj/Wikimedia Commons.
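
The article doesn’t say how an iris picture is cross-referenced. One widely described approach turns each iris into a string of bits and counts how many bits differ between two codes (the Hamming distance). The sketch below assumes that approach; the function names, the toy 16-bit codes, and the 0.32 cut-off are illustrative assumptions, not values from any particular scanner.

```python
def hamming_fraction(code_a: int, code_b: int, n_bits: int) -> float:
    """Return the fraction of the n_bits positions where the two codes differ."""
    mask = (1 << n_bits) - 1
    differing = bin((code_a ^ code_b) & mask).count("1")
    return differing / n_bits


def is_match(code_a: int, code_b: int, n_bits: int, threshold: float = 0.32) -> bool:
    """Treat two codes as the same iris if few enough bits differ.

    The threshold is an assumed, illustrative cut-off.
    """
    return hamming_fraction(code_a, code_b, n_bits) <= threshold


# Toy 16-bit codes (hypothetical data; real templates are far longer).
enrolled = 0b1011001110001101
same_eye = 0b1011001010001101   # one bit flipped by noise
other_eye = 0b0100110001110010  # every bit differs

print(is_match(enrolled, same_eye, 16))   # one differing bit out of 16
print(is_match(enrolled, other_eye, 16))  # all 16 bits differ
```

A low fraction means the two pictures probably show the same iris; where exactly to draw the line is a trade-off between false accepts and false rejects.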

Retina recognition was developed back in the 1980s. It’s a well-known technique, but not widely used. It works by mapping the unique patterns of a person’s retina; the blood vessels within the retina absorb light more readily than the surrounding tissue, making them easily identifiable with appropriate lighting. The scan is performed by casting a beam of low-energy infrared light into a person’s eye as they peer through an eyepiece. The beam traces a standardised path on the retina. Once a retinal image has been captured, the software takes care of the rest, compiling the unique features of the network of retinal blood vessels into a template.

Nose Scanning

Good luck trying to disguise your face with a pair of sunglasses. Your nose is rather hard to conceal, and it’s not susceptible to changes in expression. What’s more, it provides a fixed point for scanning devices.

Image: University of Bath.

Researchers from the University of Bath’s Department of Electronic & Electrical Engineering have developed a photometric stereo image acquisition program called PhotoFace. The system scans the 3D shape of noses and uses computer software to analyse them according to the six main nose shapes, namely Roman, Greek, Nubian, Hawk, Snub, and Turn-up. It then considers three characteristics of the nose during analysis: the ridge profile, the nose tip, and the nasion, the section between the eyes at the top of the nose.

Ear Profiling

The human ear is also unique. It’s comprised of a series of structures that can generate a host of measurable characteristics.

Image: Yale.

Ear scanning and profiling technologies are currently being developed by researchers at the University of Southampton. Their system, which considers both outer and inner ear areas, is up to 99.6% accurate. It’s also a good substitute for facial recognition, which is often confused by facial wrinkles, crow’s feet around the eyes, and other signs of ageing. Our ears, on the other hand, age relatively gracefully, growing proportionately larger over time, while our lobes become more elongated.

Posterior Profiling

But why stop at faces, noses, and ears when we can also measure a person’s arse?

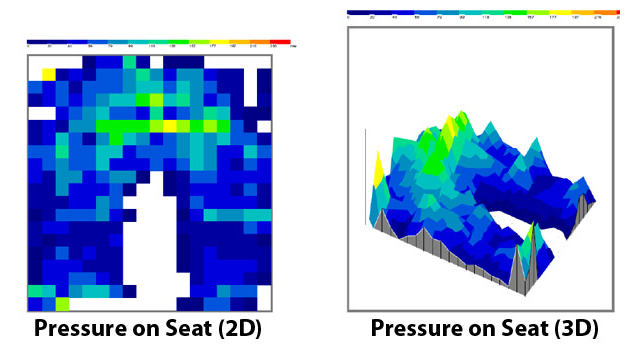

Image: Advanced Institute of Industrial Technology.

A team at the Advanced Institute of Industrial Technology in Tokyo designed its posterior sensor as an anti-theft measure for cars. The group claims that these prototype seats can recognise the owner’s butt with 98 per cent accuracy.

Voice Recognition

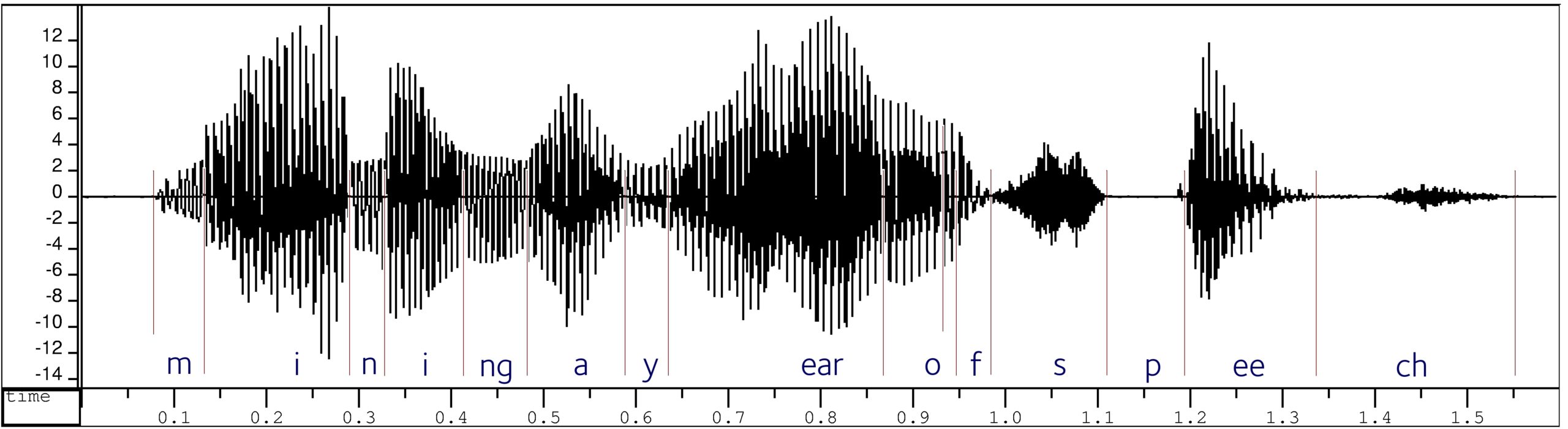

Our voices exhibit a distinct wave pattern that can be matched by software to pre-existing samples.

Image: U of Oxford.

The FBI Audio Lab has a system that does this — a voice analyser which captures the frequency, intensity, timbre, and other elements to match a voice to a person, or to determine the authenticity of a voice in a recording.

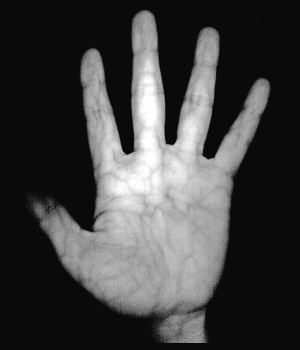

Palm Vein Patterns

This technique is already being used in some U.S. schools. It typically involves an unobtrusive near-infrared scanner that doesn’t require physical contact. Our blood veins are formed during the first eight weeks of gestation in a chaotic manner, influenced by the environment in the mother’s womb. This is why vein patterns are unique to an individual, even among twins. Veins grow along with our skeleton, and while capillary structures continue to grow and change, vascular patterns are set at birth and don’t tend to change over the course of one’s lifetime.

PatientSecure, a manufacturer of these technologies, describes how it works:

To scan the veins, an individual’s hand is placed on the hand guide (the plastic casing of the scanner device) and the vein pattern is captured by lighting the hand with near-infrared light. Veins contain deoxidized hemoglobin, an iron-containing pigment in the blood that carries oxygen through the body. These pigments absorb the near-infrared light and reduce the reflection rate, causing the veins to appear as a black pattern. An individual’s scanned palm vein data (biometric template) is encrypted for protection and registered along with the other details in his/her profile as a reference for future comparison.

Heart Rhythms

Our hearts pound at different rates throughout the day. Each person has a unique overall pattern, typically based on the size and position of the heart within the body. The Canadian firm Bionym has a system, called the Nymi Bracelet, which uses electrocardiogram technology to read these rhythms and confirm the wearer’s identity.

DNA Fingerprinting

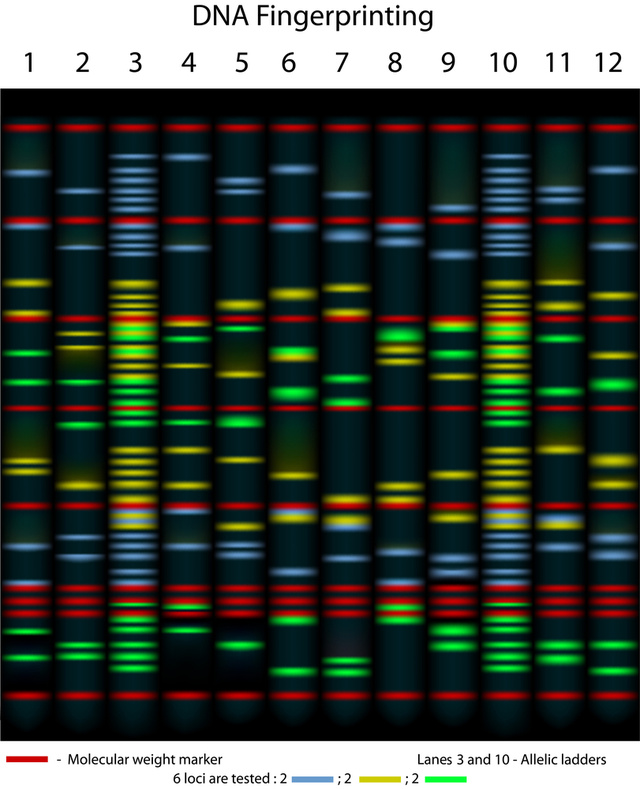

Every person has a unique DNA signature, which can be extracted from blood, hair, skin, or other sources. Also called chemical biometrics, it involves the identification of an individual by analysing segments of DNA. It’s done by isolating the DNA from human tissue, which in turn is cut using special enzymes, sorted, and passed through a gel. It then gets transferred to a nylon sheet, where radioactive probes are added to produce the pattern — the so-called DNA fingerprint.

To make a match, scientists use polymerase chain reaction — which amplifies specific regions of a DNA strand — and restriction fragment length polymorphism — the process of creating and differentiating DNA fragments. Both of these techniques produce genetic fingerprints that can yield a match with 99.99% accuracy. The technique is still expensive, but it’s coming down in price.

An example of DNA fingerprinting, 10 individuals are tested for 6 loci (Alila Medical Media/Shutterstock):

Relatedly, DNA can be used to create “mugshots” and predict the hair and eye colour of suspects. But once our DNA profiles are made available online, they can be hacked, stolen, and used for targeted marketing.

Body Odor

Image: Getty.

A Polytechnic University research group is working with the Spanish engineering consulting firm Ilía Systems Ltd. on the development of “personal odor” biometrics. Indeed, as anyone who’s worked with a bloodhound knows, we have recognisable patterns in our odor that remain fairly constant (though body odors vary on account of disease, diet change, and even mood swings). These researchers have developed a sensor that can detect volatile elements present within body odor with 85% accuracy.

Scarily, capturing someone’s body odor can be as easy as having them walk past a sensor. It’s far less intrusive than fingerprint readers or iris scanners.

Related research is also underway at the U.S. Department of Homeland Security. Its system, which will be used in lie detector tests, can track odor changes much as a polygraph tracks galvanic skin response.

Gait and Walking Style

Even if you’ve somehow managed to disguise yourself and your stinky smells, the peculiar way you walk will still give you away. Our gait can be measured, scanned, and cross-referenced like any other biosignature. Japanese researchers, for example, have developed a 3D imaging technique that captures gait and walking style with up to 90% accuracy. And their barefoot print analysis is at 99.6% accuracy, which could help airport security officials identify travellers as they walk through security without their shoes on.

Image: inf.ed.ac.uk.

Also, engineers from Southampton’s School of Electronics and Computer Science (ECS) have been working on a computer system that can analyse the gait of criminals caught on security cams and then compare it with that of a suspect. Indeed, sometimes investigators have only grainy and limited surveillance camera footage to work with.

Here’s how it works:

[A] working prototype is in operation in the form of an innovative tunnel that can track and record an individual’s gait. On first appearance the tunnel is a strange patchwork of multicoloured one-foot-squares. This is a novel form of chromakey — a digital image capturing technique often used in film production where the background can be ‘erased’ from the shot, leaving just the subject behind. The gait recognition version of chromakey differs from the film version because it must track individuals wearing a large array of colours, so different colours are used for each square. ‘We use the tunnel footage alongside other images of the individual walking in an everyday environment to build up a complete picture of how the individual moves.’ The tunnel uses eight cameras to record the movement of the individual as they walk, which is then recreated digitally using novel software back in the lab. Thus the individual can be viewed from any angle once they are digitally recreated. From there the team can characterise and map the unique walking patterns. The individual’s walk can then be recorded on a database and matched to CCTV footage.

Hand Geometry

This technique has been around since the 1980s and it’s fairly straightforward. Devices can be used to measure and record the length, width, thickness, and surface area of an individual’s hand while guided on a plate. These systems use a camera to capture a silhouette image of the hand.
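
As a rough illustration of how those four measurements could be compared, here is a minimal sketch. It assumes the measurements have already been extracted from the silhouette; the `HandTemplate` class, the 5% tolerance, and the sample numbers are hypothetical, and commercial devices may weigh features quite differently.

```python
import math
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class HandTemplate:
    """Four hand measurements, as listed in the text (units are assumed)."""
    length_mm: float
    width_mm: float
    thickness_mm: float
    area_mm2: float


def relative_distance(probe: HandTemplate, enrolled: HandTemplate) -> float:
    """Euclidean distance over relative differences.

    Dividing by the enrolled value keeps the large surface-area numbers
    from swamping the smaller length measurements.
    """
    return math.sqrt(
        sum(((p - e) / e) ** 2 for p, e in zip(astuple(probe), astuple(enrolled)))
    )


def verify(probe: HandTemplate, enrolled: HandTemplate, tolerance: float = 0.05) -> bool:
    """Accept the probe if it lies within an assumed 5% tolerance."""
    return relative_distance(probe, enrolled) <= tolerance


enrolled = HandTemplate(185.0, 84.0, 28.0, 14200.0)
same_hand = HandTemplate(186.0, 83.5, 28.2, 14150.0)
other_hand = HandTemplate(170.0, 90.0, 30.0, 13000.0)

print(verify(same_hand, enrolled))
print(verify(other_hand, enrolled))
```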

Motion Identification

Though motion identification may not be able to prove the identity of an individual (at least not yet, though that’s obviously coming), it can work to recognise certain physical activities. Researchers at Cornell University, for example, reverse engineered a Microsoft Kinect to identify common household activities like cooking and brushing teeth.

There’s also DARPA’s Mind’s Eye project — an attempt to create a camera with “visual intelligence.” Once operational, the system will not only be able to function as a conventional camera, it will also be able to recognise human activities and predict what might happen next. Should it encounter a potentially threatening scene or dangerous behaviour, it could sound the alarm and notify a human agent.

Signature Recognition

Again, like fingerprints and hand measurements, this one’s a classic biometric technique. When performing signature recognition (or handwriting analysis), experts consider static and dynamic aspects. Static analysis is a visual comparison between one scanned signature and another scanned signature, or a scanned signature against an ink signature. Advanced algorithms take care of the rest. Dynamic analysis works by capturing X, Y, T and P coordinates (pen position, time, and pressure) from the signing device.
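
To give a feel for the dynamic side, the sketch below reduces a stream of pen samples to a few summary numbers. It assumes each sample holds an x and y position, a timestamp, and a pressure reading; the `PenSample` type, the chosen features, and the sample values are hypothetical and not taken from any specific signature pad.

```python
import math
from typing import NamedTuple


class PenSample(NamedTuple):
    x: float  # horizontal pen position
    y: float  # vertical pen position
    t: float  # timestamp in seconds
    p: float  # pen pressure, assumed to be scaled 0..1


def dynamic_features(samples: list[PenSample]) -> dict[str, float]:
    """Summarise how a signature was written, not what it looks like."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    duration = samples[-1].t - samples[0].t
    if duration <= 0:
        raise ValueError("timestamps must increase")
    # Sum the straight-line distance between consecutive pen positions.
    path = sum(
        math.hypot(b.x - a.x, b.y - a.y) for a, b in zip(samples, samples[1:])
    )
    return {
        "path_length": path,
        "duration": duration,
        "mean_speed": path / duration,
        "mean_pressure": sum(s.p for s in samples) / len(samples),
    }


stroke = [
    PenSample(0.0, 0.0, 0.0, 0.2),
    PenSample(3.0, 4.0, 0.1, 0.6),
    PenSample(6.0, 8.0, 0.2, 0.4),
]
print(dynamic_features(stroke))
```

Two signatures that look identical on paper can still differ in speed and pressure, which is what makes the dynamic measurements harder to forge.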

Keystroke Signatures

This is a behavioural biometric in which software is used to measure the speed, rhythmic patterns, and other peculiarities found in our unique typing styles. This is particularly effective when scanning a password entry, which tends to be very consistent. Keystroke dynamics and/or recognition is probably one of the easiest biometric forms to implement and manage.
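
A minimal sketch of the idea, assuming the software can log when each key goes down and comes back up: it measures how long each key is held (dwell) and the gap between keys (flight), then compares a login attempt with an enrolled sample. The `KeyEvent` type, the timings, and the comparison by mean absolute difference are illustrative assumptions.

```python
from typing import NamedTuple


class KeyEvent(NamedTuple):
    key: str
    press_ms: int    # time the key went down
    release_ms: int  # time the key came back up


def timing_vector(events: list[KeyEvent]) -> list[int]:
    """Dwell time per key, followed by flight time between consecutive keys."""
    dwell = [e.release_ms - e.press_ms for e in events]
    flight = [b.press_ms - a.release_ms for a, b in zip(events, events[1:])]
    return dwell + flight


def mean_abs_diff(a: list[int], b: list[int]) -> float:
    """Average timing gap in milliseconds between two typing samples."""
    if not a or len(a) != len(b):
        raise ValueError("samples must be non-empty and of the same length")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


# Hypothetical timings for the first three keys of a password.
enrolled = [KeyEvent("p", 0, 90), KeyEvent("a", 150, 230), KeyEvent("s", 300, 385)]
attempt = [KeyEvent("p", 0, 95), KeyEvent("a", 160, 235), KeyEvent("s", 310, 390)]

gap = mean_abs_diff(timing_vector(enrolled), timing_vector(attempt))
print(gap <= 20)  # 20 ms is an assumed acceptance threshold
```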

The Future

Lastly, and in the future, our bodies could be scanned in any number of ways, including by electronic circuits integrated into our skin and by readers that can scan our blood.

Other sources: The Futurist, Tech News Daily, University of Bath, Biometrics Institute, University of Southampton, IrisID, Biometric Update, ID Control.

Follow me on Twitter: @dvorsky