Founded in 1958 to prevent technological surprises such as Sputnik, the Defence Advanced Research Projects Agency funds projects that are both outside the box and off the wall. Although DARPA gave us the Internet and GPS, plenty of its blue-sky ideas have crashed back down to Earth. Here are ten of them.

1) Mecha-Elephant

In 1966, DARPA commissioned a study to “identify the types of surface vehicles whose physical and performance characteristics would vastly improve the present limited capability of transporting cargo and personnel cross-country over various types of terrain to South Vietnam.”

The researchers concluded that top priority should be given to an R&D program for “a narrow-trail vehicle (NTV) that is capable of transporting personnel and cargo in mountainous terrain along narrow winding jungle trails with steep slopes, and across small marshes and shallow rivers and streams.”

Hmmm… something that could carry troops and cargo for extended distances over mountainous terrain and steep slopes… Hey, didn’t Hannibal move an entire army across the Alps using elephants?

And thus began one of DARPA’s most infamous endeavours: designing a “mechanical elephant” that could traverse difficult terrain on “servo mechanism legs.” When then-DARPA Director Eberhardt Rechtin found out about the project, he immediately terminated it, calling it a “damn fool” idea that would destroy DARPA’s credibility if Congress ever found out.

2) “Advancing” Our Paranormal Abilities

In the early 1970s, DARPA commissioned the Rand Corporation to assess “scientific and technical activities where substantial disparities exist between the respective U.S. and USSR research programs on paranormal phenomenon.” In other words, DARPA was concerned about the growing psychic gap with the Soviet Union.

The report was quite thorough, describing Soviet “advances” in the fields of telepathy, precognition, telekinesis and dermo-optics (the “dermal sensing of visual information”). The study concluded:

- Over forty years of research in the United States have failed to significantly advance our understanding of paranormal phenomena.

- Soviet research is much more oriented toward biological and physical theorizing than is U.S. research.

- If paranormal phenomena do exist, the thrust of Soviet research appears more likely to lead to explanation, control and application than is U.S. research.

DARPA invested millions trying to identify and recruit telepaths who could conduct remote espionage (and, presumably, torment Soviet leaders by bending spoons in the Kremlin).

No records exist on how much of that money was spent on evaluating the efficacy of tinfoil hats.

3) Let’s Make A Synthetic Polio Virus

In the late 1990s, concerns over biological weapons prompted DARPA to establish the “Unconventional Pathogen Countermeasures Program,” in order to “develop and demonstrate defensive technologies which afford the greatest protection to uniformed warfighters, and the defence personnel who support them, during U.S. military operations.”

DARPA failed to inform anyone that one of its “unconventional” projects was a $US300,000 ($393,939) grant to a trio of scientists who thought it would be a neat idea to synthesise polio. They constructed the virus using its genome sequence, which was available on the Internet, and obtained the genetic material from companies that sell made-to-order DNA.

And then, in 2002, the scientists published their research — basically, a how-to guide — in the journal Science. Eckard Wimmer, a professor of molecular genetics and the leader of the project, defended the research, saying that he and his team had made the virus to send a warning that terrorists might be able to make biological weapons without obtaining a natural virus.

This project would have been controversial at any time, but publishing it less than a year after the September 11 terrorist attacks was truly clueless — prompting panicky headlines such as “New Life for Polio?”, “A New Terror Risk,” and “Surfing for a Satan Bug.”

Most of the scientific community called it an “inflammatory” stunt without any practical application. Polio would not be an effective terrorist bioweapon because it’s not as infectious and lethal as many other pathogens. And, in most cases, it would be easier to obtain a natural virus than to build one from scratch. The only exceptions would be smallpox and Ebola, which would be nearly impossible to synthesise from scratch using the same technique.

“It’s critically important to hold a national dialog among biologists, health care experts, politicians, and the general public about the future of biological work with biological weapons implications,” said Steven Block, a Stanford University expert on the applications of biotechnology to biowarfare. “But publishing research like this is a poor way indeed to open the conversation.” Block later said that the incident set back discussions about how to properly defend against biological weapons by “at least three years,” since new calls for regulation by Congress “had a chilling effect.”

4) Hydra, the Drone Mothership

Hail Hydra! Named for the multi-headed creature from Greek mythology, DARPA’s Hydra project, announced in 2013, aims to develop an undersea network of platforms that could be deployed for weeks or months in international waters. The submerged platforms would be capable of deploying both underwater and aerial drones. In other words, Hydra is a drone mothership.

The rationale for the project, according to DARPA:

Even the most advanced vessel… can only be in one place at a time, making the ability to respond increasingly dependent on being ready at the right place at the right time. With the number of U.S. Navy vessels continuing to shrink due to planned force reductions and fiscal constraints, naval assets are increasingly stretched thin trying to cover vast regions of interest around the globe. To maintain advantage over adversaries, U.S. naval forces need a way to project key capabilities in multiple locations at once, without the time and expense of building new vessels to deliver those capabilities.

Bruce Berkowitz, a national security analyst and expert on undersea drones, has described the project as being a tad too ambitious: “The hybrid submarine/aircraft carrier idea has been around since the 1930s, when the French put a seaplane hangar on the 3,000-ton Surcouf, and the technology might be feasible. But a more likely solution is to integrate [drones] operated from land and surface ships.”

Another concern is that filling international waters with undersea killer robot launch facilities could make other countries uneasy. “Pre-deploying large amounts of warfighting hardware, including ‘non-lethal’ autonomous weapons and possibly lethal ones as well, over broad areas of the Western Pacific, or any other waters well outside the recognised boundaries of U.S. territorial waters, is potentially provocative and offensive to China and other nations,” says Mark Gubrud, at Princeton University’s Woodrow Wilson School of Public and International Affairs. “How would we react if China placed similar systems on the seabed offshore of the United States, or around various geographic locations where it is thought that American and Chinese forces might clash?”

5) Building an AI for War

It sounds a lot like Skynet. Between 1983 and 1993, DARPA spent $US1 billion on computer research to achieve machine intelligence that could support humans on the battlefield or, in some cases, act autonomously.

The project was called the Strategic Computing Initiative (SCI). According to DARPA:

Improvements in the speed and range of weapons have increased the rate at which battles unfold, resulting in a proliferation of computers to aid in information flow and decision making at all levels of military organisation. . . A countervailing effect on this trend is the rapidly decreasing predictability of military situations, which makes computers with inflexible logic of limited value. . . Confronted with such situations, leaders and planners will . . . be forced to rely solely on their people to respond in unpredictable situations. Revolutionary improvements in computing technology are required to provide more capable machine assistance in such unanticipated combat situations. . . . Improvements can result only if future computers can provide a new quantum level of functional capabilities.

Translation: Faster battles push us to rely more on computers, but current computers cannot handle the increased uncertainty and complexity. This means that we have to rely on people. But without computer assistance, people can’t cope with the complexity and unpredictability, either. So we need new, more powerful computer systems that can actually think.

The goal of SCI was nothing less than full machine intelligence. “The machine…would run ten billion instructions per second to see, hear, speak, and think like a human,” wrote Alex Roland with Philip Shiman in their history of the project, Strategic Computing: DARPA and the Quest for Machine Intelligence, 1983-1993. “The degree of integration required would rival that achieved by the human brain, the most complex instrument known to man.”

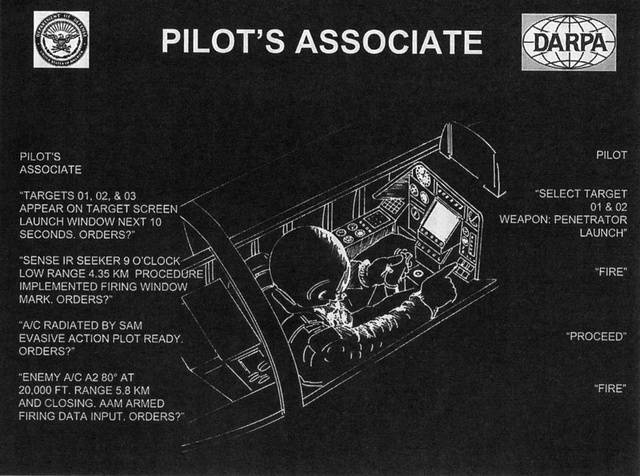

This artificial intelligence would supposedly enable three specific military applications. For the Army, DARPA proposed a class of “autonomous land vehicles,” able not only to move around independently, but also to “sense and interpret their environment, plan and reason using sensed and other data, initiate actions to be taken, and communicate with humans or other systems.” For the Air Force, DARPA envisioned a “pilot’s associate” to aid aircraft operators who are “regularly overwhelmed by the quantity of incoming data and communications on which they must base life or death decisions,” in tasks ranging from the routine to those that are “difficult or impossible for the operator altogether” and require the “ability to accept high-level goal statements or task descriptions.” Finally, the Navy would be given a “battle management system,” “capable of comprehending uncertain data to produce forecasts of likely events.”

The expectation of creating full artificial intelligence during this era was derided as “fantasy” by critics within the computer industry. Another point of contention: war is unpredictable because human behaviour can be unpredictable, so how could a machine possibly be expected to forecast and respond to events?

And, from the very outset, there were concerns that SCI would be given control of the Reagan administration’s Strategic Defence Initiative.

As a 1985 article in the Bulletin of the Atomic Scientists observed:

Much of the technology push of SCI fits comfortably into the ballistic missile defence framework. The Initiative states: “An extremely stressing example. . . is the projected defence against strategic nuclear missiles, where systems must react so rapidly that it is likely that almost complete reliance will have to be placed on automated systems.” The government’s Fletcher Report, which analyses the proposed “star wars” defence system, claims: “It seems clear. . . that some degree of automation in the decision to commit weapons is inevitable if a ballistic missile defence system is to be at all credible.” Whatever one’s assessment of “star wars,” this analysis supports our general concern with the increasing reliance on automatic decision-making in critical weapons systems.

In the end, though, the debate was moot. Much like the Strategic Defence Initiative, the Strategic Computing Initiative’s goals proved to be technologically out of reach. As the Bulletin presciently warned nearly a decade before the project’s cancellation:

Over the years, the lure of artificial intelligence has led to a growing appetite for research funding. The appetite, in turn, has led the professional community to make promises, many of which have turned out to be more difficult to fulfil than was anticipated… These unfulfilled promises are frequently a combination of ordinary naivete, unwarranted optimism and a common if regrettable tendency to exaggerate in scientific proposals. Shortcomings are often masked by subtle semantic shifts. When we fail to instill “reasoning” or “understanding” in our machines, we tend to adjust the meaning of these terms to describe what we have in fact accomplished. In the process, we obscure the real meaning of our claims for artificial intelligence.

6) The Hafnium Bomb

DARPA spent $US30 ($39) million to build a hafnium bomb — a weapon that never existed and probably never will. Its would-be creator, Carl Collins, was a physics professor from Texas who, in 1999, claimed that he had used a dental X-ray machine to release energy from a trace of the isomer of hafnium-178. An isomer is a long-lived excited state of an atom’s nucleus that decays by the emission of gamma rays. In theory, isomers might store millions of times more triggerable energy than that contained in chemical high explosives.

Collins claimed that he had unlocked the secret. If so, then a hafnium bomb the size of a hand grenade could have the force of a small tactical nuclear weapon. Better still, from the perspective of defence officials, because the triggering was an electromagnetic phenomenon, not nuclear fission, a hafnium bomb would not release radiation and might not be covered by nuclear treaties.

Just one small problem: nobody else was able to reproduce the results of Collins’ experiments, including a team of physicists from the Lawrence Livermore National Laboratory, in collaboration with scientists at the Los Alamos and Argonne national labs. And a report published by the Institute for Defence Analyses — a federally funded research arm of the Pentagon — concluded that Collins’ work was “flawed and should not have passed peer review.”

The story should have ended there. But, according to a 2004 investigative report by the Washington Post:

Martin Stickley arrived at DARPA as a program manager in 2002… According to two of the participants in Collins’s dental X-ray experiment, Stickley was a believer… For Stickley, a promoter of isomer research, the timing was fortunate… the 2002 Nuclear Posture Review, unveiled by Secretary of Defence Donald Rumsfeld, emphasised that the United States needed new nuclear as well as non-nuclear bombs to destroy difficult targets, such as buried bunkers that could hide terrorists or weapons of mass destruction.

Stickley gave a PowerPoint briefing to a review panel in which he promoted the hafnium program as the next revolution in warfare. Hafnium bombs could be loaded in artillery shells, according to a copy of the briefing slides, or they could be used in the Pentagon’s missile defence systems to knock incoming ballistic missiles out of the air. He encapsulated his vision of the program in a startling PowerPoint slide: a small hafnium hand grenade with a pullout ring and a caption that read, “Miniature bomb. Explosive yield, 2 KT [kilotons]. Size, 13cm diameter.” That would be an explosion about one-seventh the power of the bomb that obliterated Hiroshima in 1945.

Hafnium would have been just what the secretary had ordered, if it had worked.

It didn’t.

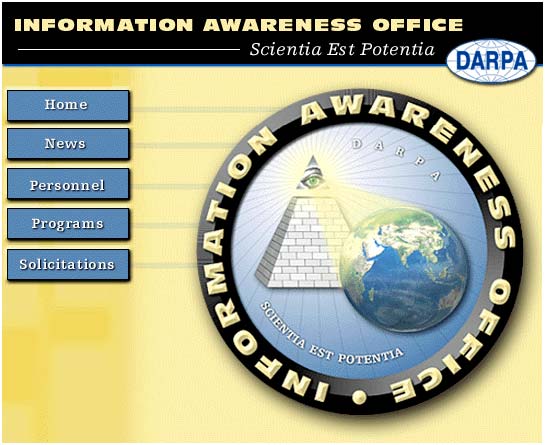

7) Total Information Awareness

Here’s some belated advice for DARPA. If you’re going to establish a massive data-mining program:

- Don’t give it a creepy, Orwellian name like “Total Information Awareness.”

- If you’re trying to gain the trust of Congress and the public, don’t pick John Poindexter — a key player in the Iran-Contra Scandal — to oversee the program.

- Design a logo that doesn’t look like the Eye of Sauron atop a pyramid, casting its gaze across the entire planet. I’m not a conspiracy theorist, but even I’m convinced that it’s a secret symbol for the Illuminati cabal.

In 2002, DARPA envisioned TIA as a massive counter-terrorism database that would use advanced methods for data collection, processing and analysis. The ultimate goal was to preempt terrorist attacks. Poindexter, who was the head of the program, declared in a speech that the TIA is “about creating technologies that would permit us to have both security and privacy. More than just making sure that different databases can talk to one another, we need better ways to extract information from those unified databases, and to ensure that the private information of innocent citizens is protected.”

At the time, the technology capable of accomplishing some of the program’s data mining goals hadn’t even been invented yet. For instance, one component of the system was a technology that would enable unilingual English speakers to monitor information in other languages. To that end, DARPA began awarding contracts for the design and development of TIA system components in the summer of 2002.

An ensuing firestorm in the press over privacy concerns prompted Congress to kill TIA’s funding in September 2003. The Department of Defence’s Inspector General concluded: “Total Information Awareness could prove valuable in combating terrorism, but DARPA could have better addressed the sensitivity of the technology to minimise the possibility of any governmental abuse of power and could have assisted in the successful transition of the technology into the operational environment.” Poindexter resigned from the government.

And if this program sounds curiously similar to what the National Security Agency has been doing, it’s no coincidence. As far back as 2006, the National Journal learned that the program was still alive: it had been quietly moved from DARPA to another group, which built technologies primarily for the NSA.

And the NSA version was worse. As reporter Shane Harris wrote in 2012:

After TIA was officially shut down in 2003, the NSA adopted many of Mr. Poindexter’s ideas except for two: an application that would “anonymize” data, so that information could be linked to a person only through a court order; and a set of audit logs, which would keep track of whether innocent Americans’ communications were getting caught in a digital net.

Had the agency’s leaders actually listened to everything Mr. Poindexter had to say, they might not find themselves telling the American people: “We’re not spying on you. Trust us.”

TIA may never have come to fruition, but it did lead to one successful project. The creators of the science fiction show Person of Interest say that TIA was one of their inspirations for the idea of the artificially intelligent Machine, created to keep the entire country under surveillance.

8) The Flying Humvee

In 2010, DARPA unveiled a new concept for troop transport. The Transformer — otherwise known as the Vertical Takeoff and Landing Roadable Air Vehicle — was envisioned as a flying Humvee capable of carrying up to four soldiers.

According to DARPA’s initial solicitation announcement, the Transformer “provides unprecedented options to avoid traditional and asymmetrical threats while avoiding road obstructions. Transportation is no longer restricted to trafficable terrain that tends to make movement predictable. The vehicle can avoid Improvised Explosive Devices (IEDs) and ambushes, while also allowing the warfighter to approach targets from directions that give our warfighters the advantage in mobile ground operations.”

The concept got high marks for its inherent coolness, but not so much for practicality. As Spencer Ackerman observed:

It’s difficult to imagine a military problem that the Transformer actually solves. DARPA initially envisioned it as an answer to homemade bombs that disable ground vehicles; just glide above the ground to avoid the boom. But assuming that the U.S. is even involved in a land conflict after Afghanistan to make that problem salient, why wouldn’t insurgents plant those bombs to lure the Transformer into the air and then hit it with a rocket-propelled grenade or anti-tank missile?

After all, the thing only has to stop small arms fire. Armoring it more seriously will add weight, imperiling its ability to stay aloft and stressing the fuel system. Would the Transformer come equipped with tiny anti-missile weapons?

Outside of that, would the Marines use the thin-skinned Transformer as a ship-to-shore aircraft, replacing their longed-for swimming tanks as a way of storming an enemy beach?… For that matter, in an age of tight defence budgets, is it sensible to solve the problem of improvised explosive devices by building a hybrid truck, helicopter and aeroplane?

In 2013, DARPA changed the direction of the program, so that it became the Aerial Reconfigurable Embedded System (ARES). ARES (image above) is conceived as an unpiloted payload system with detachable modules designed for specific missions: carrying surveillance equipment, evacuating wounded or hauling up to 3,000 pounds of cargo. It would primarily serve ground units in the field that don’t have helicopters. A cargo drone is not as exciting as a flying Humvee, but arguably more practical.

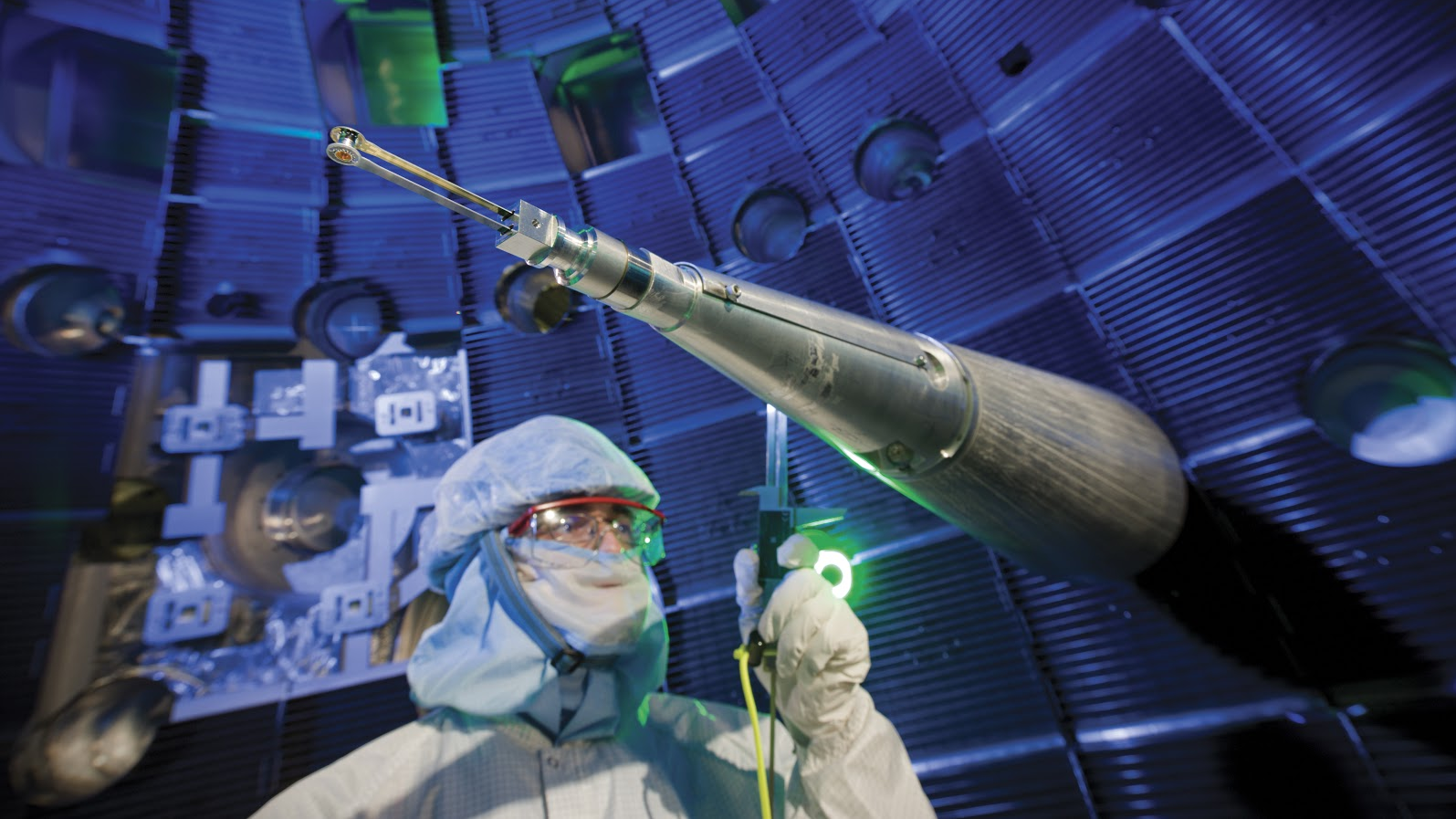

9) A Hand-Held Fusion Reactor

This one is a bit of a mystery…a $US3 ($4) million project that appeared in DARPA’s Fiscal Year 2009 budget, never to be heard about again. [Insert conspiracy theory here]

What is known is that DARPA believed it was possible to construct a fusion reactor the size of a microchip:

The Chip-Scale High Energy Atomic Beams program will develop chip-scale high-energy atomic beam technology by developing high-efficiency radio frequency accelerators, either linear or circular, that can achieve energies of protons and other ions up to a few mega electron volts. Chip-scale integration offers precise, micro actuators and high electric field generation at modest power levels that will enable several order of magnitude decreases in the volume needed to accelerate the ions. Furthermore, thermal isolation techniques will enable a high efficiency beam to power converters, perhaps making chipscale self-sustained fusion possible.

At least it cost less than the hafnium bomb.

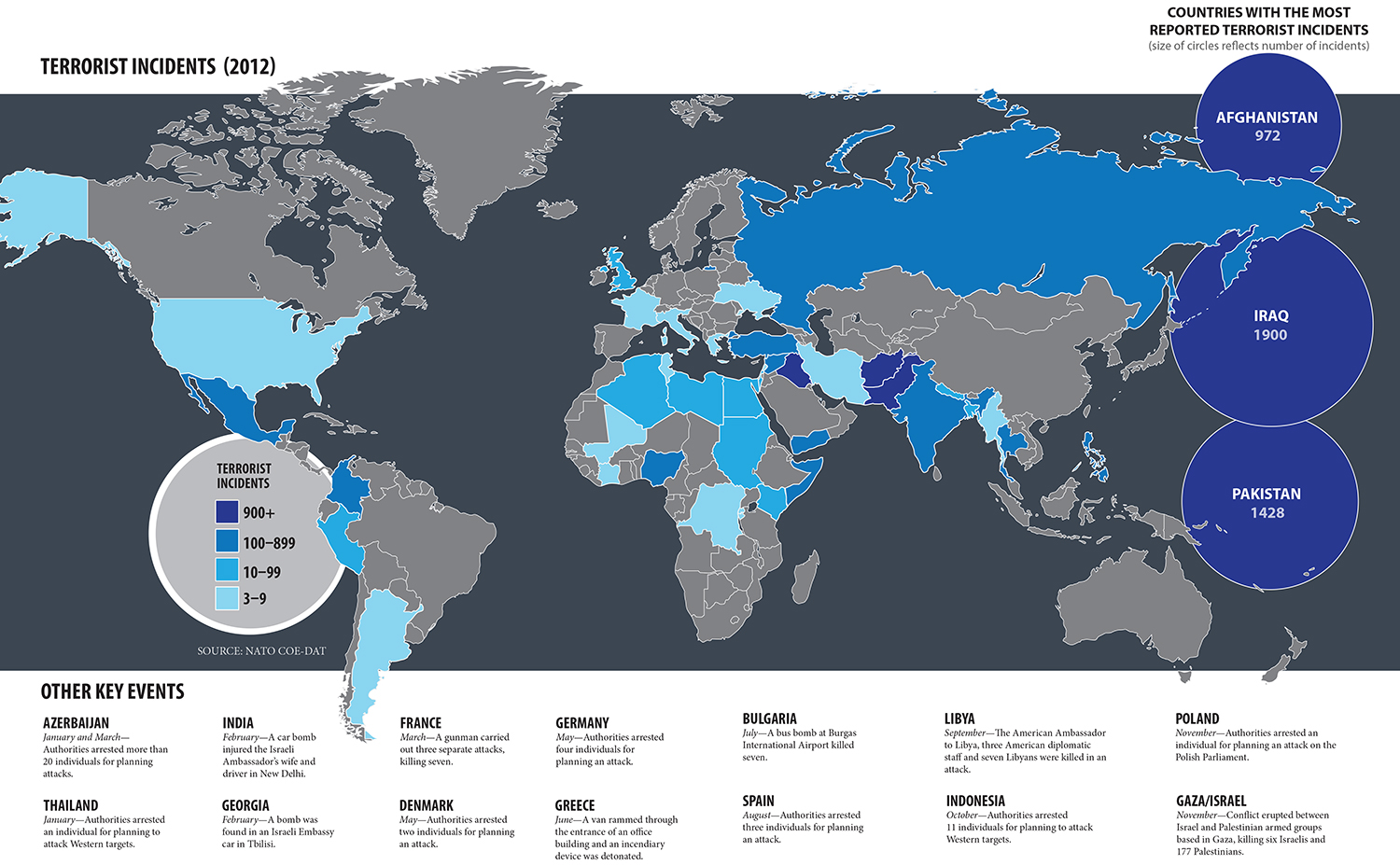

10) A Futures Market in Terrorism

Several DARPA projects have focused on developing ways to anticipate future events. And what better way to do that than to tap into the futures market?

The project, dubbed Future Markets Applied to Prediction, or FutureMAP, intended to launch a public website that would encourage anonymous speculators to bet on the likelihood of terrorist attacks, assassinations and coups in the Middle East.

Two angry senators disclosed the project in July 2003, hoping to head off the registration of investors. Senator Ron Wyden (D-OR) and Senator Byron Dorgan (D-ND) said more than $US500,000 ($656,565) in taxpayer money had already been spent to develop the project, and the Pentagon had requested an additional $US8 ($11) million over the next two years.

“Spending taxpayer dollars to create terrorism betting parlors is as wasteful as it is repugnant,” Wyden and Dorgan said in a letter to the Pentagon. “The American people want the federal government to use its resources enhancing our security, not gambling on it.”

Initially, the Pentagon defended the project, releasing a statement that read:

“Research indicates that markets are extremely efficient, effective and timely aggregators of dispersed and even hidden information. Futures markets have proven themselves to be good at predicting such things as elections results; they are often better than expert opinions. DARPA has undertaken this research as part of its effort to investigate the broadest possible set of new ways to prevent terrorist attacks and will continue to reevaluate the technical promise of the program before committing additional funds beyond Fiscal Year 2003.”

But the outcry was such that the Pentagon quickly distanced itself from FutureMAP, calling it an example of imaginative “excess,” and shut it down.

Still, FutureMAP had its defenders, including financial journalist James Surowiecki, who wrote in his book, The Wisdom of Crowds:

Killing the project ensured only that we would have no idea whether decision markets might have something to add to our current intelligence efforts… Let’s admit that there’s something viscerally ghoulish about betting on an assassination attempt. But, let’s also admit that U.S. government analysts ask themselves every day the same questions that traders would have been asking: How stable is the government of Jordan? How likely is it the House of Saud will fall?… If it isn’t immoral for the U.S. government to ask these questions, it’s hard to see how it’s immoral for people outside the U.S. government to ask them.