Nvidia has announced its first in-car artificial intelligence supercomputer at CES. It sounds like it should turn any vehicle into a computational powerhouse, capable of performing 24 trillion deep-learning operations every single second.

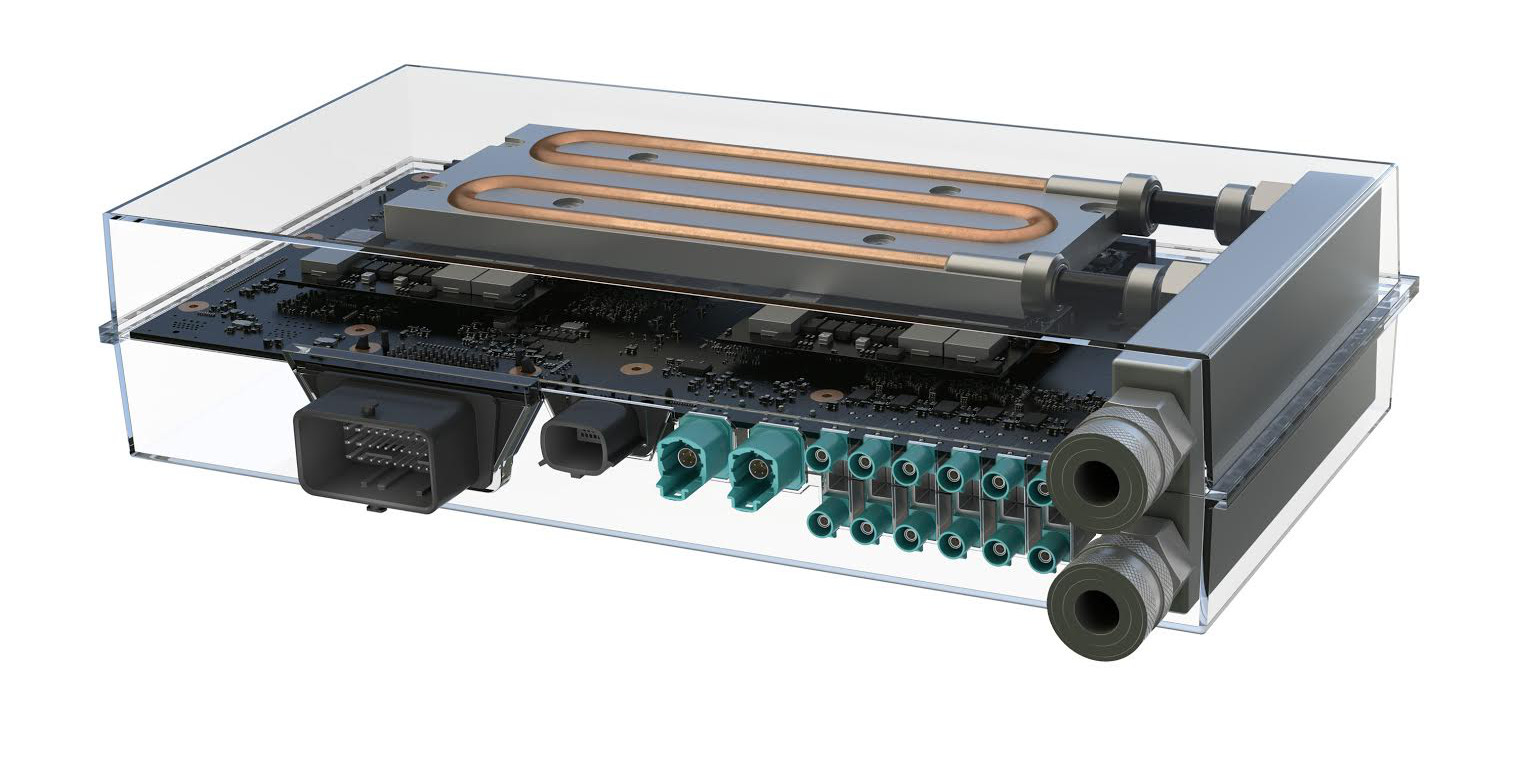

The new computer, called Drive PX 2, is described in a press release as being ‘the size of a lunchbox, with the computing capability of 150 MacBook Pros’. The second half of that statement is backed by two Tegra processors and two discrete GPUs. Nvidia claims it works quickly enough to gobble up data from 12 video cameras, along with lidar, radar and ultrasonic sensors, and then process those streams of information to make sense of the outside world.

The result is a computer that can work through 24 trillion deep-learning operations per second. TechCrunch reports from CES that, in practice, this means it can process up to 2,800 images per second using a neural-network-based algorithm. That should be enough for a car to orient itself accurately in the world, plan routes and work out what to do in the face of everyday road hazards, whether bad drivers, erratic cyclists or simply debris. These are, after all, some of the most difficult problems facing autonomous vehicles.

Volvo has already announced that it will test the new supercomputer in 100 of its XC90 SUVs, which will be made available for public use next year.

Gizmodo’s on the ground in Las Vegas! Follow all of our 2016 CES coverage here.