From asteroid impacts to robot takeovers to superbug pandemics, there are a thousand ways human civilisation could be destroyed. Most of us prefer not to dwell on the End Times, but for the folks at the Global Catastrophic Risk Institute, the apocalypse is just another day at work.

AU Editor’s Note: This story is from Gizmodo’s Survival Week.

In the spirit of Survival Week, we sat down with the Institute’s co-founder Seth Baum, and research associate Dave Denkenberger, to learn how we’re all going to die. Just kidding: We also talked about what can be done to prevent the apocalypse. Below is a condensed and lightly edited version of our interview.

Gizmodo: How do you define “catastrophic risks,” and how does the Global Catastrophic Risk Institute study them?

Baum: In simple terms, a catastrophic risk is something that could permanently destroy global human civilisation. A lot of these risks are things you’ve probably already thought of, like supervolcanoes, asteroid strikes, nuclear war, certain biotechnologies, or global warming.

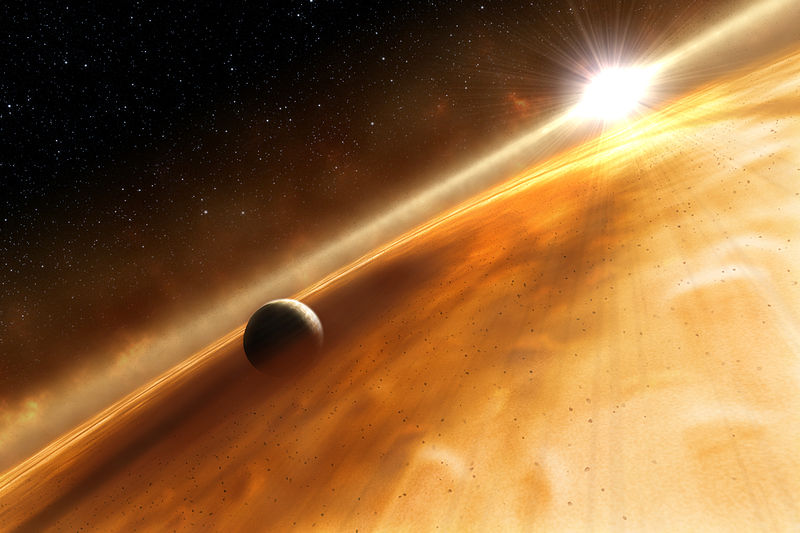

In slightly more precise terms, I’m interested in whether human civilisation could survive beyond the lifetime of our home planet. In a few billion years, our Sun will grow so hot that the Earth won’t be able to support life anymore. I’m especially interested in catastrophes that could prevent humans from being somewhere else by that time.

In a billion years or so, the ageing, brightening sun will destroy Earth’s biosphere. Image credit: Wikimedia

At the Global Catastrophic Risk Institute, our mission is to understand the threats to the survival of human civilisation, and to help people make better decisions to confront those threats. We use a risk analysis research approach, meaning we look at the probability of different disaster scenarios and the severity of the impact. Then, we use that information to identify the best opportunities to reduce the risk.

A lot of us have engineering backgrounds, including myself and Dave, as well as Tony Barrett, who co-founded the Institute with me a few years back. One thing that our engineering backgrounds bred is the ability to look at all the great technical details of catastrophic risks. That’s part of our specialty.
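[Editor’s note: The risk analysis approach Baum describes, weighing the probability of a scenario against the severity of its impact, can be sketched in a few lines of Python. This is a minimal illustration, not the Institute’s actual method. The `Scenario` class, the `rank_by_expected_loss` helper and every number below are hypothetical placeholders chosen only to show the arithmetic.]

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    annual_probability: float  # hypothetical placeholder, between 0 and 1
    severity: float            # hypothetical placeholder, 0 (no harm) to 1 (total loss)

    @property
    def expected_loss(self) -> float:
        # Classic risk-analysis product: probability times severity.
        return self.annual_probability * self.severity


def rank_by_expected_loss(scenarios):
    """Return scenarios ordered from highest to lowest expected loss."""
    return sorted(scenarios, key=lambda s: s.expected_loss, reverse=True)


# Made-up scenarios, deliberately not tied to any real-world estimate.
scenarios = [
    Scenario("Scenario C", annual_probability=0.1, severity=0.001),
    Scenario("Scenario A", annual_probability=0.01, severity=0.5),
    Scenario("Scenario B", annual_probability=0.001, severity=1.0),
]

ranked = rank_by_expected_loss(scenarios)
for s in ranked:
    print(f"{s.name}: expected loss {s.expected_loss:.4f}")
```

A ranking like this is only a starting point. As Baum notes, the aim is to use it to find the best opportunities to reduce risk, which also depends on how much a given intervention would lower the probability or the severity.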

We seem to be a pretty apocalypse-obsessed society these days. Do you think this reflects a real increase in the likelihood that we’re going to destroy ourselves?

Denkenberger: I think the big picture is that when we didn’t have civilisation and high technology, we only had the natural risks: things like asteroids, supervolcanoes and pandemics. But now that we do have a technological civilisation, there are a host of new risks, like nuclear war and molecular manufacturing. If, say, we built a lot of tiny robots that can make copies of themselves, that could get out of hand and do a lot of damage. We didn’t have that threat ten thousand years ago.

Another important observation is that as a society, we are much more integrated than we used to be. We have global trade. In a way, that makes us more resilient, because a local catastrophe like a drought doesn’t have to lead to starvation if we can ship food in from elsewhere.

But integration also means our entire civilisation could fall. Now that we travel globally, we’re more susceptible to a global pandemic. In a way, we’re more resilient to local disasters, but we have a higher probability of a worldwide disaster wiping us out.

Baum: One point I’d like to add that could be pertinent for your Survival Week coverage: As our civilisation becomes more modernised, urbanised, and specialised, we have fewer and fewer people who know how to support themselves, given what’s available to them. Fewer people know how to farm, fewer people know how to clean water and build machines: all that basic stuff that we’d be on our own for in the absence of functional, modern civilisation.

But today we have the prepper movement, the survivalist movement. Some people write preppers off as a little kooky, but personally, I’m glad they’re out there. I’m glad there are people who are teaching themselves to grow food, people finding plots of land where they can keep going. It might not be needed for the specific scenarios they’re thinking of, but my personal feeling is that humanity as a whole is more resilient because of them.

If you had to pick a top threat to humanity, what would it be?

Baum: That depends on what time period you’re talking about. For the next twelve months, I would say the two highest things on the list by a pretty large margin are a nuclear war (for example, if the situation in Ukraine got worse) or a disease outbreak. Those are things that could happen right now and destroy us within twelve months’ time. If you look 20, 30, 40 years out, then scenarios involving new types of technology become more likely.

Russia’s Sarychev Volcano, erupting in 2009 as seen from space. Massive volcanic eruptions can lead to abrupt climate change and have been implicated in previous mass extinctions on Earth. Image credit: Wikimedia

Denkenberger: I would largely agree with that. I’d also include some risks that others might not, including things that could cause a ten per cent global agricultural shortfall, like a volcanic eruption the size of the one in 1815 that caused the year without a summer, abrupt climate change, or multiple breadbasket failures on multiple continents at the same time. A ten per cent shortfall might not sound so bad, but the reality is that food prices would get very high and a billion people could easily be priced out of food. There could be panic and conflict, and that could escalate into nuclear war. Even some of these medium-sized catastrophes could spiral out of control.

Devastating climate change seems a more likely risk than a massive asteroid impact. But the latter would almost certainly have large, immediate and devastating repercussions. How do we decide which of these risks to focus our resources on?

Baum: One point I would make is that to a pretty large extent we don’t have to choose. A lot of the specific actions we should take to protect ourselves against one risk also protect us against other risks. I would cite Dave’s work on protection against food catastrophes as a prime example. You’d take the same steps to help address the food crisis following a volcanic eruption as you would following an asteroid impact.

Dave, can you tell us about your research on improving resilience to food catastrophes?

Denkenberger: The original scope of my work was to look at catastrophes that could block the sun and destroy conventional agriculture. The assumption here is that we only have a few months of food storage globally, so most people are going to die and we could go extinct. I was reading a journal article premised on how fungi took over the planet after a previous mass extinction. The conclusion of the paper was, maybe when the humans go extinct, the world will be ruled by mushrooms again. And I said, wait a minute, why don’t we eat the mushrooms instead of going extinct?

In a world without sunlight, mushrooms might be the key to humanity’s survival. Image credit: Wikimedia

So then I thought, what are some ways we could get food with the sun blocked? In general, we’d want to focus on things that can breed fast and survive off energy sources we can’t. Mushrooms that digest wood are one possible answer. There are also bacteria that grow on natural gas. I found out that there’s a company that’s using natural gas as an energy source for certain bacteria, and turning the bacteria into fish food.

I ended up writing a book called Feeding Everyone No Matter What. A colleague and I have done some calculations, and we think we could ramp up some of these alternate food supplies within one year, if we cooperated globally and highly prioritised this. It won’t necessarily cost a lot of money [to get prepared], especially if we retrofitted some of our existing infrastructure, like we did in World War II to produce aeroplanes. [Editor’s note: During World War II, automobile manufacturers joined forces with the military to produce aircraft components, helping America produce hundreds of thousands of aeroplanes within a few years.]

I’d also mention that if billions are starving, not only are we not going to care about saving species, we’d actively eat species to extinction. I’ve estimated that preserving 100 individuals from all 5,000 mammal species would take a thousandth as much food as feeding all 7 billion humans. If we can feed humans, we could help preserve biodiversity as well.
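[Editor’s note: The arithmetic behind that estimate can be checked with a short Python sketch. It uses only the figures quoted above. The function name and the "human-equivalents of food" unit are our own illustrative assumptions, not part of Denkenberger’s published calculation.]

```python
# Figures quoted in the interview.
INDIVIDUALS_PER_SPECIES = 100
MAMMAL_SPECIES = 5_000
HUMANS = 7_000_000_000
FOOD_DIVISOR = 1_000  # "a thousandth as much food"


def implied_food_budget():
    """Return (animals preserved, food budget in human-equivalents,
    implied human-equivalents of food per animal)."""
    animals = INDIVIDUALS_PER_SPECIES * MAMMAL_SPECIES
    budget = HUMANS // FOOD_DIVISOR  # food for this many humans
    return animals, budget, budget / animals


animals, budget, per_animal = implied_food_budget()
print(f"Animals preserved: {animals:,}")
print(f"Food budget: enough for {budget:,} humans")
print(f"Implied average per animal: {per_animal} human-equivalents")
```

In other words, the estimate covers 500,000 animals on the food that would feed 7 million people, which implies an average allowance of 14 people’s worth of food per animal.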

If you had the attention of Congress, what would you ask them to do?

Denkenberger: I’d ask for money to do some planning and targeted R&D on developing alternate foods. And really, if you’re only spending $US100 million, it might not require an act of Congress. I have forthcoming work on how cost effective this would be, and also on the urgency. Every day or year we delay, we expose ourselves to more risk.

Baum: I would shy away from thinking in terms of one big solution that could take care of everything. If I had Congress’s attention, speaking offhand, I’d promote a broader effort to work on the full space of these risks. We can do more than one thing at a time!

For instance, we’re pretty sure the risk from asteroids is a fair bit smaller than the risk from, say, war or AI. But at the same time, if you’re NASA, figuring out how to prevent an asteroid from hitting the Earth is a good use of your time. I think what ultimately would be best is to have a broad network of programs taking care of everything that needs to be done in a coordinated fashion, so that we’re not duplicating efforts.

Does thinking about the destruction of humanity every day get you down?

Denkenberger: Optimists tend to ignore the risks. Pessimists tend to take the risks seriously but don’t think we can do anything about them. And not enough work gets done either way. I’d say I’m more in the optimist camp, but now I take the risks seriously, and I do think we can do something about them.

Baum: Yeah, OK, I have my moments when it kinda settles in exactly what we’re talking about here. But on a day-to-day basis, this is a job, and you get used to it. A good comparison here is medical doctors. They’re in life-and-death situations every day, and most of those situations are much more immediately emotional than the analysis we do on computers. And they’re able to distance themselves emotionally from it, so that on a day-to-day basis, they can just keep going. It needs to be that way or you’d just wear out. The other side of it is that this is deeply fascinating work.