A team of British researchers have a provocative hypothesis: People like robots more when they exhibit the same sorts of flaws that characterise humans. This makes some sense; after all, the notion of a perfect, all-knowing robot is the stuff of dystopian science fiction. But do you know what's worse than a perfect, all-knowing robot? A flawed robot that will screw up and kill you.

The challenge of programming a robot with a personality is a fascinating one. It's one that we've noodled on since the earliest stories about automatons and joked about in Hollywood blockbusters like Short Circuit and WALL-E. The aforementioned researchers, a team of computer scientists from the University of Lincoln in the United Kingdom, recently presented the findings of their study on the matter at the International Conference on Intelligent Robots and Systems in Hamburg. (Imagine the after parties at that get-together.)

The team argues that people responded well to the so-called "personality uncanny valley." That is, people accepted the robots more readily when the robots were kind of dumb and forgetful. That sort of makes sense. Humans are dumb and forgetful, and humans tend to accept other humans.

“People seemed to warm to the more forgetful, less confident robot more than the overconfident one,” John Murray, a lecturer at the University of Lincoln, told Motherboard. “We thought that people would like a robot that remembered everything, but actually people preferred the robot that got things wrong.”

You know what, though, John? I do not want my robot companion to get things wrong. Sure, it’s funny if you ask it a simple maths problem, and it goofs up the answer. But when I ask my own personal Rosie to drop the knife, I don’t want her to drop it into my belly.

That's an extreme example of a fuck up, one that you might think would be easily avoided by programming a robot with Isaac Asimov's "Three Laws of Robotics." Then again, Asimov's laws can't actually protect us. Furthermore, one of the big challenges in robotics right now is coping with our nagging suspicion that all robots are out to get us. This suspicion is well-founded, to a degree, since there are many cases on record of robots accidentally killing humans. However, one could also blame Hollywood's depictions of robots as powerful and even evil death machines. (If you don't believe me, just watch Ex Machina.)

None of this is to say that we shouldn't experiment with different types of personalities for robots. Maybe we really would like a robot that acts like a pal, sort of like TARS from Interstellar. In the long run, though, it seems likely that people won't like a robot that gets things wrong. People are scared of robots getting things wrong. We screw up enough on our own.

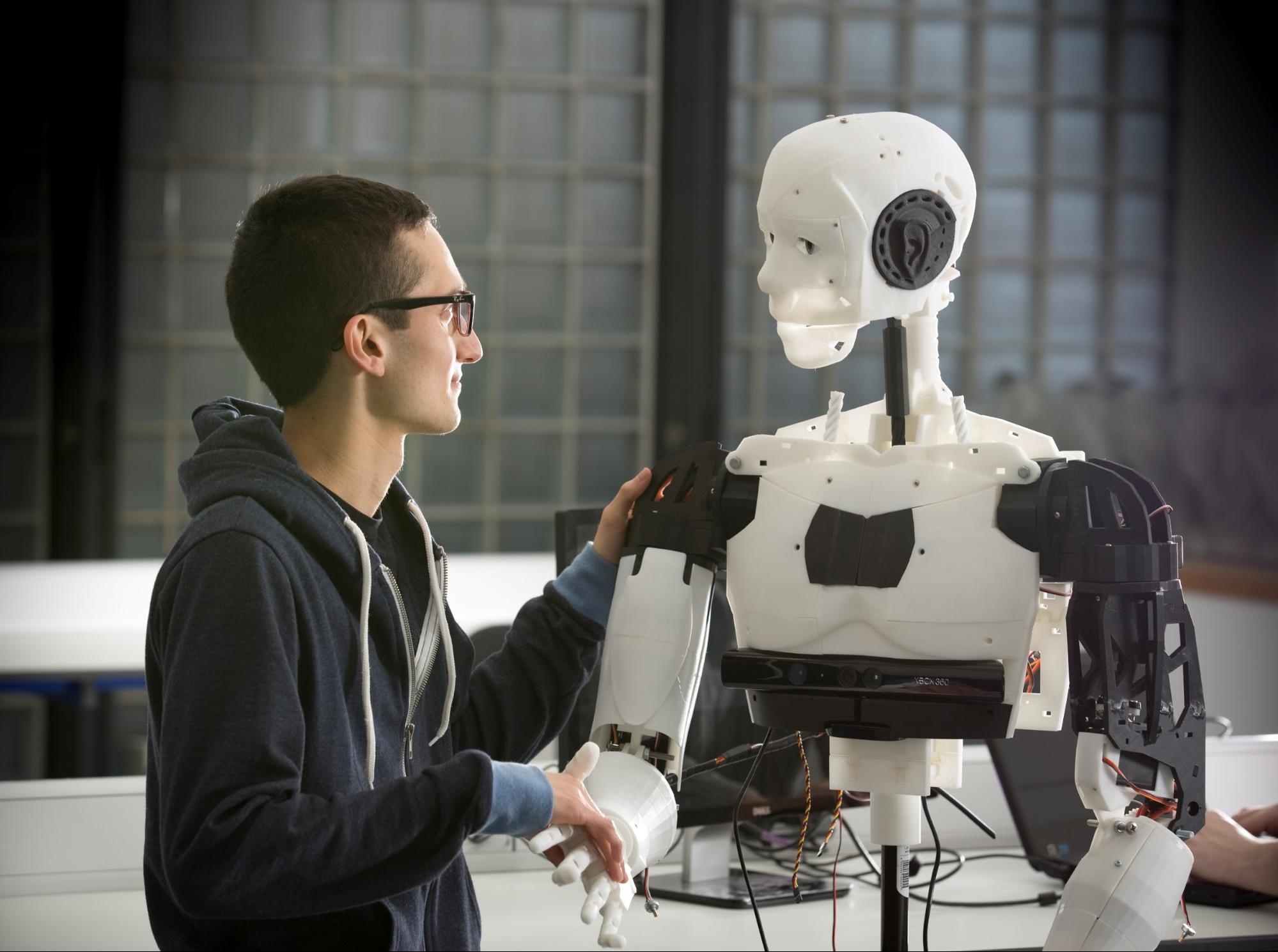

Photo via University of Lincoln.