What if you knew exactly what to say over email to get someone to like you? When to insert a smiley face, when to get to the point, when to flirt? A service called Crystal offers a cheat sheet for email finesse.

Crystal promises to help people write emails so perfectly aligned with recipients’ interests that the people who get them feel like they have found a kindred spirit. It’s a brilliant business idea. And it’s an unsettling example of how little control we have over our personal data.

When you use Crystal, you can “look up someone’s personality” and the service will coach you to pander to that person. It will also show you how it predicts you will interact with each other.

It does this by trawling the internet for data on whomever you want to impress. The company’s algorithm takes everything publicly available that the recipient has written, as well as things written about them (tweets, blog posts, LinkedIn recommendations, Yelp reviews they left five years ago), and crunches it all into an easy-to-read personality primer. The algorithm is custom-made, but draws from personality tests like Myers-Briggs, DISC, and the Five Factor Model.

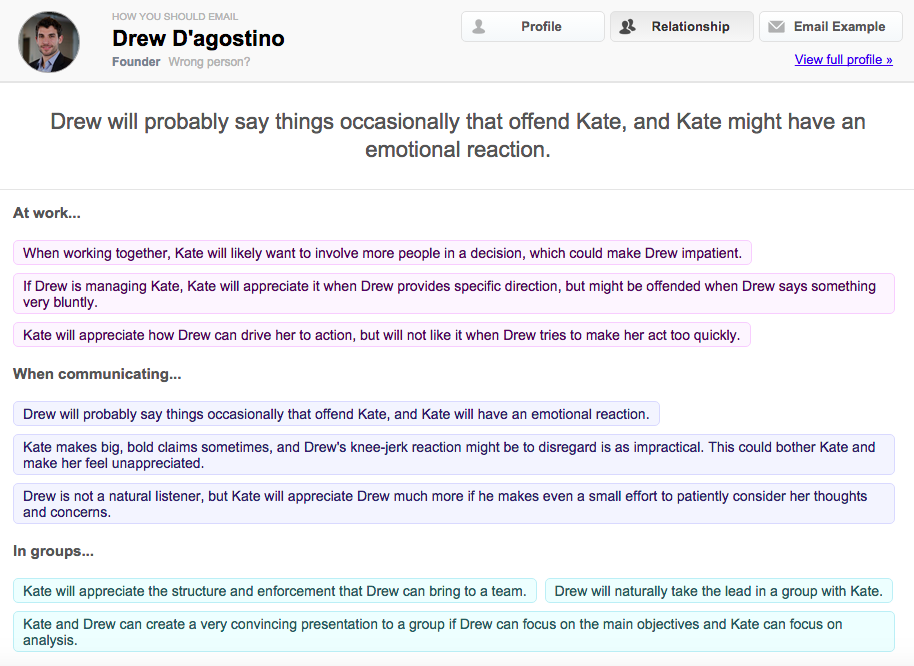

This is the predicted interaction between me and the head of Crystal.

Crystal is still new, but I can see it becoming enormously popular with marketing types, as well as people using the internet to date. And that’s because what it offers can seem like an extraordinary opportunity to be heard.

The concept of data-crunching to predict personality isn’t new, but this is the first time that personal data has been whirred into personality-based assessments specifically to make it easier to get something from people. “The only other thing in this vein that’s out there are recommender systems, like Netflix recommending movies or Amazon recommending products, but this obviously has a very different feel to it,” Jen Golbeck, a computer scientist who studies how people are profiled based on their online activity, told me.

“There’s a separate issue of manipulation. On one hand, it would be great if people start sending me concise emails because Crystal says so. I like that. On the other hand, it feels bad to have an algorithm analysing me and helping you essentially manipulate me into doing what you want.”

***

It’s not just social media power users who pop up on Crystal. Most people with personality profiles on Crystal are just regular humans who do stuff online sometimes. In minutes, I found my parents, almost all of my coworkers, my partner, my friends. I found my high school teachers and my old boss.

Even my badass dentist Dr. Dan, who is old as hell and has a negligible digital footprint, made it on. (Crystal told me I should use “emotionally expressive language” with him.)

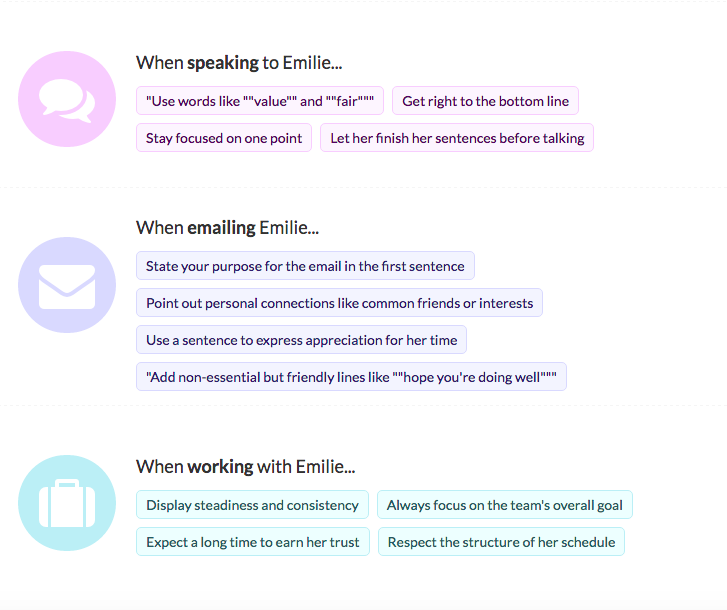

Want to manipulate my friend? This is a snippet of her profile.

When I looked myself up, I did NOT like what I found. The algorithm saw me as a long-winded, insecure exaggerator who loves to snack. Sample assessment: “It comes naturally to Kate to exaggerate details while telling a story.” Another: “Schedule meetings with food.”

I found it insulting to see my personality reduced to a series of blunt bullet points. But I can’t deny the service’s allure: now anyone who wanted to email me had a comprehensive rundown on what sort of wording and style to use to impress me.

Like all assessments from personality tests, the profiles are often so vague that you can read into them whatever you want, like a horoscope. But even if they aren’t entirely accurate, Crystal’s assessments still make people feel like they have a secret power over the person they’re emailing. They have the advantage of big data.

***

For years, companies have been using our posts, photos, likes, favourites, and web browsing history to figure out what to sell us and how to sell it. Advertisers target us based on dossiers created around our browsing histories. Facebook uses your “likes” in plans to convince you to buy stuff. Crystal is certainly not the only company trying to figure out our personalities based on what we do online.

What makes Crystal a different breed of dismaying is that our incremental, daily actions are being lumped together to create blueprints for emotional manipulation that are available to anyone who wants them. The Crystal team claims that the software helps build healthy relationships by making people communicate better. Well, maybe it can help a marketer write a less-annoying email, but I call bullshit on the “healthy relationships” thing.

This service conditions people to see others as easily parsed and easily tricked. It normalizes the practice of repackaging someone else’s words to come to a broad conclusion about them. Far from helping people understand each other, it encourages people to pass their understanding of other humans through an algorithm’s filter. It’s an attitude that further obscures us from each other.

Top GIF via Crystal