Deep in the belly of the Johnson Space Center in Houston, Texas, lie “The Domes”. Step into one of them and suddenly you’re standing on the surface of Mars, or you’re flying high above the Earth, looking out from the International Space Station. This is the Systems Engineering Simulator, where we learnt to fly, drive and design better space vehicles.

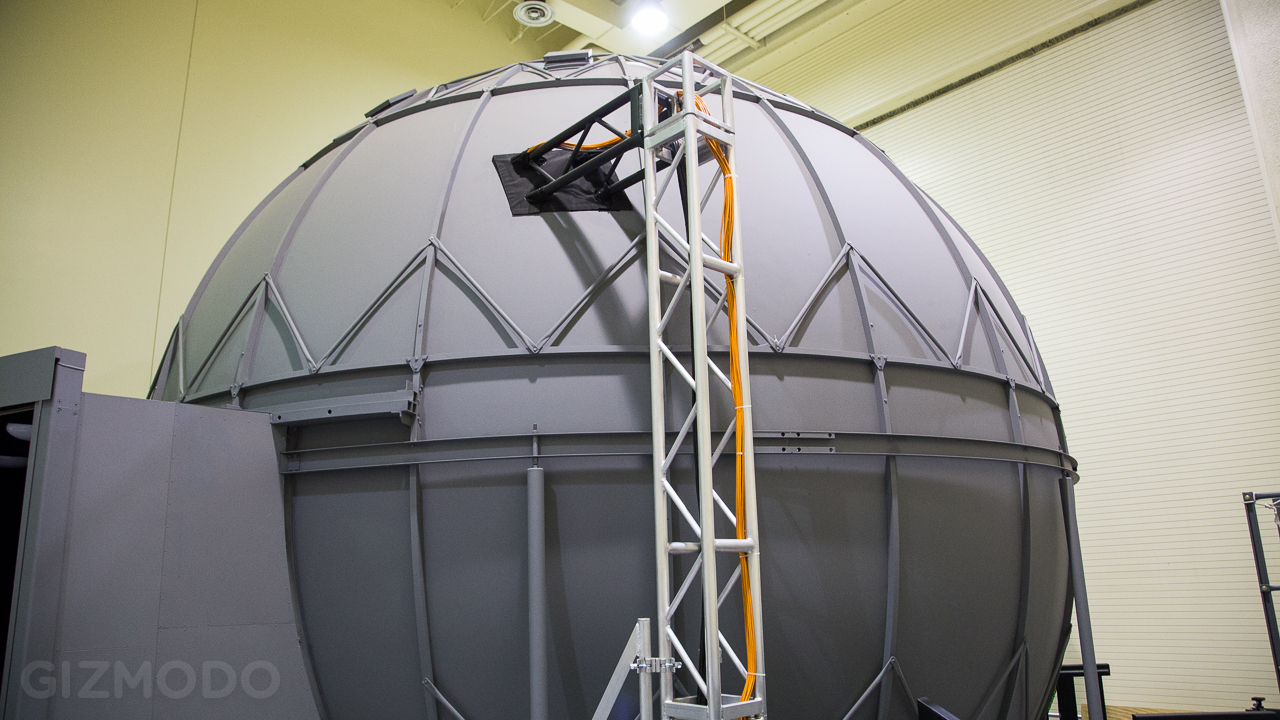

The two spherical simulators, dubbed Alpha Dome and Beta Dome, are both set up to be extremely versatile. They run simulations for crew training and engineering analysis, both for the two missions currently flying in support of the ISS and for the prototype vehicles that may fly in the future.

Each dome is inside a large room with high ceilings. From the outside they look like huge spherical silos, grey and nondescript, with nothing that would tip you off to the host of technology within. Inside, it feels kind of like an IMAX theatre, but warped. The screen has a very pronounced curve, designed to fill your peripheral vision when you’re placed in the middle. Turn around and you’ll see close to a dozen projectors mounted on various poles and catwalks, together weaving a tapestry of a single, gigantic image.

Alpha Dome

First there’s the Alpha Dome. It’s a 7m diameter dome and it has eight projectors that provide the visual models. Down the hall there’s Beta Dome, also 7m in diameter but newer and a bit more advanced — it has a wider field of view because it uses 11 projectors instead of eight, creating an extremely immersive view of the landscape before you.

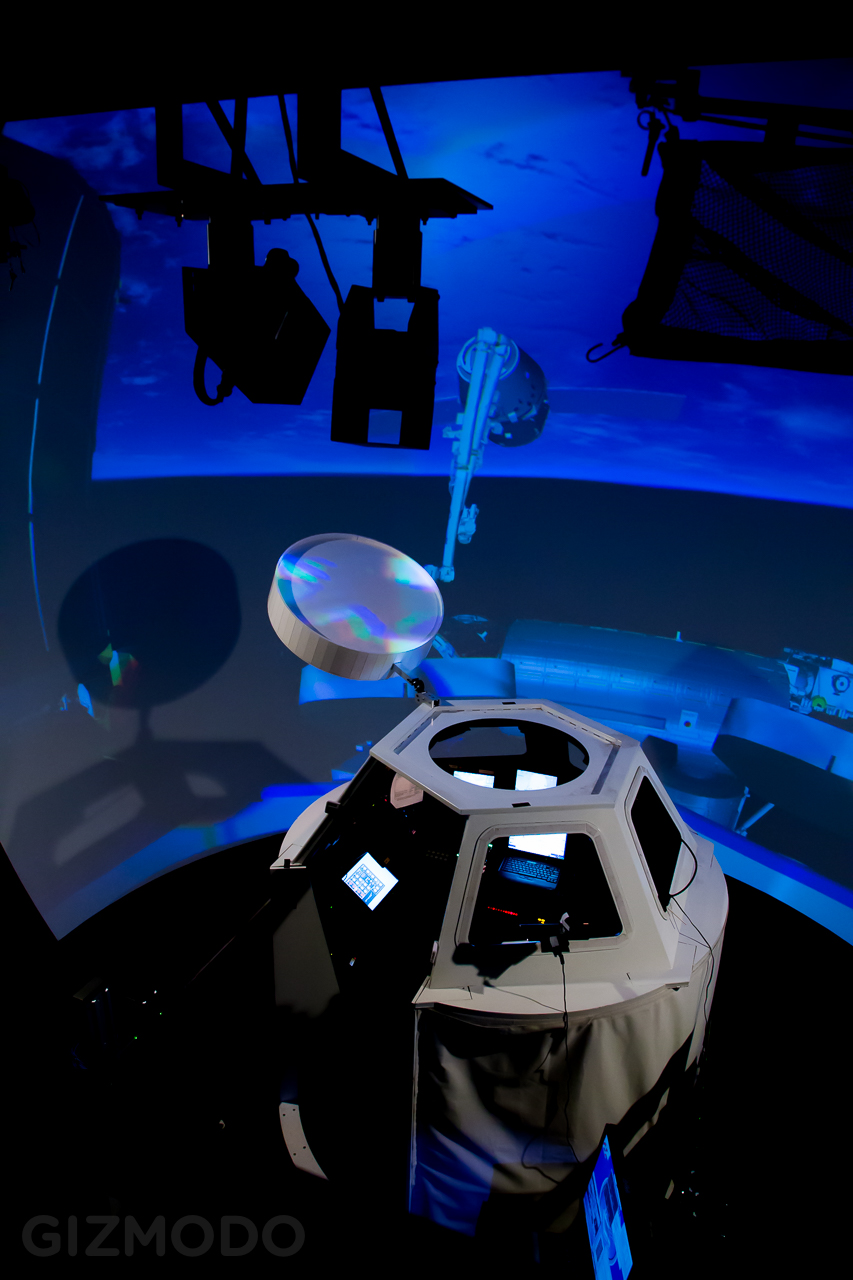

Looking down on the cupola mockup inside Alpha Dome, with parts of simulated Earth and the ISS in the background.

Alpha Dome is currently set up with a model of the cupola observatory module on the ISS. Out the windows you see what the astronauts would see: sections of the space station flying high above a stunning view of Earth. Inside are all the same controllers and displays you’ll find on the station. The cupola is where astronauts operate the station’s large robotic arm, so this setup gives them an ideal place to practice those skills without, you know, breaking anything expensive. Like the ISS. Or an astronaut.

See, when a ship makes its way to the ISS, once it gets close enough, someone onboard the station uses the remote manipulator system (a.k.a. Canadarm2) to reach out, grab the ship, and then line it up perfectly with the hatch. The arm is just a much more precise means of control than the small thrusters on the capsule itself, so once it’s grabbed on it’s far easier to guide it in. And that’s how spaceships merge, boys and girls, now let’s never speak of this again.

Beta Dome

Over at the Beta Dome is where things get a little more experimental. While the Alpha Dome is the go-to spot for training, the Beta Dome is where NASA is checking out prototype vehicles.

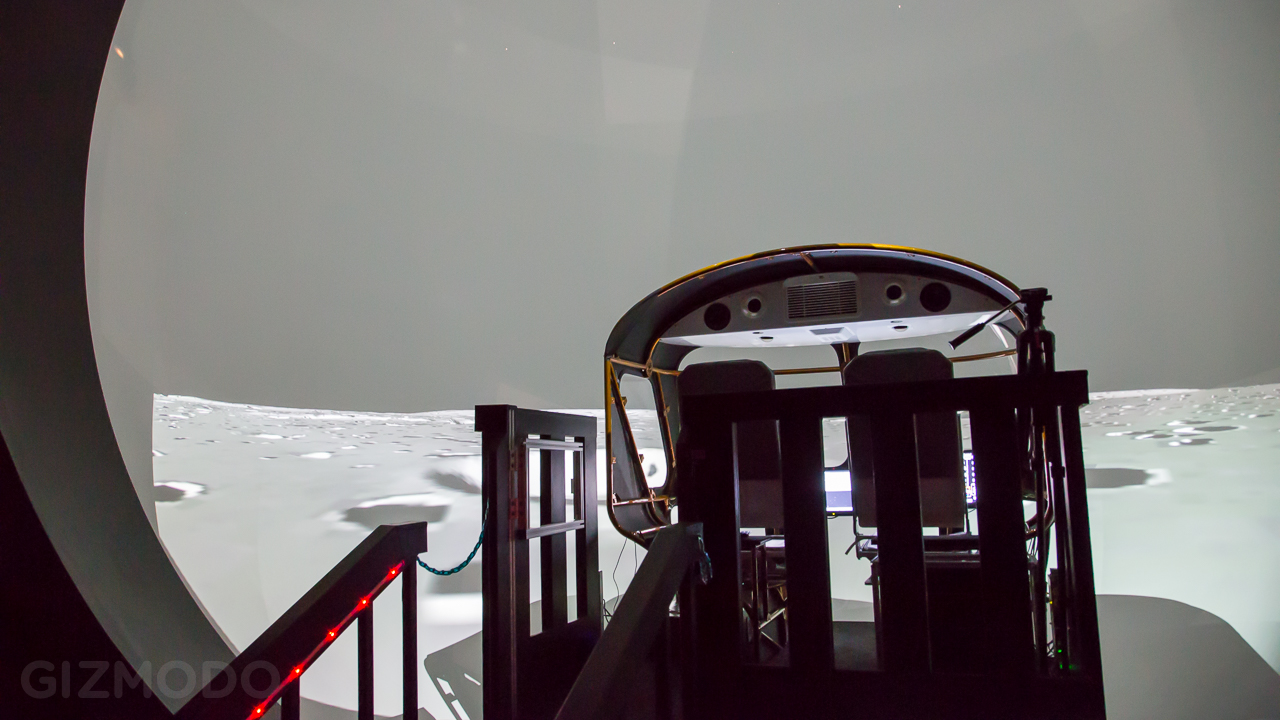

Rear view of the rover mockup inside Beta Dome

When we visited, it was set up with a concept cockpit for a rover vehicle that could potentially be driven on the Moon or on Mars. “They have also looked at using the same chassis as a multi-mission space exploration vehicle, so it could potentially go to an asteroid,” said NASA’s Amy Efting. “So we can actually run a bunch of different simulations in this same mockup. Or we have the capability to roll this mockup out and put another one in, like Orion, or something like that.”

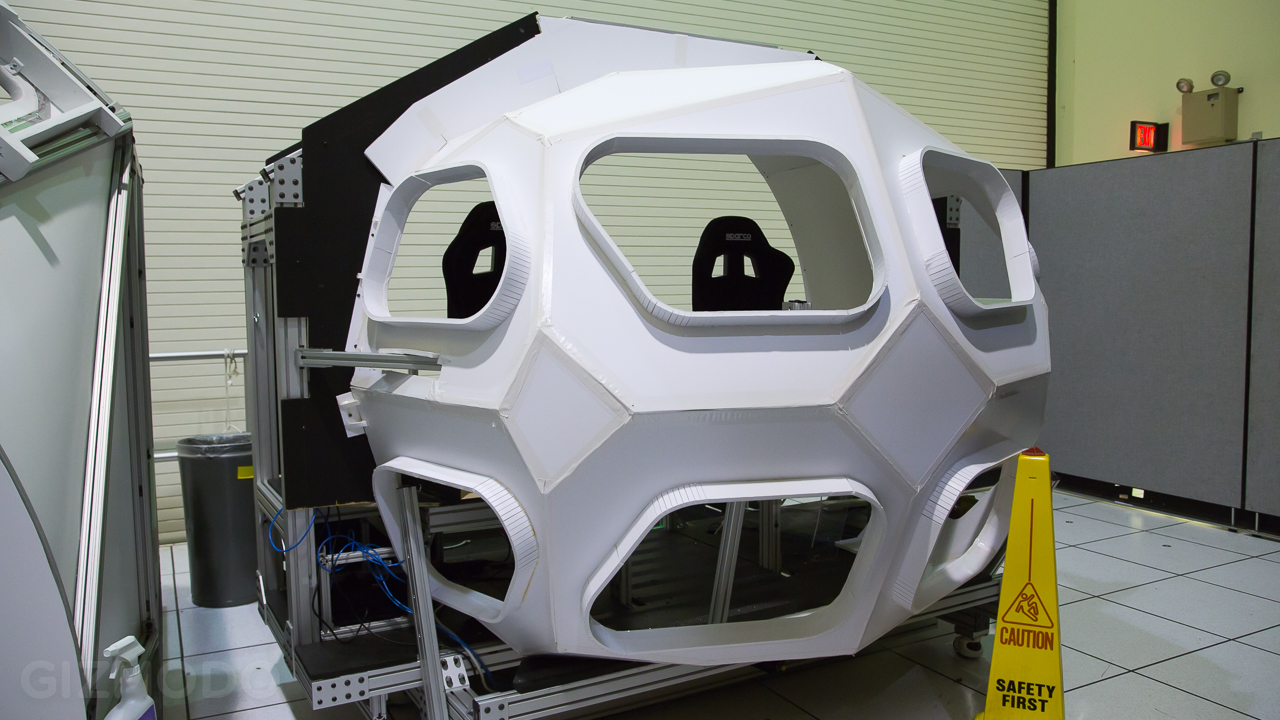

An experimental vehicle mockup, designed to be rolled into Beta Dome, waiting in the wings.

Some of the vehicles are in very early conceptual phases, where they’re basically just testing to see what is and isn’t going to work. Others are much further along, and the simulation lab is used to help fine-tune the elements of the vehicle design or systems. Do the size and angle of the main window enable the astronauts to see the terrain properly? Are the displays in a convenient and easy-to-view place? The simulation allows them to test this virtually before they spend a ton of money building hardware.

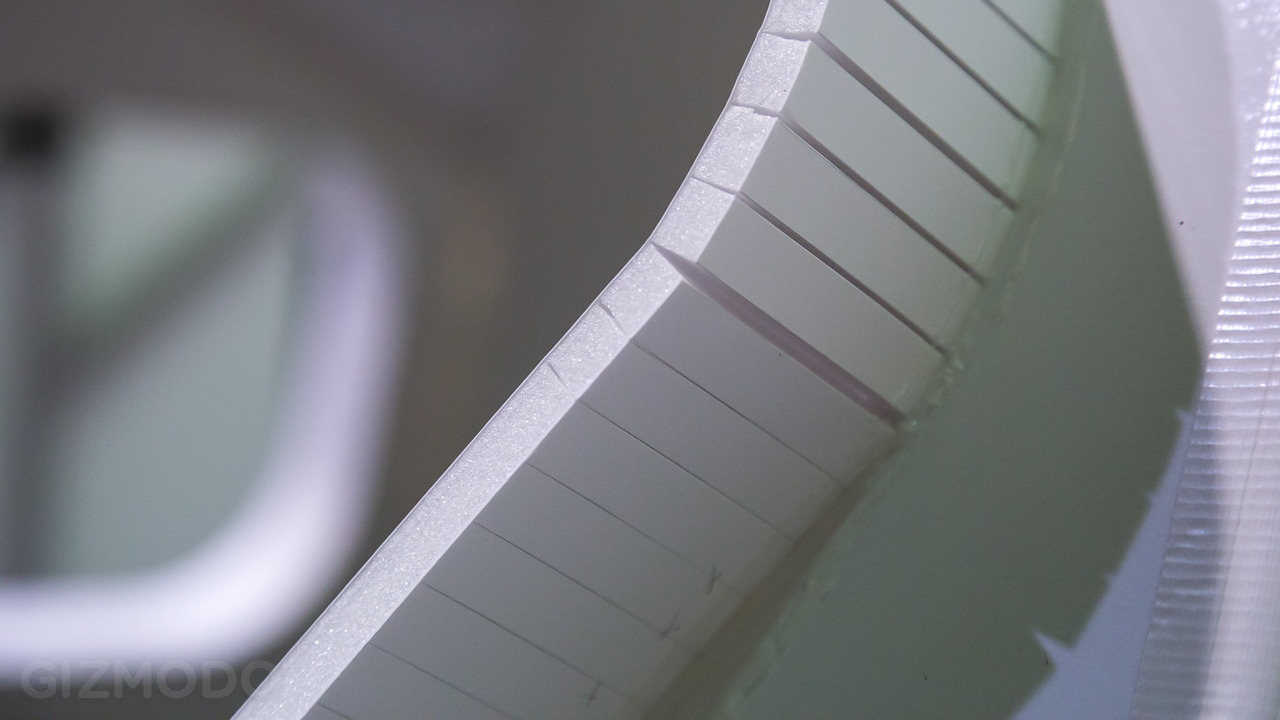

A closeup reveals that the above mockup is all foam-core and tape.

Some items are pretty solidly built, like the model of the space station’s cupola, because it’s a real thing that already exists in space, and so it isn’t going to change. For new designs, though, they build models incredibly cheaply. We’re talking about full-sized mockups made of foam-core cardboard, cut with X-Acto knives, and stuck together with tape. Fast n’ dirty and very easy on the taxpayer’s wallet. It also gives them the ability to quickly iterate and evolve their prototype designs.

Amy Efting drives the rover across the surface of the moon

What It Feels Like

Both Alpha and Beta domes present simulations that are incredibly immersive. Driving along the surface of the Moon in a rover, your whole view is filled. It’s detailed. It feels dark, cold, and lonely. We were trying to get to the (sadly non-existent) Altair lander and there was a big crater standing between us. Amy wheeled us up to the edge, and as I looked down, I felt my stomach drop. I had to shake my head to remind myself that it was just a screen.

The models they created of the Moon’s surface have a surprising amount of detail. They were originally modelled after some real terrain, but the scientists wanted to present their prototypes with a greater challenge, so they added more hazards like craters and rocks. It is possible to flip the rover in the simulation, which is exactly why they train in simulations. Flip a rover on the Moon and you are very likely dead.

I try my hand at docking the Dragon to the ISS.

Back in the Alpha Dome I got to try my hand at docking the Dragon capsule with the ISS. The controller in my left hand (a large, 5-directional joystick) was the translational control, so moving it left/right/up/down would strafe me in those directions. If I pushed the plunger in, I would move closer to the hatch on the floating Dragon. In my right hand was the rotational controller, and on the back of it was a trigger, which I was instructed not to depress until the last possible minute.

On one of the displays, I could see a close-up view of the hatch. There was a white circle on a black bar on the hatch, and my job was to keep the circle aligned with some overlaid crosshairs and keep easing the arm closer. The controls were sensitive, but there would be a bit of a delay (which is how it works in real life, as the thrusters pulse), which made it really hard to not over-correct. You have to move it just a hair, then let it settle. Then another hair, then let it settle.

As the arm got closer I was told to flip the switch that puts the Dragon into “free drift” mode. Once I did, everything started getting more squirrelly.

“What you did when you put it into free-drift mode is you basically disabled the thrusters on the Dragon so it’s not maintaining its position and attitude anymore,” Efting told me. “That’s why it gets harder as you get closer. It moves around more because orbital mechanics are having an effect on it. But they do that because they don’t want the Dragon, or any other vehicle, to be firing thrusters when it’s that close to the arm.” Makes sense.

Eventually, I got the arm close enough to the Dragon’s docking pin, and I pulled the trigger which initiated the station’s autonomous capture sequence, and touchdown! Well, on my second try. My first time through, I accidentally bumped the ship and probably killed everybody. There’s so much blood on my space-gloves.

Mike McFarlane stands in front of the computers that drive the domes.

The Brains

Not surprisingly, simulations like these take some significant horsepower to run. While they’re driven by standard, off-the-shelf desktop computers (albeit with high-end graphics cards), the real power is how they work together. Mike McFarlane, the SES Lab manager, explains:

“So, we have 11 projectors in the Beta Dome, and we have one PC that drives each of those 11 projectors. Then we have a couple other image generation systems that provide the images you see in the cockpit, and another one that calculates all the equations of motion — models for contact with the lunar surface, and stuff like that. And then we have a manager computer as well, so you do the maths and add them all up, and that’s how many computers you need to drive a simulation like this.”
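For the curious, McFarlane’s tally can be sketched as a quick sum. This is only a back-of-the-envelope version: it assumes “a couple” of cockpit image generators means exactly two, which the quote doesn’t confirm, and the labels are ours, not NASA’s.

```python
# Rough tally of the PCs behind one Beta Dome simulation, based on McFarlane's description.
# ASSUMPTION: "a couple" of cockpit image generators is taken to mean exactly two.
# The dictionary keys are illustrative labels, not official NASA names.
beta_dome_computers = {
    "projector_pcs": 11,            # one PC per projector
    "cockpit_image_generators": 2,  # assumed value for "a couple"
    "equations_of_motion": 1,       # dynamics and surface-contact models
    "manager": 1,                   # coordinates the rest
}

total_computers = sum(beta_dome_computers.values())
print(f"Roughly {total_computers} computers per simulation")
```

Under that assumption the count comes to about 15 machines for a single rover drive.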

Not all simulations are created equal, though. Some, where they’re testing prototype vehicles, are actually a lot lighter, because they make guesses and assumptions about what these things might look like, and so they can be a little rougher.

In contrast, simulations that involve the ISS are massive. Not only do they have to include all of the real details that are on the station, which requires very high fidelity dynamics, but the simulation has to include the ISS’ actual flight software. The actual algorithms that are flown aboard the space station are incorporated into the SES Lab’s simulations, which, as you might imagine, are incredibly complex.

The simulator domes are another tool in NASA’s arsenal to train and troubleshoot before sending actual humans into space, and ultimately they’re one of the easiest to use. You don’t have to don any virtual reality helmets, or gloves, or suits. You can just sit down, flip a few switches, and lay some skid-marks down on the surface of Mars.

Picture: Brent Rose, edited by Nick Stango

Gizmodo’s Space Camp is all about the under-explored side of NASA, from robotics to medicine to deep-space telescopes to art. All this week we’ll be coming at you direct from NASA’s Johnson Space Center in Houston, Texas, shedding a light on this amazing world.

Special thanks to everybody at NASA JSC for making this happen. The list of thank yous would take up pages, but for giving us access, and for being so generous with their time, we are extremely grateful to everyone there.

Space Camp® is a registered trademark/service of the US Space & Rocket Center. This article and subsequent postings have not been written or endorsed by the US Space & Rocket Center or Space Camp®.