In a scenario straight out of “Enhance, enhance!”, MIT scientists have figured out that the tiny vibrations of ordinary objects like a potato chip bag, a glass of water or even a plant can be reconstructed into intelligible speech. All it takes is a camera and a snappy algorithm. Take a listen.

Sound waves, after all, are just disturbances in the air. When sound hits something light and delicate, like a potato chip bag, the object will vibrate ever so slightly. Now, you’ve probably noticed that house plants and potato chip bags do not visibly sway and shake when you have a conversation. That is because the movements are far too small to see. To capture movements as small as a tenth of a micrometre, or five thousandths of a pixel, the team tracked the colour of single pixels over time. Here’s how it works, as explained by a press release from MIT:

Suppose, for instance, that an image has a clear boundary between two regions: Everything on one side of the boundary is blue; everything on the other is red. But at the boundary itself, the camera’s sensor receives both red and blue light, so it averages them out to produce purple. If, over successive frames of video, the blue region encroaches into the red region — even less than the width of a pixel — the purple will grow slightly bluer. That colour shift contains information about the degree of encroachment.
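To make the purple-pixel idea concrete, here is a toy sketch in Python. It assumes the boundary pixel's colour is a simple linear blend of pure blue and pure red, weighted by how much of the pixel each region covers. That blending model, the colour values and the function names are illustrative assumptions, not the researchers' actual algorithm.

```python
# Toy model (an assumption, not the MIT team's method): a boundary pixel's
# colour is a linear mix of the two region colours, weighted by coverage.
BLUE = (0.0, 0.0, 1.0)
RED = (1.0, 0.0, 0.0)


def mix(blue_fraction):
    """Colour of a pixel that is `blue_fraction` blue and the rest red."""
    return tuple(blue_fraction * b + (1.0 - blue_fraction) * r
                 for b, r in zip(BLUE, RED))


def coverage_fraction(observed, color_a=BLUE, color_b=RED):
    """Estimate how much of the pixel is covered by color_a.

    Projects the observed colour onto the line running from color_b
    to color_a in RGB space.
    """
    direction = [a - b for a, b in zip(color_a, color_b)]
    numerator = sum((o - b) * d
                    for o, b, d in zip(observed, color_b, direction))
    denominator = sum(d * d for d in direction)
    return numerator / denominator


# Two successive frames: the blue region creeps 0.005 pixels into the red.
frame_1 = mix(0.500)
frame_2 = mix(0.505)

shift_in_pixels = coverage_fraction(frame_2) - coverage_fraction(frame_1)
print(f"Estimated boundary shift: {shift_in_pixels:.4f} pixels")
```

Under this simplified model, a shift of five thousandths of a pixel shows up as a tiny but measurable change in the purple, which is the kind of signal the press release describes.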

At first, the team used high-speed cameras shooting 2000 to 6000 frames per second through soundproof glass. At those rates, the camera is capturing frames faster than the frequencies of the sound it is trying to recover. As you can hear in the video above, speech recovered from a vibrating plant is fairly understandable.
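Why the frame rate matters can be shown with a little arithmetic. By the standard sampling rule of thumb (the Nyquist limit), a camera can only faithfully capture vibrations below half its frame rate. Anything faster gets "folded" down to a false, lower frequency. The sketch below uses a 440 Hz tone purely as an example; the function names are hypothetical.

```python
def nyquist_limit_hz(frames_per_second):
    """Highest frequency that can be captured without aliasing."""
    return frames_per_second / 2.0


def apparent_frequency_hz(tone_hz, frames_per_second):
    """Frequency a pure tone appears to have after sampling at this rate."""
    nearest_multiple = round(tone_hz / frames_per_second) * frames_per_second
    return abs(tone_hz - nearest_multiple)


TONE_HZ = 440  # example tone, chosen only for illustration

for fps in (60, 2000, 6000):
    print(f"{fps:>4} fps: limit {nyquist_limit_hz(fps):.0f} Hz, "
          f"a {TONE_HZ} Hz tone appears as "
          f"{apparent_frequency_hz(TONE_HZ, fps)} Hz")
```

At 2000 or 6000 frames per second the example tone survives intact, while at 60 frames per second it is folded down to a much lower frequency.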

Picture: MIT video

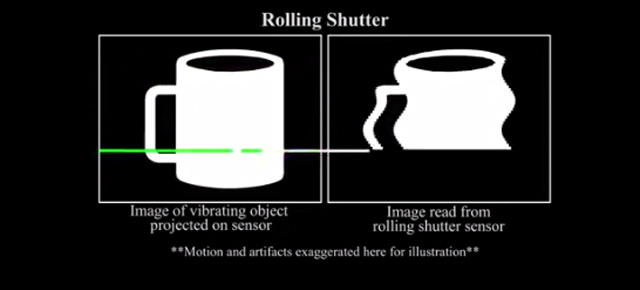

But the coolest part is that the team was able to extract sound from ordinary 60-frame-per-second video cameras by exploiting a technical quirk. The camera’s sensor captures images by scanning horizontally, so certain parts of the image are actually recorded slightly after others. This rolling shutter quirk let the team reconstruct audio even from video that was shot at rates slower than the frequency of sound. It’s definitely fuzzier than with a high-speed camera, but one might still identify the number of speakers.
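A rough way to see why the rolling shutter helps: if each scan line is recorded at a slightly different moment, then each line is effectively its own tiny time sample. The sketch below assumes a hypothetical sensor with 1080 scan lines that takes the whole frame period to read out. Real sensors differ, and the researchers' actual reconstruction is more involved than this.

```python
def row_timestamps(frame_index, fps, rows, readout_fraction=1.0):
    """Capture time (in seconds) of each scan line in one frame.

    readout_fraction is the share of the frame period spent scanning
    (an assumed parameter; 1.0 means there is no idle gap between frames).
    """
    frame_period = 1.0 / fps
    row_delay = frame_period * readout_fraction / rows
    frame_start = frame_index * frame_period
    return [frame_start + row * row_delay for row in range(rows)]


FPS = 60
ROWS = 1080  # hypothetical sensor height

times = row_timestamps(frame_index=0, fps=FPS, rows=ROWS)
row_delay = times[1] - times[0]
effective_rate_hz = 1.0 / row_delay

print(f"Delay between scan lines: {row_delay * 1e6:.2f} microseconds")
print(f"Effective sampling rate: {effective_rate_hz:.0f} samples per second")
```

Under these assumptions, a 60 frames per second camera takes tens of thousands of line-by-line samples every second, which hints at how sound could be recovered from footage that looks far too slow.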

The researchers are presenting their work at the computer graphics conference Siggraph this month. We can think of a few other people *cough* who might be interested. [MIT]