What if, the next time you played a video game, the main character not only looked like you but had the same body, same clothes, same everything? How would it change the way you relate to the game? How would it change the way you relate to the other characters in it? I found out.

A team co-led by Ari Shapiro at the Institute for Creative Technologies at USC just gave us an exclusive first look at a new system they are calling Fast Avatar Capture. It scans you and creates a 3D avatar you can put inside a game. And it does it in two minutes, using a plain old Xbox Kinect.

If it sounds familiar, you might be thinking of the similar proof-of-concept rig that Nikon showed off at CES. There, you stood inside a gigantic sphere tricked out with 64 Nikon DSLR cameras. It took an image of you from every angle, and then sent that data through three computers. Two hours of processing later, you had a video-game-ready avatar. It looked awesome when it was done. It’s also totally impractical for home use.

By contrast, the ICT set-up is not only something most people can afford; it’s one that well over 20 million people already own.

How It Works

I stood directly in front of a Kinect and held still while it looked me up and down, which actually felt a little like being creeped on. Then I turned 90 degrees so it could scan my side, another 90 so it could scan my back, then one more 90 for my other side. Then the computer (in this case a PC tower, but someday, ideally, it’ll be an Xbox) processed my images and went to work constructing a 3D model with textures on top and joints in the right places. It then fused my avatar with a number of behaviour libraries the ICT has set up ahead of time: running, jumping, and kicking, for example.
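For the curious, here is a rough idea of what combining four quarter-turn scans can look like in code. This is only a toy sketch, not ICT's actual pipeline: the function names are made up for illustration, and it assumes each scan is already a list of (x, y, z) points centred on the axis I was turning around, with y pointing up. The sign of the rotation is also an assumption that depends on which way the subject turns.

```python
import math


def rotate_about_vertical(points, degrees):
    """Rotate (x, y, z) points about the vertical y axis by `degrees`."""
    theta = math.radians(degrees)
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x + s * z, y, -s * x + c * z) for x, y, z in points]


def merge_quarter_turn_scans(scans):
    """Merge scans taken after successive 90-degree turns into one point list.

    Hypothetical helper: scan i is assumed to have been captured after the
    subject turned 90 * i degrees, so it is rotated back by that amount to
    put every scan in the frame of the first one.
    """
    merged = []
    for i, scan in enumerate(scans):
        merged.extend(rotate_about_vertical(scan, -90 * i))
    return merged


if __name__ == "__main__":
    # One point seen by the sensor in each of the four poses.
    scans = [[(0.0, 1.0, 1.0)] for _ in range(4)]
    for point in merge_quarter_turn_scans(scans):
        print(tuple(round(v, 3) for v in point))
```

A real system would still have to line the scans up precisely, stitch them into a surface, add textures and fit a skeleton, which is where the hard work is.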

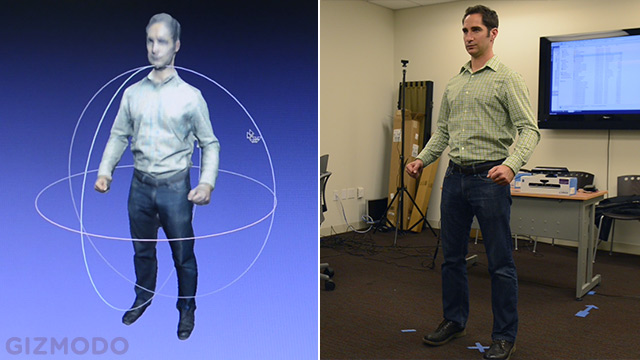

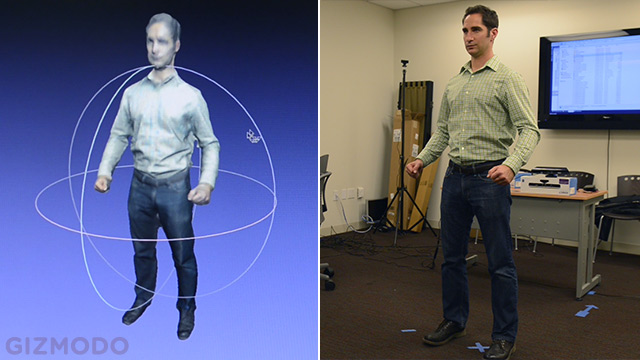

In two minutes, it spat out this:

Now, admittedly, this isn’t anywhere near the fidelity that the Nikon rig had — it looked like someone smashed the side of my face with a bowling ball — but that should change soon. This is, after all, the very first generation of this software, and it was shot with the original Kinect. The Kinect 2 photographs at a much higher resolution, which will give this project a major level-up in image quality.

“Right now the characters are suitable for third-person video games and crowd simulations. They’re very recognisable from a distance, but you wouldn’t necessarily want them for face to face interactions,” Shapiro told us. “As these sensors improve, as our algorithms improve, and as we come up with ways to capture different parts of the body at higher resolutions, we’ll be able to incorporate more and more of your behaviour into the character.”

On the simplest side, that might mean doing the full-body scan, and then putting your face right up next to the Kinect to get a high-res scan of your face to be incorporated. On the more complex side, it could evolve to you walking around the room and going through some habitual motions with your hands, so not only would it look like you, but it could learn to walk like you, stand like you, and gesture like you, too. All of a sudden, you’re not just controlling Solid Snake. You are Solid Snake.

It sounds far-fetched, but it may not be far away. Shapiro said he expects better face and hand fidelity and many more behaviours to be added within the year.

Get in the Game

We’ve all spent hours trying to make Madden football players who look like us, or WWE wrestlers, or even bobble-headed Miis that bear a passing resemblance. Now, instead of a tedious approximation, we can have the real thing.

“For me, it changes what an avatar is,” says Shapiro. “You don’t have to spend time constructing an avatar anymore. If you think about how people change over time, you might capture an avatar every day. You might see how people evolve over time.”

Consider the possibilities, too, of a mixed-reality avatar, where you scan yourself and then manipulate your own features. You could give yourself a giant head and tiny legs, or add a mohawk, or a disco unitard. How much freedom you would have would be up to the game developers, but as far as the technology goes, it’s well within reach.

When it comes to gaming, Shapiro sees heightened human interaction within the world of the game as a major selling point:

“I think games that have a social aspect, where you really interact with other people are going to be the most interesting and most used. It’s something when you see an avatar of another person you don’t recognise — you might think it’s an interesting technology. It’s something else when you see somebody that you know — your family, your friends, your children. Then it has meaning to you.”

That’s probably the best use case, sure, but I can’t help but wonder: What would it be like to play a Mortal Kombat-style game as yourselves, where you rip out your friend’s spine and show it to him before he does? Or you’re in a battle royale in a Halo-type game, you have your sniper scope trained on the back of someone’s head, she turns around, and it’s your girlfriend. Would it be horribly disturbing, or just hilarious? We’re going to find out soon enough.

Non-Gaming Applications

The implications go well beyond your Xbox. The U.S. military (which provides some of the funding for this project) is already beginning to incorporate virtual reality and other computer simulations into training, and they have expressed a great interest in personalizing the characters in the simulations they run.

“They have simulations for soldiers that do different war-game scenarios, squad formations, and whatnot,” Shapiro told us. “They’re interested in coming up with an individualized representation of each soldier, and this technology could be used for that purpose. So even if you’re wearing the same uniform as everybody else, your size is different, the faces are different. It’s actually very distinct when you’re going person by person.”

In a combat situation, it’s critical to know that you’re following (or protecting, or shooting) the right person. That’s why it’s crucial that these simulations are as realistic as possible, so that when you are faced with the actual situation you respond automatically. This would be a dramatic step closer to real life from generic avatars with numbers on them.

And then there’s sex, which is an inevitability for all things internet. Just how many virtual positions might these developers come up with? Will VR girls be the new cam girls? What would it be like to see a replica of yourself engaging in a sexual act that wouldn’t be even remotely possible for you in real life (since you’re not triple-jointed)? Would it be a turn-on, or would it be mind-bendingly disturbing? We’re probably going to find out before long.

Something else that Shapiro said stuck with me: “You could create a crowd simulation very quickly with a variety of different people.” While that’s not so bad if the crowd is filled with friends, what if you run out of personal friends? Can you populate a crowd with the faces of other users? What if someone wanted to use your avatar in a more involved way? Who owns your avatar after you upload it?

Obviously, it will depend on the game and/or the gaming system, but you might want to take a careful look at the terms of service when you install a game. Or who knows? You could end up getting stabbed by a prostitute in someone else’s copy of Grand Theft Auto.

So… When?

It’s pretty remarkable what the ICT system can do already, given how new it is. We asked Shapiro if they had already spoken to any video game companies about Fast Avatar Capture. He said they hadn’t yet, but they were obviously hoping there would be interest. The animation half of the software, called SmartBody, is already open-sourced and available online. The newer capture part of the technology came from other research groups within USC, led by Evan Suma, Gérard Medioni, and others (full credits here).

When asked if the capture software might go free and open-source as well, Shapiro got a little coy, saying they would have to see. We’re guessing that a large video game company (possibly Microsoft?) will plunk down a large chunk of change to call dibs before that can happen. Then the question of when we’ll be able to get it in our homes will really hinge on whoever owns the licenses.

What’s exciting is that we already have a functional system here. All that’s left is refining and expanding. Increasing the resolution is obviously a big box to check, but it sounds like one of the simpler tasks they have to tackle. Next they’ll have to make the character movement more natural (I swear I don’t run like that) and expand the repertoire of behaviours. From there, though, it could be off to the races.

The point of the system, as Shapiro puts it, is “to connect you better with your virtual self.” In an age when so many of us are torn between plugging in and unplugging entirely, it’s interesting to contemplate how plugging in more completely might change the landscape of human experience in the virtual world. [Fast Avatar Capture]

Huge thanks to everyone at USC Institute for Creative Technologies for their time.

Camera: Judd Frazier

Edit: Michael Hession