Microsoft’s Kinect is great, but it has its limitations. MIT’s new nano-camera is less limited: it uses similar technology, but can work the same magic with translucent objects and even in snow or rain.

Using the same time-of-flight technology as the Kinect, the camera works out where objects are by measuring how long it takes a light signal to bounce off a surface and return to the sensor. But unlike Microsoft’s offering, this thing ain’t fooled by rain or fog, and it works effectively even with translucent objects, such as items made from glass. So how the hell does it do that? Ramesh Raskar from MIT explains:

“We use a new method that allows us to encode information in time. So when the data comes back, we can do calculations that are very common in the telecommunications world, to estimate different distances from the single signal… People with shaky hands tend to take blurry photographs with their mobile phones because several shifted versions of the scene smear together. By placing some assumptions on the model — for example that much of this blurring was caused by a jittery hand — the image can be unsmeared to produce a sharper picture.”
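Raskar’s description combines two ideas: ranging by time of flight (distance is half the round-trip time multiplied by the speed of light) and telecom-style coding that lets several echoes be pulled apart from one return signal. Here is a minimal sketch of that combination, assuming a binary pseudo-random code and a matched filter (circular cross-correlation). It is a textbook stand-in rather than the team’s actual reconstruction, which the quote doesn’t spell out. The 31-chip code, its LFSR polynomial, the 1 ns sample period and the echo delays and strengths are all hypothetical.

```python
# Hypothetical sketch: a coded time-of-flight return containing two overlapping
# echoes (e.g. a glass pane and an object behind it) is separated by circular
# cross-correlation with the transmitted code, i.e. a matched filter.
# Code length, LFSR polynomial, sample period, delays and amplitudes are
# illustrative assumptions, not values from the MIT camera.

SPEED_OF_LIGHT = 299_792_458.0  # metres per second
SAMPLE_PERIOD = 1e-9            # assumed: one sample per nanosecond


def m_sequence():
    """Period-31 +/-1 m-sequence from a 5-bit LFSR (polynomial x^5 + x^2 + 1)."""
    state = [1, 1, 1, 1, 1]
    seq = []
    for _ in range(31):
        seq.append(1 if state[4] else -1)  # emit the oldest bit as +/-1
        feedback = state[4] ^ state[2]     # a[n+5] = a[n] XOR a[n+2]
        state = [feedback] + state[:4]     # shift the new bit in
    return seq


def received_signal(code, echoes):
    """Sum of circularly delayed, scaled copies of the code (noise-free)."""
    n = len(code)
    return [sum(amp * code[(i - delay) % n] for delay, amp in echoes)
            for i in range(n)]


def correlate(code, signal):
    """Circular cross-correlation, normalised so a lone echo peaks at its amplitude."""
    n = len(code)
    return [sum(signal[i] * code[(i - lag) % n] for i in range(n)) / n
            for lag in range(n)]


def echo_distances(code, signal, threshold=0.1):
    """Return (delay_in_samples, distance_in_metres) for each correlation peak."""
    result = []
    for lag, value in enumerate(correlate(code, signal)):
        if value > threshold:
            round_trip = lag * SAMPLE_PERIOD
            # Halve the path: the light travels out to the surface and back.
            result.append((lag, SPEED_OF_LIGHT * round_trip / 2))
    return result


code = m_sequence()
# Assumed scene: faint reflection from glass at 4 samples, strong one from the object at 11.
signal = received_signal(code, [(4, 0.3), (11, 1.0)])
for lag, metres in echo_distances(code, signal):
    print(f"echo at {lag} ns -> {metres:.3f} m")
# prints "echo at 4 ns -> 0.600 m" then "echo at 11 ns -> 1.649 m"
```

Because an m-sequence’s autocorrelation is one sharp spike with a flat floor, each echo shows up as its own peak, so the faint glass reflection doesn’t hide the object behind it. A real sensor would also have to deal with noise and with delays that fall between samples.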

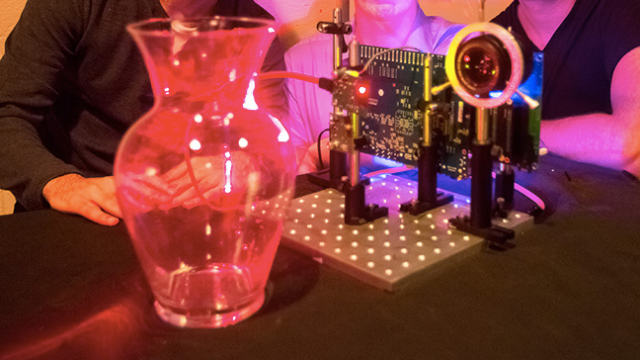

In the past, the results the team has produced (often referred to as nanophotography) were only possible with a $US500,000 femto-camera. But by moving much of the work into software, the team can now build a device that provides the same features for just $US500.

Not only does that mean the Kinects of the future could be even more awesome than they are right now, it also suggests that the sensors needed by self-driving cars and the like will become even more effective. This thing can make light work of telling puddles from pedestrians in the pouring rain, and that can only be a great thing. [MIT via Engadget]